Markus Schoeler

SparseRadNet: Sparse Perception Neural Network on Subsampled Radar Data

Jun 18, 2024

Abstract:Radar-based perception has gained increasing attention in autonomous driving, yet the inherent sparsity of radars poses challenges. Radar raw data often contains excessive noise, whereas radar point clouds retain only limited information. In this work, we holistically treat the sparse nature of radar data by introducing an adaptive subsampling method together with a tailored network architecture that exploits the sparsity patterns to discover global and local dependencies in the radar signal. Our subsampling module selects a subset of pixels from range-doppler (RD) spectra that contribute most to the downstream perception tasks. To improve the feature extraction on sparse subsampled data, we propose a new way of applying graph neural networks on radar data and design a novel two-branch backbone to capture both global and local neighbor information. An attentive fusion module is applied to combine features from both branches. Experiments on the RADIal dataset show that our SparseRadNet exceeds state-of-the-art (SOTA) performance in object detection and achieves close to SOTA accuracy in freespace segmentation, meanwhile using sparse subsampled input data.

Semantic Segmentation of Radar Detections using Convolutions on Point Clouds

May 22, 2023Abstract:For autonomous driving, radar sensors provide superior reliability regardless of weather conditions as well as a significantly high detection range. State-of-the-art algorithms for environment perception based on radar scans build up on deep neural network architectures that can be costly in terms of memory and computation. By processing radar scans as point clouds, however, an increase in efficiency can be achieved in this respect. While Convolutional Neural Networks show superior performance on pattern recognition of regular data formats like images, the concept of convolutions is not yet fully established in the domain of radar detections represented as point clouds. The main challenge in convolving point clouds lies in their irregular and unordered data format and the associated permutation variance. Therefore, we apply a deep-learning based method introduced by PointCNN that weights and permutes grouped radar detections allowing the resulting permutation invariant cluster to be convolved. In addition, we further adapt this algorithm to radar-specific properties through distance-dependent clustering and pre-processing of input point clouds. Finally, we show that our network outperforms state-of-the-art approaches that are based on PointNet++ on the task of semantic segmentation of radar point clouds.

* 5th International Conference on Artificial Intelligence, Automation and Control Technologies (AIACT 2021), 26-28 March 2021, Shanghai, China

Semantic Pose using Deep Networks Trained on Synthetic RGB-D

Aug 04, 2015

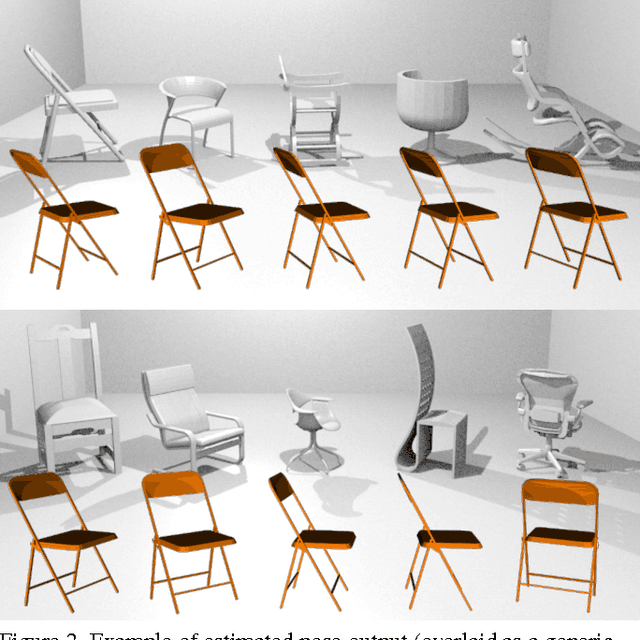

Abstract:In this work we address the problem of indoor scene understanding from RGB-D images. Specifically, we propose to find instances of common furniture classes, their spatial extent, and their pose with respect to generalized class models. To accomplish this, we use a deep, wide, multi-output convolutional neural network (CNN) that predicts class, pose, and location of possible objects simultaneously. To overcome the lack of large annotated RGB-D training sets (especially those with pose), we use an on-the-fly rendering pipeline that generates realistic cluttered room scenes in parallel to training. We then perform transfer learning on the relatively small amount of publicly available annotated RGB-D data, and find that our model is able to successfully annotate even highly challenging real scenes. Importantly, our trained network is able to understand noisy and sparse observations of highly cluttered scenes with a remarkable degree of accuracy, inferring class and pose from a very limited set of cues. Additionally, our neural network is only moderately deep and computes class, pose and position in tandem, so the overall run-time is significantly faster than existing methods, estimating all output parameters simultaneously in parallel on a GPU in seconds.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge