Mark Harley

Out-of-sample scoring and automatic selection of causal estimators

Dec 20, 2022

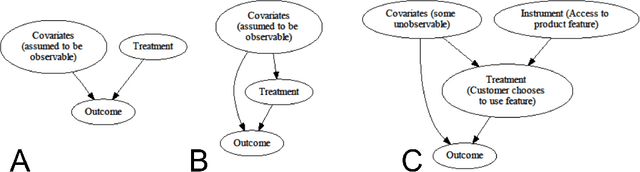

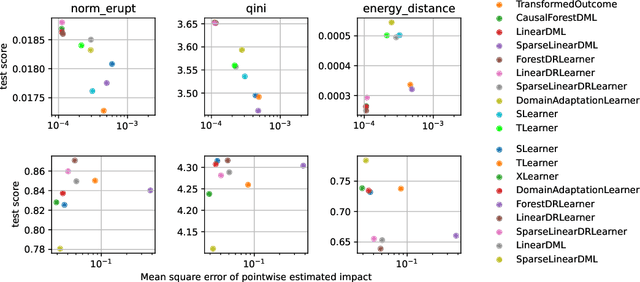

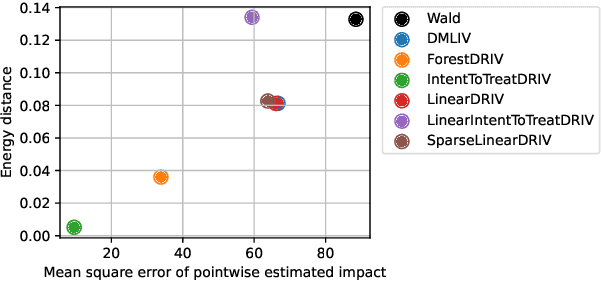

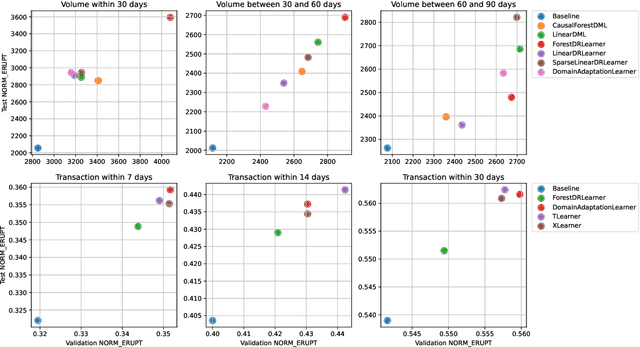

Abstract: Recently, many causal estimators for Conditional Average Treatment Effect (CATE) and instrumental variable (IV) problems have been published and open sourced, allowing one to estimate the granular impact of both randomized treatments (such as A/B tests) and user choices on the outcomes of interest. However, the practical application of such models has been hampered by the lack of a valid way to score their performance out of sample, in order to select the best one for a given application. We address that gap by proposing novel scoring approaches for both the CATE case and an important subset of instrumental variable problems, namely those where the instrumental variable is customer access to a product feature and the treatment is the customer's choice to use that feature. Being able to score model performance out of sample allows us to apply hyperparameter optimization methods to causal model selection and tuning. We implement this in an open source package that relies on the DoWhy and EconML libraries for the implementation of causal inference models (and also includes a Transformed Outcome model implementation), and on FLAML for hyperparameter optimization and for the component models used in the causal models. We demonstrate on synthetic data that, in the randomized CATE and IV cases, optimizing the proposed scores is a reliable method for choosing the model and its hyperparameter values, yielding estimates close to the true impact. Further, we provide examples of applying these methods to real customer data from Wise.
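The abstract mentions a Transformed Outcome model. As a minimal sketch, assuming a randomized binary treatment W with a known assignment probability p, the classical transformed outcome Y* = Y (W - p) / (p (1 - p)) has conditional expectation equal to the CATE given the features. Because E[Y* - CATE | X] = 0, the mean squared error of a CATE prediction against Y* on held-out data ranks models the same way, in expectation, as the (unobservable) error against the true CATE. This is a standard construction shown for illustration, not necessarily the score proposed in the paper; the function names below are hypothetical.

```python
from statistics import fmean


def transformed_outcome(y, w, p):
    """Y* = Y * (W - p) / (p * (1 - p)) for a randomized binary treatment.

    Assumes W is 0/1 and P(W = 1) = p is known and constant (e.g. an A/B test).
    """
    if not 0.0 < p < 1.0:
        raise ValueError("propensity p must be strictly between 0 and 1")
    return [yi * (wi - p) / (p * (1.0 - p)) for yi, wi in zip(y, w)]


def transformed_outcome_mse(cate_pred, y, w, p):
    """Held-out score: mean squared error between predicted CATE and Y* (lower is better)."""
    y_star = transformed_outcome(y, w, p)
    return fmean((tau - ys) ** 2 for tau, ys in zip(cate_pred, y_star))
```

In a model-selection loop, each candidate estimator would be fit on a training split and its CATE predictions scored on a held-out split with `transformed_outcome_mse`; the candidate with the lowest score is preferred.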

Probabilistic hypergraph grammars for efficient molecular optimization

Jun 05, 2019

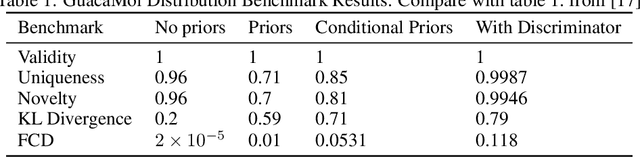

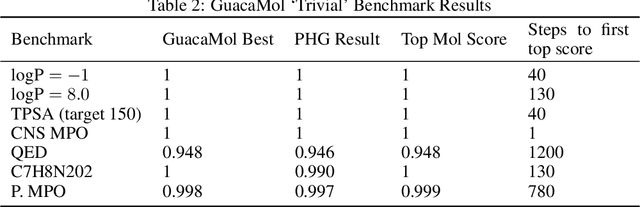

Abstract: We present an approach to make molecular optimization more efficient. We infer a hypergraph replacement grammar from the ChEMBL database, count the frequencies with which particular rules are used to expand particular nonterminals in other rules, and use these as conditional priors for the policy model. Simulating random molecules from the resulting probabilistic grammar, we show that conditional priors produce a molecular distribution closer to the training set than either equal rule probabilities or unconditional priors. We then treat molecular optimization as a reinforcement learning problem, using a novel modification of the policy gradient algorithm, batch-advantage, which weights the log probability loss by each individual reward minus the batch average reward. The reinforcement learning agent is tasked with building molecules using this grammar, with the goal of maximizing benchmark scores from the literature. To do so, the agent has two policies: one to choose the next node in the graph to expand, and one to select the next grammar rule to apply. The policies are implemented using the Transformer architecture, with the partially expanded graph as the input at each step. We show that using the empirical priors as the starting point for a policy eliminates the need for pre-training and allows us to reach optima faster. We achieve competitive performance on common benchmarks from the literature, such as penalized logP and QED, with only hundreds of training steps on a budget GPU instance.
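The batch-advantage weighting described above can be sketched with plain floats. This is a minimal illustration, assuming each molecule in a batch yields one scalar reward and a list of per-step action log-probabilities, and assuming the trajectory log-probability is the sum over its steps; the function name is hypothetical. In a real training loop the log-probabilities would be tensors in an autodiff framework so that gradients flow through them.

```python
from statistics import fmean


def batch_advantage_loss(rewards, log_probs):
    """Policy-gradient loss with the batch mean reward as baseline.

    rewards:   one scalar reward per molecule in the batch.
    log_probs: per molecule, the log-probabilities of each action taken
               while building it (assumed summed into one trajectory value).
    """
    if len(rewards) != len(log_probs):
        raise ValueError("need one list of log-probabilities per reward")
    baseline = fmean(rewards)  # batch average reward
    # Advantage of each trajectory: its own reward minus the batch average.
    advantages = [r - baseline for r in rewards]
    traj_log_probs = [sum(lp) for lp in log_probs]
    # Minimizing this raises the log-probability of above-average molecules
    # and lowers it for below-average ones.
    return -fmean(a * lp for a, lp in zip(advantages, traj_log_probs))
```

Subtracting the batch mean means that a batch in which every molecule scores the same produces zero loss, so only relative quality within the batch drives the update.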