Marijn ten Thij

VFL-RPS: Relevant Participant Selection in Vertical Federated Learning

Feb 20, 2025

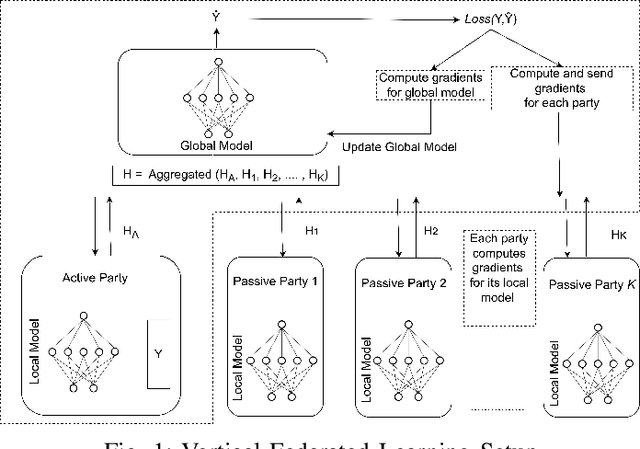

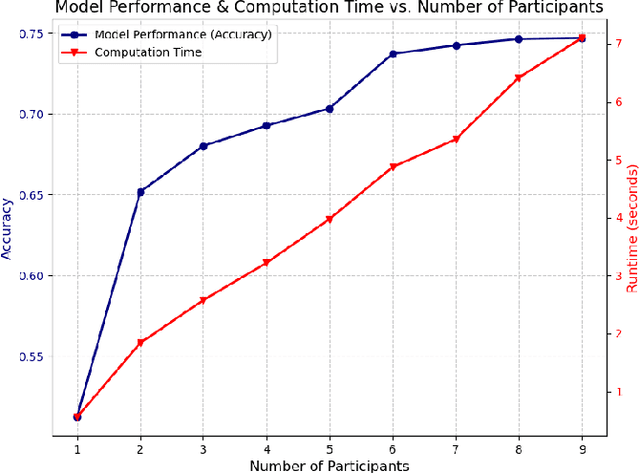

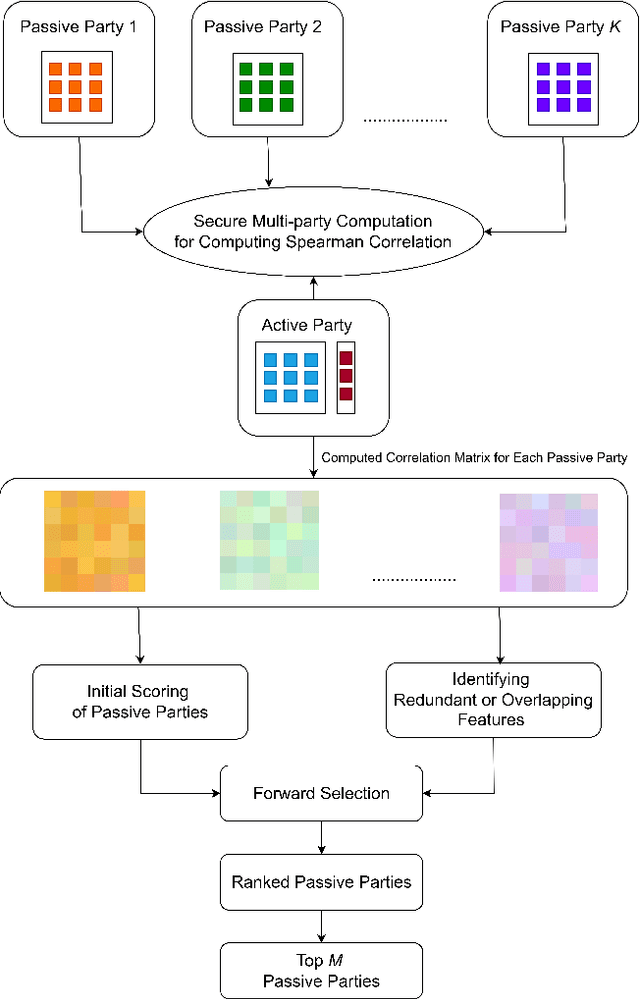

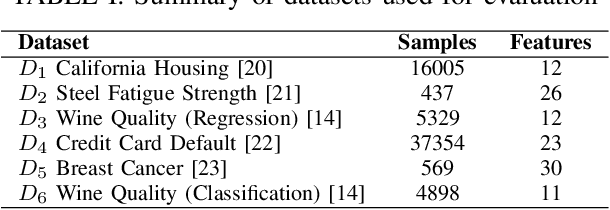

Abstract: Federated Learning (FL) allows collaboration between different parties while ensuring that data is not shared across these parties. However, not every collaboration improves the performance of the resulting model. Selecting the right participants for a collaboration is therefore an important challenge. To date, most work on participant selection has focused on Horizontal Federated Learning (HFL), which assumes that all participants share the same features, disregarding the case where participants hold different features, which is captured by Vertical Federated Learning (VFL). To close this gap in the literature, we propose VFL-RPS, a novel method for participant selection in VFL that is applied as a pre-training step. We have tested our method on several data sets covering both regression and classification tasks, showing that, by selecting only a few participants, it achieves results comparable to using all data. In addition, we show that our method outperforms existing methods for participant selection in VFL.

Incentive Allocation in Vertical Federated Learning Based on Bankruptcy Problem

Jul 07, 2023

Abstract: Vertical federated learning (VFL) is a promising approach for collaboratively training machine learning models using private data partitioned vertically across different parties. Ideally, in a VFL setting, the active party (the party possessing features of samples with labels) benefits by improving its machine learning model through collaboration with some passive parties (parties possessing additional features of the same samples, without labels) in a privacy-preserving manner. However, motivating passive parties to participate in VFL can be challenging. In this paper, we focus on the problem of the active party allocating incentives to the passive parties based on their contributions to the VFL process. We formulate this problem as a variant of the Nucleolus game theory concept, known as the Bankruptcy Problem, and solve it using Talmud's division rule. We evaluate our proposed method on synthetic and real-world datasets and show that it ensures fairness and stability in incentive allocation among the passive parties who contribute their data to the federated model. Additionally, we compare our method to the existing approach of computing Shapley values and show that our approach is more efficient, requiring fewer computations.
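The abstract names Talmud's division rule for the Bankruptcy Problem without spelling it out. As a minimal sketch of the textbook rule (not the authors' implementation), the code below splits an estate E among claimants with claims c_i: if E is at most half of the total claims, each claimant receives a constrained-equal-awards share of the half-claims; otherwise each receives half of its claim plus a constrained-equal-losses share of the remainder. Reading the estate as the active party's incentive budget and the claims as measured passive-party contributions is an assumption, as are the hypothetical function names `cea`, `cel` and `talmud`; the numbers are the classic illustrative claims of 100, 200 and 300, not data from the paper.

```python
def cea(estate, claims):
    """Constrained equal awards: split `estate` equally, capping each award at its claim.

    Assumes 0 <= estate <= sum(claims).
    """
    n = len(claims)
    order = sorted(range(n), key=lambda i: claims[i])  # smallest claims first
    awards = [0.0] * n
    remaining = estate
    for k, i in enumerate(order):
        share = remaining / (n - k)  # equal split among claimants not yet capped
        if claims[i] <= share:
            awards[i] = float(claims[i])  # claim fully satisfied, claimant drops out
            remaining -= claims[i]
        else:
            for j in order[k:]:  # every remaining (larger) claim also exceeds the share
                awards[j] = share
            break
    return awards


def cel(estate, claims):
    """Constrained equal losses, via duality with CEA: split the total loss equally."""
    losses = cea(sum(claims) - estate, claims)
    return [c - loss for c, loss in zip(claims, losses)]


def talmud(estate, claims):
    """Talmud rule: CEA on half-claims up to the midpoint, half-claims plus CEL above it."""
    half = [c / 2 for c in claims]
    if estate <= sum(half):
        return cea(estate, half)
    extra = cel(estate - sum(half), half)
    return [h + e for h, e in zip(half, extra)]


# Hypothetical budget of 200 split among three parties with claims 100, 200, 300.
print(talmud(200, [100, 200, 300]))  # -> [50.0, 75.0, 75.0]
```

Every award stays between zero and the party's claim, and the awards always sum to the budget; a larger claim never receives less than a smaller one, which is one way the rule expresses the fairness the abstract refers to.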

Vertical Federated Learning: A Structured Literature Review

Dec 01, 2022

Abstract: Federated Learning (FL) has emerged as a promising distributed learning paradigm with the added advantage of data privacy. With growing interest in collaboration among data owners, FL has gained significant attention from organizations. The idea of FL is to enable collaborating participants to train machine learning (ML) models on decentralized data without breaching privacy. In simpler words, federated learning is the approach of "bringing the model to the data, instead of bringing the data to the model". When applied to data that is partitioned vertically across participants, federated learning can build a complete ML model by combining local models, each trained only on the distinct features held at its local site. This architecture of FL is referred to as vertical federated learning (VFL), which differs from conventional FL on horizontally partitioned data. Because VFL differs from conventional FL, it comes with its own issues and challenges. In this paper, we present a structured literature review discussing state-of-the-art approaches in VFL. The review also highlights existing solutions to challenges in VFL and outlines potential research directions in this domain.
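To make vertically partitioned data concrete, here is a minimal toy sketch, assuming a split logistic-regression model rather than any specific method covered by the review. Two parties hold different feature columns for the same aligned sample IDs; each computes a partial score on its own columns, and the active party, which alone holds the labels, combines the scores and shares only the residual so each party can update its own weights. The party names, data and function names are hypothetical, everything runs in one process for illustration, and the sketch deliberately omits the privacy mechanisms (for example, encryption of exchanged values) that real VFL systems add.

```python
import math

# Hypothetical toy data, already aligned by sample ID.
# Active party A holds features f1, f2 and the label y.
party_a = {"s1": ([1.0, 0.5], 1), "s2": ([0.2, 1.5], 0), "s3": ([0.9, 0.1], 1)}
# Passive party B holds a different feature, f3, for the same samples, but no labels.
party_b = {"s1": [2.0], "s2": [0.0], "s3": [1.0]}


def local_score(weights, features):
    """Partial linear score a party computes on its own feature columns only."""
    return sum(w * x for w, x in zip(weights, features))


def predict(sample_id, w_a, w_b, bias):
    """Active party sums its own partial score and B's (conceptually computed on B's side)."""
    z = local_score(w_a, party_a[sample_id][0]) + local_score(w_b, party_b[sample_id]) + bias
    return 1.0 / (1.0 + math.exp(-z))  # logistic link


def train(epochs=200, lr=0.1):
    """SGD in which each party updates only its own weights from the shared residual.

    NOTE: the residual (p - y) is exchanged in plaintext here; real VFL protocols protect it.
    """
    w_a, w_b, bias = [0.0, 0.0], [0.0], 0.0
    for _ in range(epochs):
        for sid, (x_a, y) in party_a.items():
            residual = predict(sid, w_a, w_b, bias) - y  # computed by the active party
            w_a = [w - lr * residual * x for w, x in zip(w_a, x_a)]  # A's local update
            w_b = [w - lr * residual * x for w, x in zip(w_b, party_b[sid])]  # B's local update
            bias -= lr * residual
    return w_a, w_b, bias


w_a, w_b, bias = train()
for sid in party_a:
    print(sid, round(predict(sid, w_a, w_b, bias), 3))
```

Because the combined score is just the sum of the two partial scores, the split model computes the same prediction as a single logistic regression on the concatenated features; what changes is that no party ever needs the other's raw columns.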

Depressed individuals express more distorted thinking on social media

Feb 07, 2020

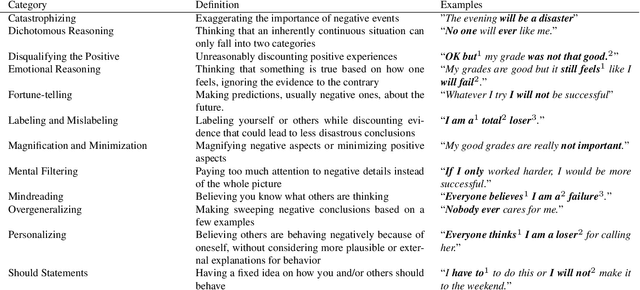

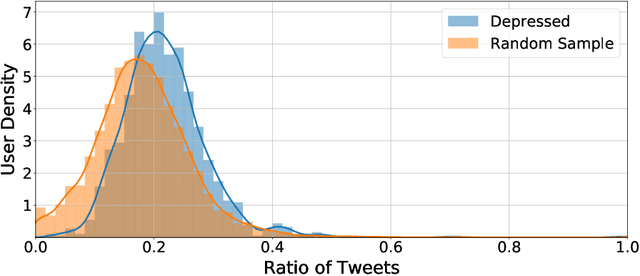

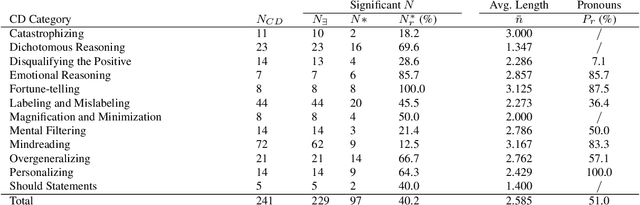

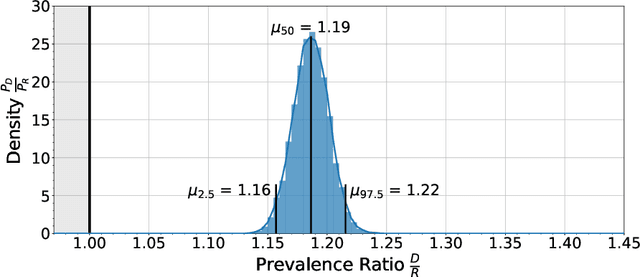

Abstract: Depression is a leading cause of disability worldwide, but is often under-diagnosed and under-treated. One of the tenets of cognitive-behavioral therapy (CBT) is that individuals who are depressed exhibit distorted modes of thinking, so-called cognitive distortions, which can negatively affect their emotions and motivation. Here, we show that individuals with a self-reported diagnosis of depression on social media express higher levels of distorted thinking than a random sample. Some types of distorted thinking, in particular Personalizing and Emotional Reasoning, were found to be more than twice as prevalent in our depressed cohort. This effect is specific to the distorted content of the expression and cannot be explained by the presence of specific topics, sentiment, or first-person pronouns. Our results point towards the detection, and possibly mitigation, of patterns of online language that are generally deemed depressogenic. They may also provide insight into recent observations that social media usage can have a negative impact on mental health.