Maria Arostegi

AiGAS-dEVL-RC: An Adaptive Growing Neural Gas Model for Recurrently Drifting Unsupervised Data Streams

Apr 10, 2025

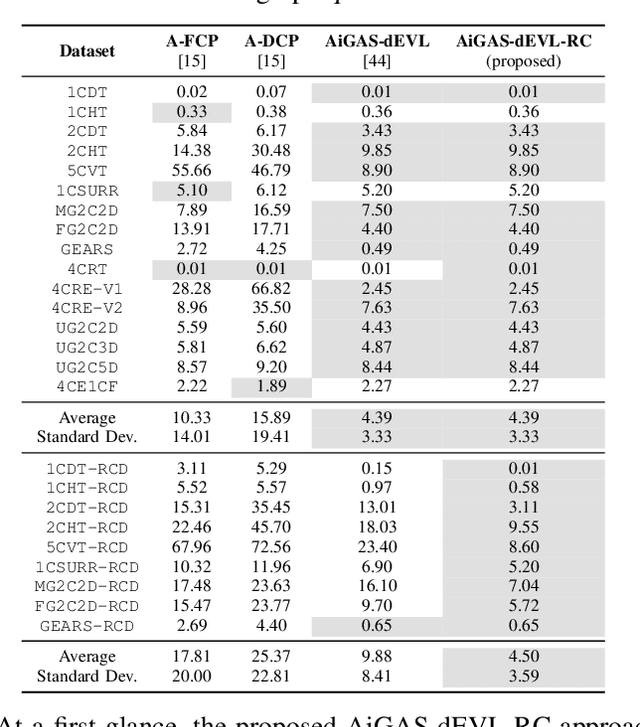

Abstract:Concept drift and extreme verification latency pose significant challenges in data stream learning, particularly when dealing with recurring concept changes in dynamic environments. This work introduces a novel method based on the Growing Neural Gas (GNG) algorithm, designed to effectively handle abrupt recurrent drifts while adapting to incrementally evolving data distributions (incremental drifts). Leveraging the self-organizing and topological adaptability of GNG, the proposed approach maintains a compact yet informative memory structure, allowing it to efficiently store and retrieve knowledge of past or recurring concepts, even under conditions of delayed or sparse stream supervision. Our experiments highlight the superiority of our approach over existing data stream learning methods designed to cope with incremental non-stationarities and verification latency, demonstrating its ability to quickly adapt to new drifts, robustly manage recurring patterns, and maintain high predictive accuracy with a minimal memory footprint. Unlike other techniques that fail to leverage recurring knowledge, our proposed approach is proven to be a robust and efficient online learning solution for unsupervised drifting data flows.

AiGAS-dEVL: An Adaptive Incremental Neural Gas Model for Drifting Data Streams under Extreme Verification Latency

Jul 07, 2024Abstract:The ever-growing speed at which data are generated nowadays, together with the substantial cost of labeling processes cause Machine Learning models to face scenarios in which data are partially labeled. The extreme case where such a supervision is indefinitely unavailable is referred to as extreme verification latency. On the other hand, in streaming setups data flows are affected by exogenous factors that yield non-stationarities in the patterns (concept drift), compelling models learned incrementally from the data streams to adapt their modeled knowledge to the concepts within the stream. In this work we address the casuistry in which these two conditions occur together, by which adaptation mechanisms to accommodate drifts within the stream are challenged by the lack of supervision, requiring further mechanisms to track the evolution of concepts in the absence of verification. To this end we propose a novel approach, AiGAS-dEVL (Adaptive Incremental neural GAS model for drifting Streams under Extreme Verification Latency), which relies on growing neural gas to characterize the distributions of all concepts detected within the stream over time. Our approach exposes that the online analysis of the behavior of these prototypical points over time facilitates the definition of the evolution of concepts in the feature space, the detection of changes in their behavior, and the design of adaptation policies to mitigate the effect of such changes in the model. We assess the performance of AiGAS-dEVL over several synthetic datasets, comparing it to that of state-of-the-art approaches proposed in the recent past to tackle this stream learning setup. Our results reveal that AiGAS-dEVL performs competitively with respect to the rest of baselines, exhibiting a superior adaptability over several datasets in the benchmark while ensuring a simple and interpretable instance-based adaptation strategy.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge