Marcello Canova

Conformalized Transfer Learning for Li-ion Battery State of Health Forecasting under Manufacturing and Usage Variability

Mar 25, 2026

Abstract: Accurate forecasting of state-of-health (SOH) is essential for ensuring safe and reliable operation of lithium-ion cells. However, existing models calibrated on laboratory tests at specific conditions often fail to generalize to new cells that differ due to small manufacturing variations or operate under different conditions. To address this challenge, an uncertainty-aware transfer learning framework is proposed, combining a Long Short-Term Memory (LSTM) model with domain adaptation via Maximum Mean Discrepancy (MMD) and uncertainty quantification through Conformal Prediction (CP). The LSTM model is trained on a virtual battery dataset designed to capture real-world variability in electrode manufacturing and operating conditions. MMD aligns latent feature distributions between simulated and target domains to mitigate domain shift, while CP provides calibrated, distribution-free prediction intervals. This framework improves both the generalization and trustworthiness of SOH forecasts across heterogeneous cells.
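
As a rough illustration of how conformal prediction can turn point forecasts into distribution-free intervals, the sketch below uses the split-conformal recipe with absolute residuals as the nonconformity score. The abstract does not say which CP variant the paper uses, so this choice, the function names `conformal_quantile` and `conformal_interval`, and the sample numbers are all assumptions made for illustration only.

```python
import math

def conformal_quantile(residuals, alpha):
    """Finite-sample-corrected (1 - alpha) quantile of |residual| on a calibration set."""
    n = len(residuals)
    scores = sorted(abs(r) for r in residuals)
    # Split-conformal rank: ceil((n + 1) * (1 - alpha)), counted from 1.
    k = math.ceil((n + 1) * (1 - alpha))
    if k > n:
        # Too few calibration points for this coverage level: interval is unbounded.
        return math.inf
    return scores[k - 1]

def conformal_interval(prediction, q):
    """Symmetric interval around a point forecast, using the calibrated half-width q."""
    return (prediction - q, prediction + q)

# Hypothetical calibration residuals (true SOH minus forecast SOH); not data from the paper.
calibration_residuals = [0.004, -0.007, 0.002, -0.011, 0.009,
                         0.005, -0.003, 0.012, -0.006, 0.008]
half_width = conformal_quantile(calibration_residuals, alpha=0.1)
low, high = conformal_interval(0.873, half_width)  # 0.873 is a made-up SOH forecast
print(f"90% interval: [{low:.3f}, {high:.3f}]")
```

The coverage guarantee of this recipe relies on the calibration and test points being exchangeable, which is one reason a domain-alignment step such as MMD is a natural companion when the target cells differ from the training data.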

Quadratic Surrogate Attractor for Particle Swarm Optimization

Mar 17, 2026

Abstract: This paper presents a particle swarm optimization algorithm that leverages surrogate modeling to replace the conventional global best solution with the minimum of an n-dimensional quadratic form, providing a better-conditioned dynamic attractor for the swarm. This refined convergence target, informed by the local landscape, enhances global convergence behavior and increases robustness against premature convergence and noise, while incurring only minimal computational overhead. The surrogate-augmented approach is evaluated against the standard algorithm through a numerical study on a set of benchmark optimization functions that exhibit diverse landscapes. To ensure statistical significance, 400 independent runs are conducted for each function and algorithm, and the results are analyzed based on their statistical characteristics and corresponding distributions. The quadratic surrogate attractor consistently outperforms the conventional algorithm across all tested functions. The improvement is particularly pronounced for quasi-convex functions, where the surrogate model can exploit the underlying convex-like structure of the landscape.
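
The idea of steering the swarm toward the minimum of a fitted quadratic rather than toward the best sampled point can be sketched in one dimension. The example below is a deliberately simplified stand-in: it interpolates a parabola through three samples instead of fitting an n-dimensional quadratic form, and the function name `quadratic_attractor` and the fallback rule are assumptions, not the paper's algorithm.

```python
def quadratic_attractor(samples):
    """Return the minimizer of the parabola through three (x, f) samples.

    Falls back to the best sampled x when the fitted parabola is not convex,
    which mimics using the conventional global best as the attractor.
    """
    (x0, f0), (x1, f1), (x2, f2) = samples
    # Newton divided differences give the parabola's coefficients.
    d1 = (f1 - f0) / (x1 - x0)
    d2 = (f2 - f1) / (x2 - x1)
    a = (d2 - d1) / (x2 - x0)          # curvature (coefficient of x^2)
    if a <= 0.0:
        # No interior minimum: keep the best sample as the attractor.
        return min(samples, key=lambda s: s[1])[0]
    b = d1 - a * (x0 + x1)             # coefficient of x
    return -b / (2.0 * a)              # vertex of the parabola

# Samples of f(x) = (x - 2)^2; none of them sits at the true minimum.
swarm_samples = [(0.0, 4.0), (1.0, 1.0), (3.0, 1.0)]
print(quadratic_attractor(swarm_samples))
```

In this toy case the best sampled point has f = 1, while the surrogate minimum lands on the true minimizer, which is the kind of refinement the abstract attributes to convex-like landscapes.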

Real-Time Optimal Design of Experiment for Parameter Identification of Li-Ion Cell Electrochemical Model

Apr 22, 2025

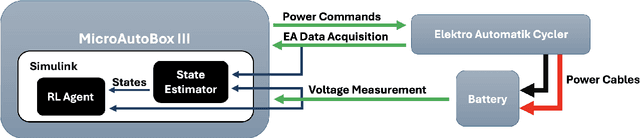

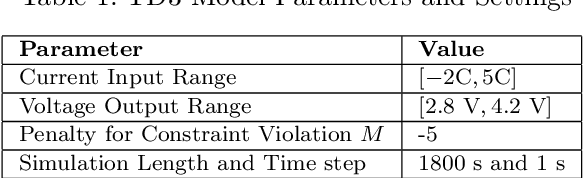

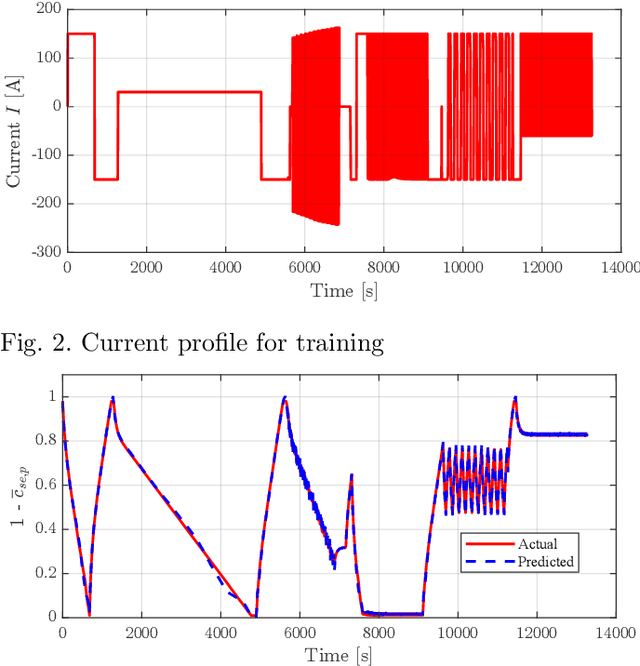

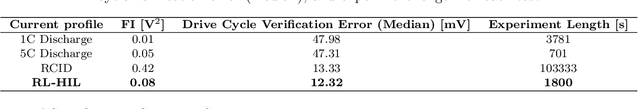

Abstract: Accurately identifying the parameters of electrochemical models of Li-ion battery (LiB) cells is a critical task for enhancing their fidelity and predictive ability. Traditional parameter identification methods often require extensive data collection experiments and lack adaptability in dynamic environments. This paper describes a Reinforcement Learning (RL) based approach that dynamically tailors the current profile applied to a LiB cell to optimize the parameter identifiability of the electrochemical model. The proposed framework is implemented in real-time using a Hardware-in-the-Loop (HIL) setup, which serves as a reliable testbed for evaluating the RL-based design strategy. The HIL validation confirms that the RL-based experimental design outperforms conventional test protocols used for parameter identification in terms of both reducing the modeling errors on a verification test and minimizing the duration of the experiment used for parameter identification.

Improving Low-Fidelity Models of Li-ion Batteries via Hybrid Sparse Identification of Nonlinear Dynamics

Nov 20, 2024

Abstract: Accurate modeling of lithium-ion (Li-ion) batteries is essential for enhancing the safety and efficiency of electric vehicles and renewable energy systems. This paper presents a data-inspired approach for improving the fidelity of reduced-order Li-ion battery models. The proposed method combines a Genetic Algorithm with Sequentially Thresholded Ridge Regression (GA-STRidge) to identify and compensate for discrepancies between a low-fidelity model (LFM) and data generated either from testing or a high-fidelity model (HFM). The hybrid model, combining physics-based and data-driven methods, is tested across different driving cycles to demonstrate the ability to significantly reduce the voltage prediction error compared to the baseline LFM, while preserving computational efficiency. The model robustness is also evaluated under various operating conditions, showing low prediction errors and high Pearson correlation coefficients for terminal voltage in unseen environments.

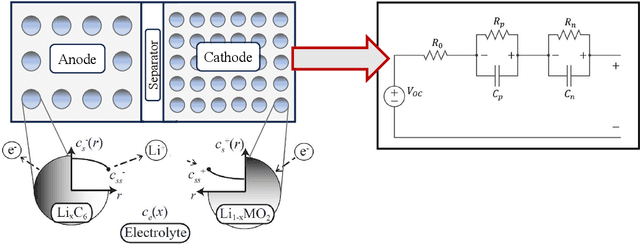

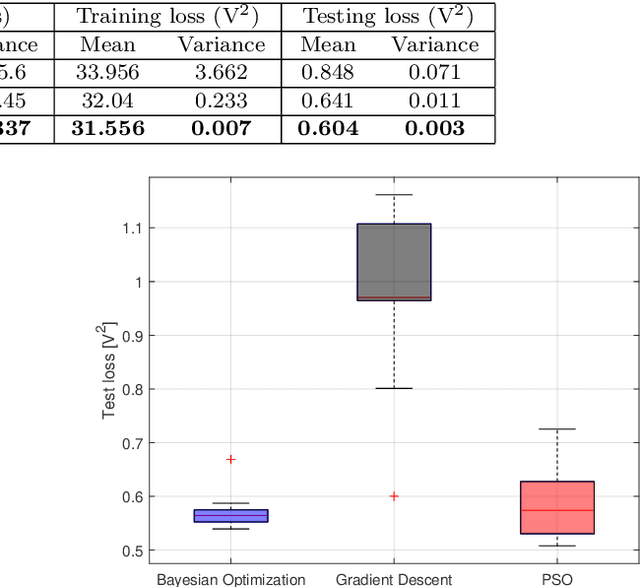

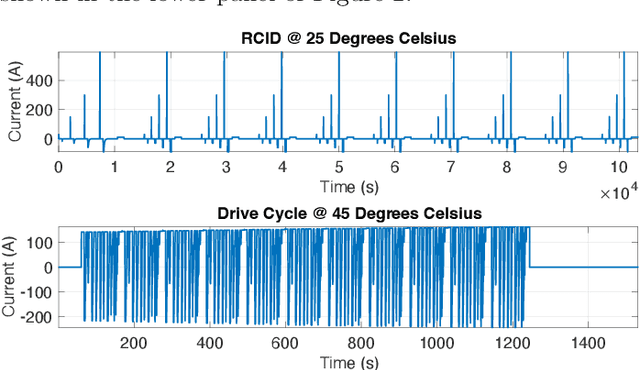

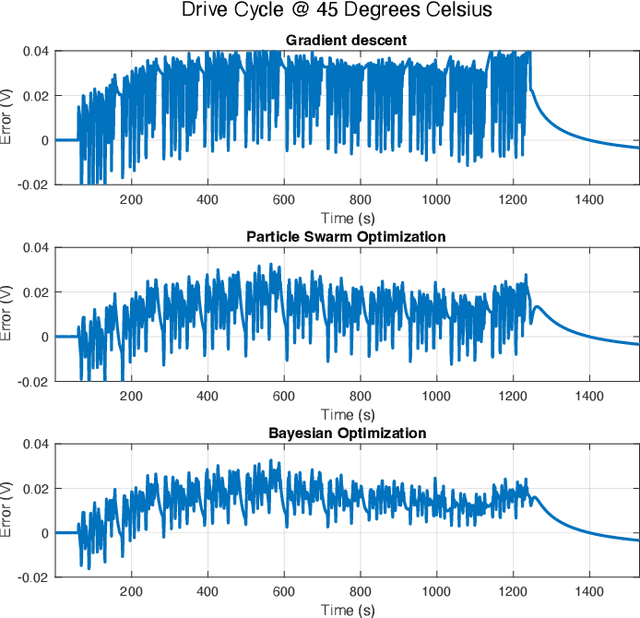

Parameter Identification for Electrochemical Models of Lithium-Ion Batteries Using Bayesian Optimization

May 17, 2024

Abstract: Efficient parameter identification of electrochemical models is crucial for accurate monitoring and control of lithium-ion cells. This process becomes challenging when applied to complex models that rely on a considerable number of interdependent parameters that affect the output response. Gradient-based and metaheuristic optimization techniques, although previously employed for this task, are limited by their lack of robustness, high computational costs, and susceptibility to local minima. In this study, Bayesian Optimization is used for tuning the dynamic parameters of an electrochemical equivalent circuit battery model (E-ECM) for a nickel-manganese-cobalt (NMC)-graphite cell. The performance of Bayesian Optimization is compared with baseline methods based on gradient-based and metaheuristic approaches. The robustness of the parameter optimization method is tested by performing verification using an experimental drive cycle. The results indicate that Bayesian Optimization outperforms Gradient Descent and PSO, reducing the average testing loss by 28.8% and 5.8%, respectively. Moreover, Bayesian Optimization reduces the variance in testing loss by 95.8% and 72.7%, respectively.

Eco-Driving Control of Connected and Automated Vehicles using Neural Network based Rollout

Oct 16, 2023

Abstract: Connected and autonomous vehicles have the potential to minimize energy consumption by optimizing the vehicle velocity and powertrain dynamics with Vehicle-to-Everything information en route. Existing deterministic and stochastic methods created to solve the eco-driving problem generally suffer from high computational and memory requirements, which makes online implementation challenging. This work proposes a hierarchical multi-horizon optimization framework implemented via a neural network. The neural network learns a full-route value function to account for the variability in route information and is then used to approximate the terminal cost in a receding horizon optimization. Simulations over real-world routes demonstrate that the proposed approach achieves comparable performance to a stochastic optimization solution obtained via reinforcement learning, while requiring no sophisticated training paradigm and negligible on-board memory.

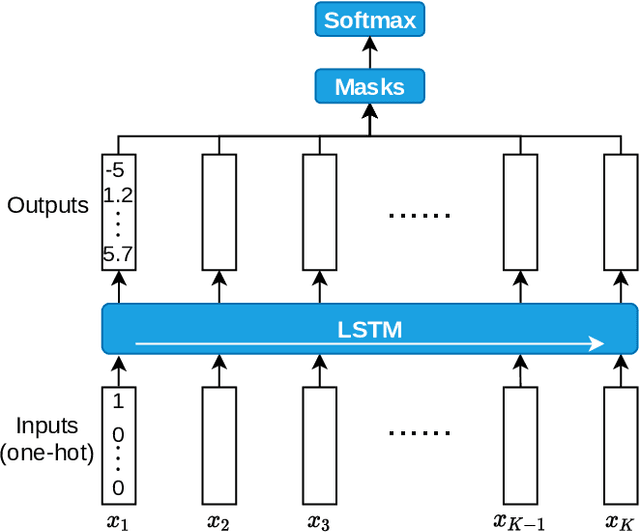

Data-driven Driver Model for Speed Advisory Systems in Partially Automated Vehicles

May 17, 2022

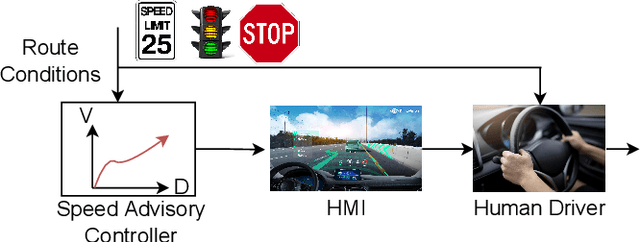

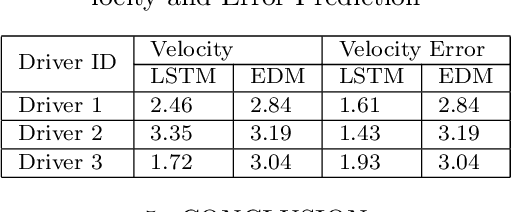

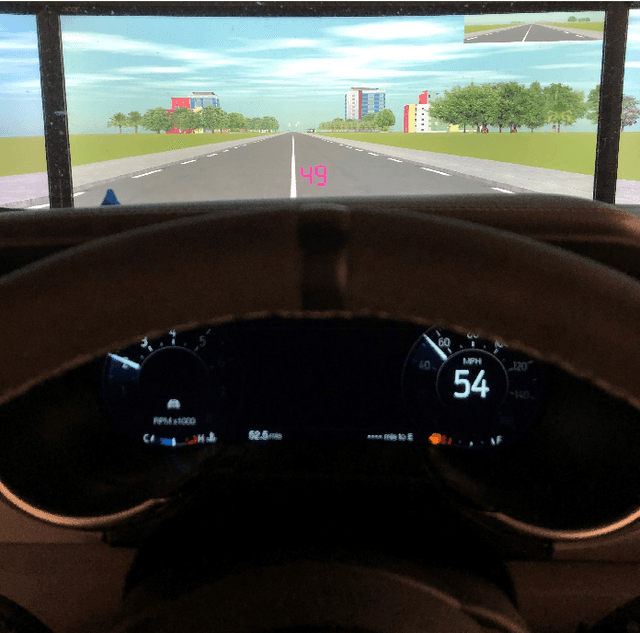

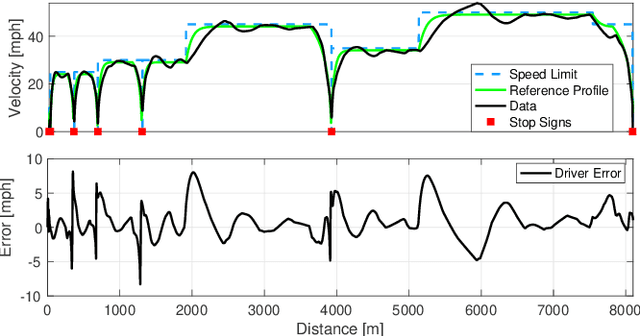

Abstract: Vehicle control algorithms exploiting connectivity and automation, such as Connected and Automated Vehicles (CAVs) or Advanced Driver Assistance Systems (ADAS), have the opportunity to improve energy savings. However, lower levels of automation involve a human-machine interaction stage, where the presence of a human driver affects the performance of the control algorithm in closed loop. This occurs, for instance, in the case of Eco-Driving control algorithms implemented as a velocity advisory system, where the driver is shown an optimal speed trajectory to follow to reduce energy consumption. Achieving the control objectives relies on the human driver perfectly following the recommended speed. If the driver is unable to follow the recommended speed, a decline in energy savings and poor vehicle performance may occur. This warrants the creation of methods to model and forecast the response of a human driver when operating in the loop with a speed advisory system. This work focuses on developing a sequence-to-sequence long short-term memory (LSTM)-based driver behavior model that captures the response of the human driver to a suggested vehicle speed trajectory in real-world conditions. A driving simulator is used for data collection and training the driver model, which is then compared against the driving data and a deterministic model. Results show close agreement between the LSTM-based model and the driving data, demonstrating that the model can be adopted as a tool to design human-centered speed advisory systems.

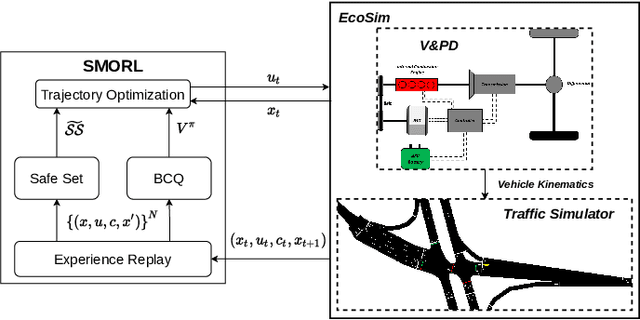

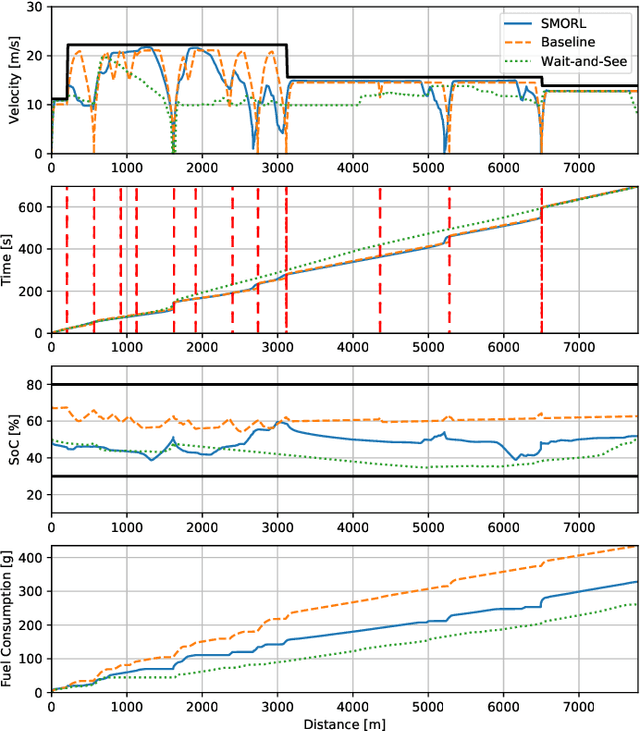

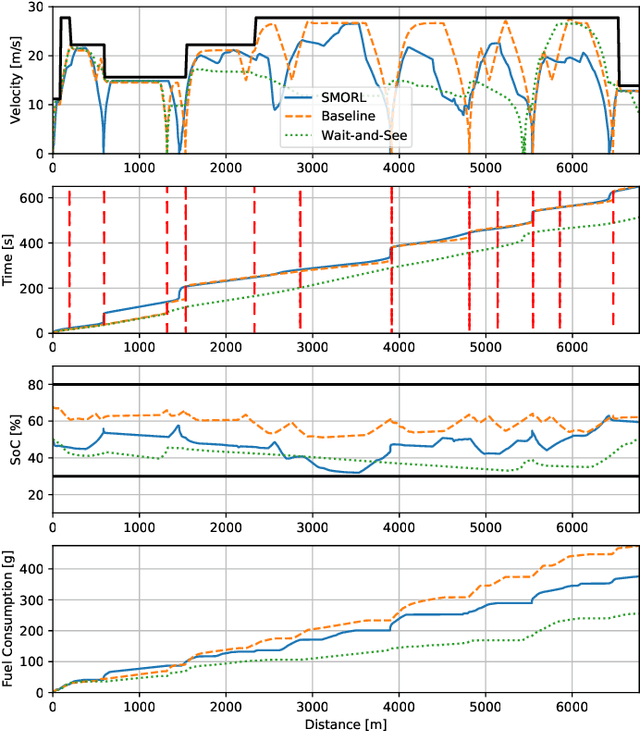

Safe Model-based Off-policy Reinforcement Learning for Eco-Driving in Connected and Automated Hybrid Electric Vehicles

May 25, 2021

Abstract: Connected and Automated Hybrid Electric Vehicles have the potential to reduce fuel consumption and travel time in real-world driving conditions. The eco-driving problem seeks to design optimal speed and power usage profiles based upon look-ahead information from connectivity and advanced mapping features. Recently, Deep Reinforcement Learning (DRL) has been applied to the eco-driving problem. While the previous studies synthesize simulators and model-free DRL to reduce online computation, this work proposes a Safe Off-policy Model-Based Reinforcement Learning algorithm for the eco-driving problem. The advantages over the existing literature are three-fold. First, the combination of off-policy learning and the use of a physics-based model improves the sample efficiency. Second, the training does not require any extrinsic rewarding mechanism for constraint satisfaction. Third, the feasibility of the trajectory is guaranteed by using a safe set approximated by deep generative models. The performance of the proposed method is benchmarked against a baseline controller representing human drivers, a previously designed model-free DRL strategy, and the wait-and-see optimal solution. In simulation, the proposed algorithm leads to a policy with a higher average speed and a better fuel economy compared to the model-free agent. Compared to the baseline controller, the learned strategy reduces the fuel consumption by more than 21% while keeping the average speed comparable.
