Marc-Antoine Moinnereau

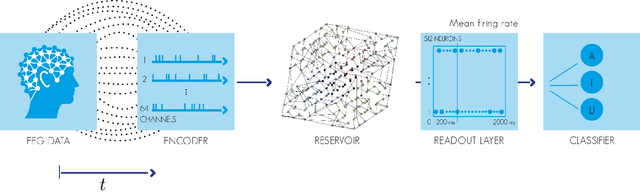

Classification of auditory stimuli from EEG signals with a regulated recurrent neural network reservoir

Apr 27, 2018

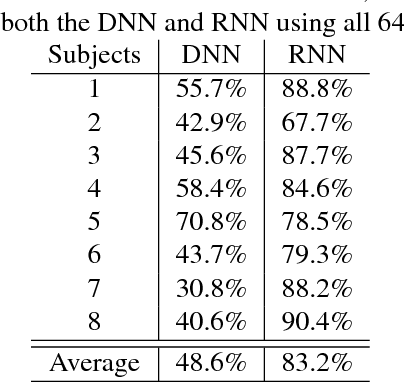

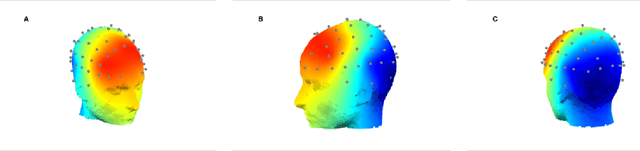

Abstract: The electroencephalogram (EEG) has been widely proposed as the main input signal in brain-machine interfaces because it is non-invasive. Here we are interested in interfaces that extract information from the auditory system, and more specifically in the task of classifying heard speech from EEGs. To do so, we propose to limit the preprocessing of the EEGs and to use machine learning approaches to automatically extract their meaningful characteristics. We use a regulated recurrent neural network (RNN) reservoir, which has been shown to outperform classic machine learning approaches when applied to several different bio-signals, and we compare it with a deep neural network approach. We also investigate the classification performance as a function of the number of EEG electrodes. Eight subjects were presented with three auditory stimuli (the English vowels a, i and u) in random order. We obtained a classification rate of 83.2% with the RNN when considering all 64 electrodes, and a rate of 81.7% with only 10 electrodes.
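To make the reservoir idea concrete, the following is a minimal sketch of a generic echo state network with a ridge-regression readout, applied to synthetic multichannel data. It is not the regulated reservoir described in the abstract, whose regulation mechanism is not specified here. The function names, the hyperparameters (reservoir size, spectral radius, leak rate, ridge penalty) and the synthetic signals are all assumptions chosen for illustration.

```python
import numpy as np


def make_reservoir(n_inputs, n_units, spectral_radius=0.9, seed=0):
    """Build fixed random input and recurrent weights (hypothetical helper)."""
    rng = np.random.default_rng(seed)
    w_in = rng.uniform(-0.5, 0.5, size=(n_units, n_inputs))
    w = rng.normal(size=(n_units, n_units))
    # Rescale so the largest eigenvalue magnitude equals spectral_radius.
    w *= spectral_radius / np.max(np.abs(np.linalg.eigvals(w)))
    return w_in, w


def reservoir_features(trial, w_in, w, leak=0.3):
    """Drive the reservoir with one trial of shape (n_samples, n_channels)
    and return the time-averaged reservoir state as a feature vector."""
    state = np.zeros(w.shape[0])
    total = np.zeros_like(state)
    for x in trial:
        # Leaky-integrator update with a tanh nonlinearity.
        state = (1 - leak) * state + leak * np.tanh(w_in @ x + w @ state)
        total += state
    return total / len(trial)


def fit_readout(features, labels, n_classes, ridge=1e-2):
    """Train only the linear readout, by ridge regression on one-hot targets."""
    targets = np.eye(n_classes)[labels]
    f = np.hstack([features, np.ones((len(features), 1))])  # append bias column
    a = f.T @ f + ridge * np.eye(f.shape[1])
    return np.linalg.solve(a, f.T @ targets)


def predict(features, w_out):
    """Return the class index with the largest readout activation."""
    f = np.hstack([features, np.ones((len(features), 1))])
    return np.argmax(f @ w_out, axis=1)


# Synthetic stand-in for EEG trials: 3 classes, 10 channels, 64 samples each.
# Each class gets a different channel offset pattern plus noise (assumption).
rng = np.random.default_rng(42)
n_trials, n_samples, n_channels, n_classes = 30, 64, 10, 3
labels = np.arange(n_trials) % n_classes
patterns = rng.normal(size=(n_classes, n_channels))
trials = patterns[labels][:, None, :] + rng.normal(
    scale=0.5, size=(n_trials, n_samples, n_channels)
)

w_in, w = make_reservoir(n_inputs=n_channels, n_units=50)
feats = np.array([reservoir_features(t, w_in, w) for t in trials])
w_out = fit_readout(feats, labels, n_classes)
print("training accuracy:", np.mean(predict(feats, w_out) == labels))
```

Only the readout weights are trained; the recurrent weights stay fixed after random initialisation, which is what distinguishes a reservoir from a fully trained RNN. Restricting the analysis to a subset of electrodes would amount to selecting columns of each trial before calling `reservoir_features`.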
