Mansoor Ali

Performance Evaluation of Deep Learning and Transformer Models Using Multimodal Data for Breast Cancer Classification

Oct 14, 2024

Abstract: Rising breast cancer (BC) incidence and mortality are major global concerns for women. Deep learning (DL) has demonstrated superior diagnostic performance in BC classification compared with human expert readers. However, the predominant reliance on unimodal features (digital mammography) may limit the performance of current diagnostic models. To address this, we collected a novel multimodal dataset comprising both imaging and textual data. This study proposes a multimodal DL architecture for BC classification that uses images (mammograms; four views) and textual data (radiological reports) from our new in-house dataset. Various augmentation techniques were applied to increase the size of the training data for both modalities. We explored the performance of eleven SOTA DL architectures (VGG16, VGG19, ResNet34, ResNet50, MobileNet-v3, EffNet-b0, EffNet-b1, EffNet-b2, EffNet-b3, EffNet-b7, and Vision Transformer (ViT)) as imaging feature extractors. For textual feature extraction, we used either artificial neural networks (ANNs) or long short-term memory (LSTM) networks. The imaging and textual features were then combined using late fusion and fed into an ANN classifier for BC classification. We evaluated different arrangements of feature extractors and classifiers. The VGG19+ANN combination achieved the highest accuracy, 0.951, and also the highest precision, 0.95, outperforming the other feature-extractor and classifier arrangements. The best sensitivity, 0.903, was achieved by VGG16+LSTM, and the highest F1 score, 0.931, by VGG19+LSTM. VGG16+LSTM also achieved the best area under the curve (AUC), 0.937, with the next-best combination closely following at 0.929.
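The late-fusion step described in this abstract can be illustrated with a minimal sketch in plain Python. It assumes the image features (e.g. from VGG19) and the textual features (from the ANN or LSTM branch) have already been extracted as flat vectors, and it stands in for the ANN classifier with one hidden ReLU layer and a sigmoid output. All function names, layer sizes and weights are hypothetical illustrations, not the authors' implementation.

```python
import math
from typing import List, Sequence

Vector = List[float]


def concat_features(image_feats: Sequence[float], text_feats: Sequence[float]) -> Vector:
    """Late fusion: join the two modality-specific feature vectors end to end."""
    return list(image_feats) + list(text_feats)


def dense(x: Sequence[float], weights: Sequence[Sequence[float]],
          bias: Sequence[float]) -> Vector:
    """Fully connected layer, y = W x + b (one output per weight row)."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(x: Sequence[float]) -> Vector:
    return [max(0.0, v) for v in x]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def fused_predict(image_feats, text_feats, w_hidden, b_hidden, w_out, b_out) -> float:
    """Hypothetical ANN head: fused features -> hidden ReLU layer -> probability."""
    fused = concat_features(image_feats, text_feats)
    hidden = relu(dense(fused, w_hidden, b_hidden))
    logit = dense(hidden, [w_out], [b_out])[0]
    return sigmoid(logit)


# Toy usage: 3 "image" features and 2 "text" features (made-up values).
p = fused_predict(
    image_feats=[1.0, 0.0, 2.0],
    text_feats=[0.5, 1.0],
    w_hidden=[[1, 0, 0, 0, 0], [0, 0, 0, 0, -1]],
    b_hidden=[0, 0],
    w_out=[2, 3],
    b_out=-2,
)
print(p)  # 0.5
```

Concatenating the vectors before the classifier lets the ANN weigh image and text evidence jointly, while each feature extractor can still be swapped independently, which is what makes comparing eleven imaging backbones practical.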

A comprehensive survey on recent deep learning-based methods applied to surgical data

Sep 03, 2022

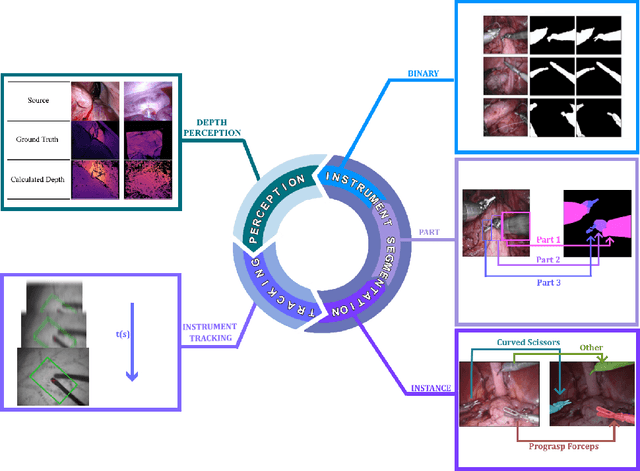

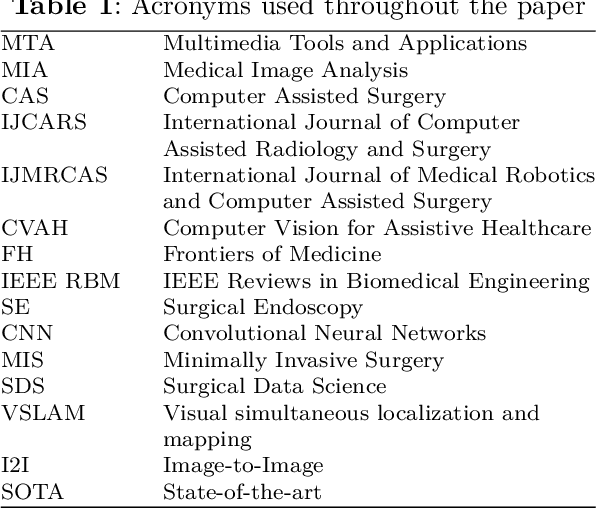

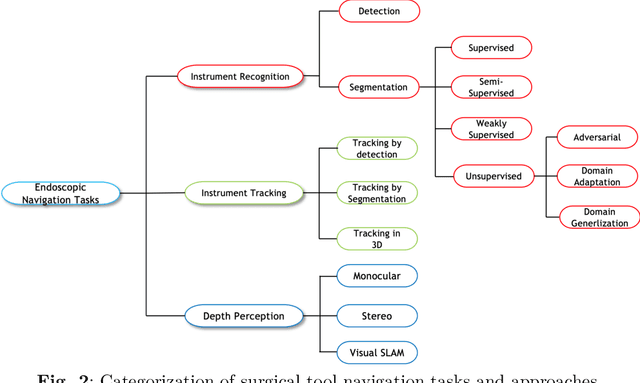

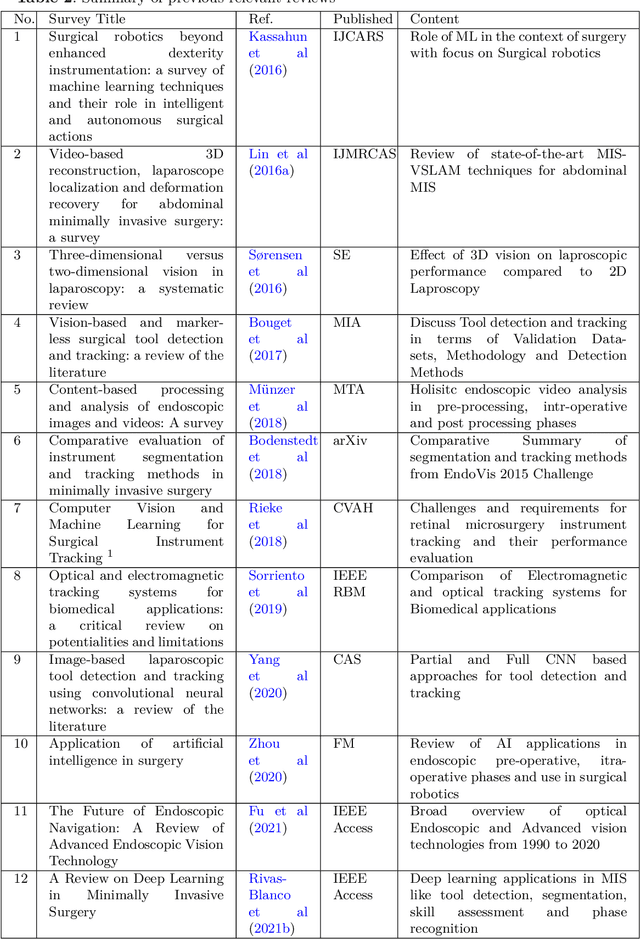

Abstract: Minimally invasive surgery is highly operator-dependent, and lengthy procedures cause fatigue and pose risks to patients. To mitigate these risks, real-time systems can help surgeons navigate and track tools by providing a clear understanding of the scene and avoiding miscalculations during the operation. While several efforts have been made in this direction, the lack of diverse datasets, together with highly dynamic scenes and their variability across patients, poses a major hurdle to building robust systems. In this work, we present a systematic review of recent machine learning-based approaches, including surgical tool localisation, segmentation, tracking and 3D scene perception. Furthermore, we outline the current gaps and future directions of these methods and provide the rationale behind their clinical integration.

A semi-supervised Teacher-Student framework for surgical tool detection and localization

Aug 21, 2022

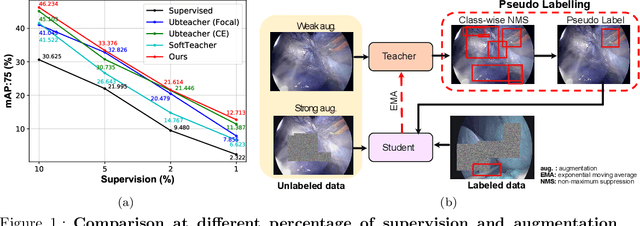

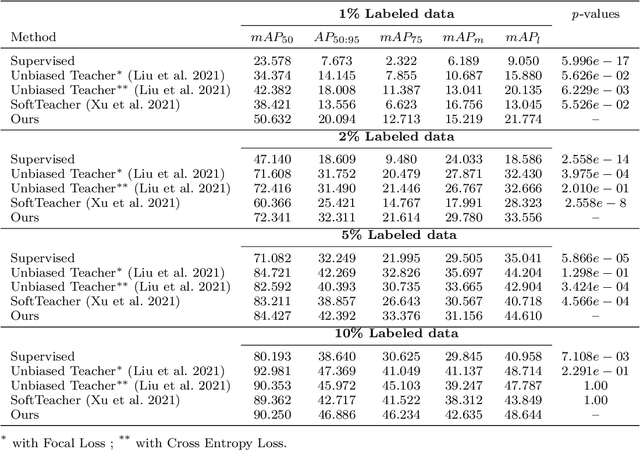

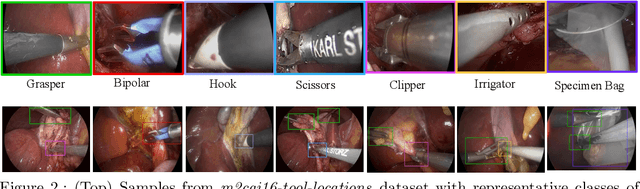

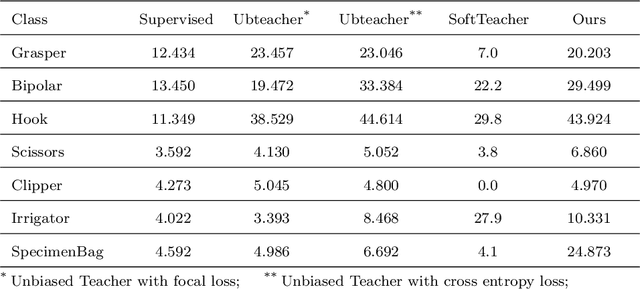

Abstract: Surgical tool detection in minimally invasive surgery is an essential part of computer-assisted interventions. Current approaches are mostly supervised methods, which require large, fully labeled datasets for training and suffer from pseudo-label bias due to class imbalance. However, large image datasets with bounding-box annotations are scarce. Semi-supervised learning (SSL) has recently emerged as a means of training large models using only a modest amount of annotated data. Apart from reducing the annotation cost, SSL has also shown promise in producing models that are more robust and generalizable. Therefore, in this paper we introduce an SSL framework for surgical tool detection that aims to mitigate the scarcity of training data and the data imbalance through a knowledge distillation approach. In the proposed work, a model trained on labeled data initializes the Teacher-Student joint learning, in which the Student is trained on Teacher-generated pseudo labels from unlabeled data. We propose a margin-based multi-class distance classification loss in the region-of-interest head of the detector to effectively separate foreground classes from the background region. Our results on the m2cai16-tool-locations dataset indicate the superiority of our approach across different supervised data settings (1%, 2%, 5% and 10% of annotated data), where our model achieves overall improvements of 8%, 12% and 27% in mAP (on 1% labeled data) over the state-of-the-art SSL methods and a fully supervised baseline, respectively. The code is available at https://github.com/Mansoor-at/Semi-supervised-surgical-tool-det
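The Teacher-Student loop in this abstract can be sketched in plain Python. The sketch assumes two common ingredients that the abstract does not specify: pseudo labels are kept only when the Teacher's confidence exceeds a threshold, and the Teacher is refreshed as an exponential moving average (EMA) of the Student's weights, as in Mean Teacher-style methods. The `Detection` type, the 0.7 threshold, the EMA rate and the toy weights are hypothetical, and the proposed margin-based loss is not modelled here.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


@dataclass
class Detection:
    box: Box
    label: str
    score: float  # Teacher's confidence for this box


def make_pseudo_labels(teacher_dets: List[Detection],
                       threshold: float = 0.7) -> List[Detection]:
    """Keep only confident Teacher detections as Student training targets."""
    return [d for d in teacher_dets if d.score >= threshold]


def ema_update(teacher: Dict[str, float], student: Dict[str, float],
               alpha: float = 0.99) -> Dict[str, float]:
    """Move each Teacher weight towards the Student: t <- alpha*t + (1-alpha)*s."""
    return {k: alpha * teacher[k] + (1.0 - alpha) * student[k] for k in teacher}


# Toy Teacher output on one unlabeled frame (made-up boxes and scores).
dets = [
    Detection((10, 10, 50, 50), "Grasper", 0.92),
    Detection((60, 20, 90, 70), "Hook", 0.40),  # too uncertain, dropped
]
pseudo = make_pseudo_labels(dets)
print([d.label for d in pseudo])  # ['Grasper']

# After a Student step, the Teacher slowly absorbs the Student's weights.
print(ema_update({"w": 1.0}, {"w": 3.0}, alpha=0.5))  # {'w': 2.0}
```

Filtering by confidence limits how much Teacher noise the Student learns from, while the slow EMA keeps the Teacher stable enough to produce consistent pseudo labels.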

Federated Learning for Privacy Preservation in Smart Healthcare Systems: A Comprehensive Survey

Mar 18, 2022

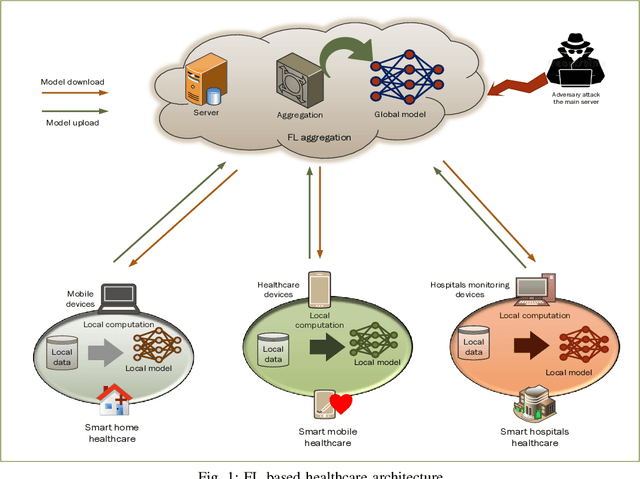

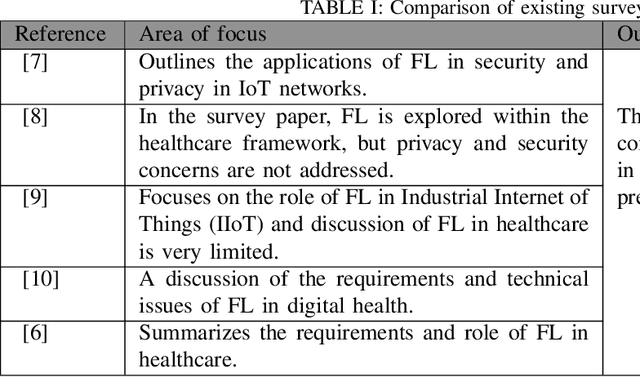

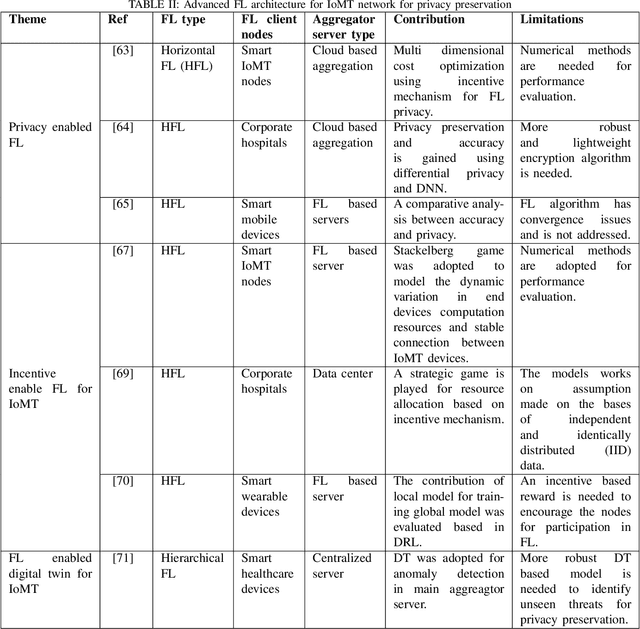

Abstract: Recent advances in electronic devices and communication infrastructure have transformed the traditional healthcare system into a smart healthcare system built on Internet of Medical Things (IoMT) devices. However, because artificial intelligence (AI) models are trained centrally, the use of mobile and wearable IoMT devices raises privacy concerns about the information communicated between hospitals and end users. The information conveyed by IoMT devices is highly confidential and can be exposed to adversaries. In this regard, federated learning (FL), a distributed AI paradigm, has opened up new opportunities for privacy preservation in IoMT because it does not require access to the participants' confidential data. FL also protects end users' privacy, as only gradients are shared during training. Motivated by these properties, this paper first presents privacy-related issues in IoMT. We then describe the role of FL in preserving privacy in IoMT networks and introduce advanced FL architectures that incorporate deep reinforcement learning (DRL), digital twins, and generative adversarial networks (GANs) for detecting privacy threats. Subsequently, we present practical opportunities for FL in smart healthcare systems. Finally, we conclude the survey with open research challenges for FL in future smart healthcare systems.
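The privacy argument in this abstract rests on clients sharing model updates rather than raw data. A minimal sketch of federated averaging (FedAvg, McMahan et al.), a widely used FL aggregation rule and not necessarily the scheme the survey emphasises, shows the idea in plain Python: each client takes a local gradient step on its own data, and the server averages the resulting weights in proportion to each client's sample count. The learning rate, client sizes and weight vectors are hypothetical toy values.

```python
from typing import List, Sequence

Weights = List[float]


def local_update(weights: Sequence[float], grads: Sequence[float],
                 lr: float = 0.1) -> Weights:
    """One client-side gradient step; the client's raw data never leaves it."""
    return [w - lr * g for w, g in zip(weights, grads)]


def fed_avg(client_weights: Sequence[Sequence[float]],
            num_samples: Sequence[int]) -> Weights:
    """Server step: average client models, weighted by local dataset size."""
    total = sum(num_samples)
    dim = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, num_samples)) / total
        for i in range(dim)
    ]


# Toy round: two hospitals start from the same global model.
global_w = [1.0, 1.0]
hospital_a = local_update(global_w, grads=[1.0, 0.0], lr=0.5)  # 100 records
hospital_b = local_update(global_w, grads=[0.0, 2.0], lr=0.5)  # 300 records
new_global = fed_avg([hospital_a, hospital_b], num_samples=[100, 300])
print(new_global)  # [0.875, 0.25]
```

With a single local step and a shared learning rate, averaging the clients' weights gives the same result as averaging their gradients (FedSGD), which matches the gradient-sharing description above; FedAvg simply allows several local steps per round to reduce communication.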