Manish Acharya

Optimizing Code Runtime Performance through Context-Aware Retrieval-Augmented Generation

Jan 29, 2025

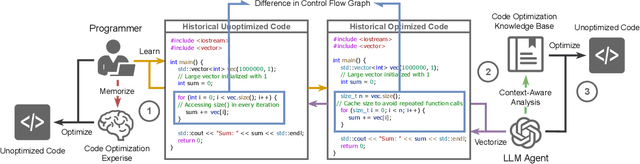

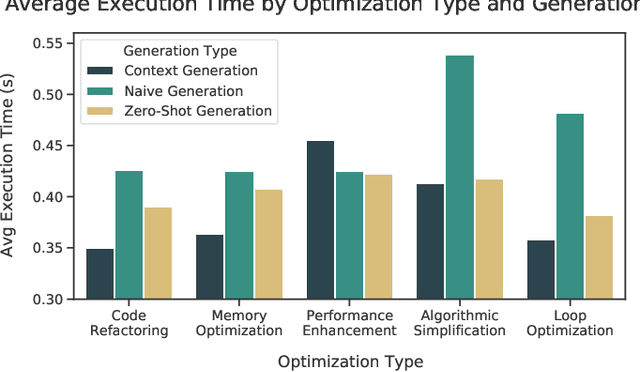

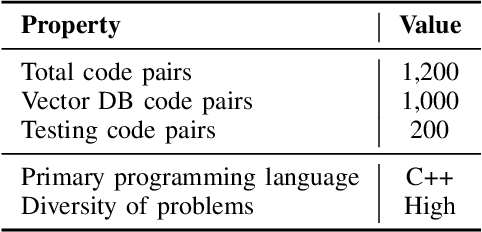

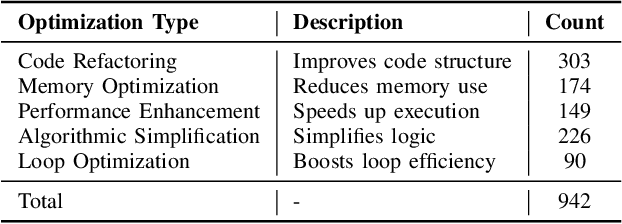

Abstract: Optimizing software performance through automated code refinement offers a promising avenue for enhancing execution speed and efficiency. Despite recent advancements in LLMs, a significant gap remains in their ability to perform in-depth program analysis. This study introduces AUTOPATCH, an in-context learning approach designed to bridge this gap by enabling LLMs to automatically generate optimized code. Inspired by how programmers learn and apply knowledge to optimize software, AUTOPATCH incorporates three key components: (1) an analogy-driven framework to align LLM optimization with human cognitive processes, (2) a unified approach that integrates historical code examples and control flow graph (CFG) analysis for context-aware learning, and (3) an automated pipeline for generating optimized code through in-context prompting. Experimental results demonstrate that AUTOPATCH achieves a 7.3% improvement in execution efficiency over GPT-4o across commonly generated executable code, highlighting its potential to advance automated program runtime optimization.

An Application of Large Language Models to Coding Negotiation Transcripts

Jul 18, 2024

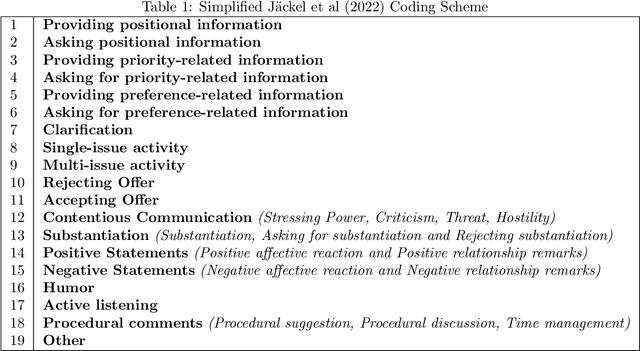

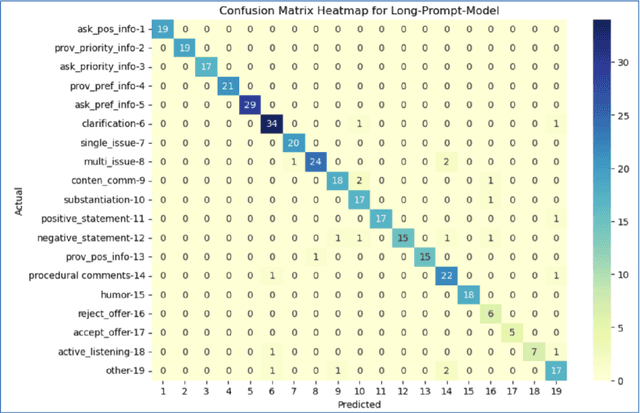

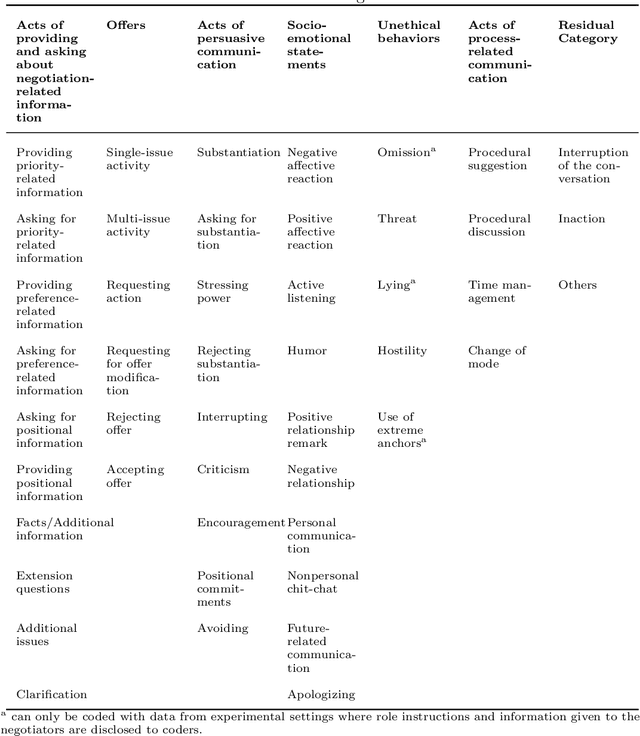

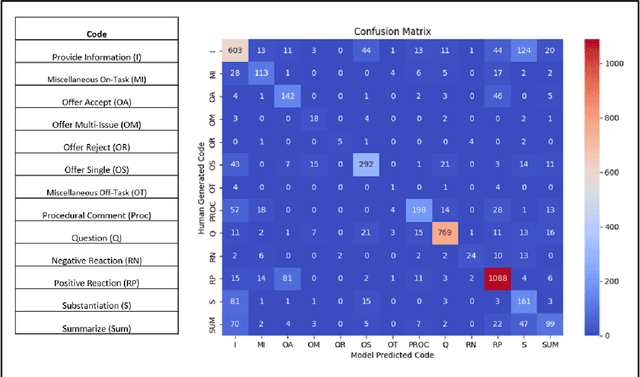

Abstract: In recent years, Large Language Models (LLMs) have demonstrated impressive capabilities in natural language processing (NLP). This paper explores the application of LLMs to negotiation transcript analysis by the Vanderbilt AI Negotiation Lab. Starting in September 2022, we applied multiple LLM strategies, from zero-shot learning to fine-tuning models to in-context learning. The final strategy we developed is explained, along with how to access and use the model. This study provides a sense of both the opportunities and roadblocks for implementing LLMs in real-life applications and offers a model for how LLMs can be applied to coding in other fields.
