Maciej Pietrowski

Impact of GPU uncertainty on the training of predictive deep neural networks

Sep 25, 2021

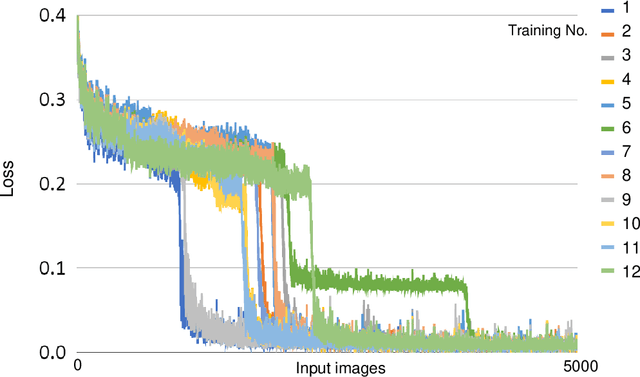

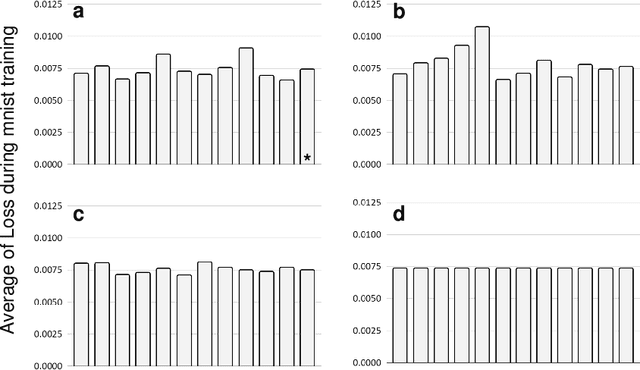

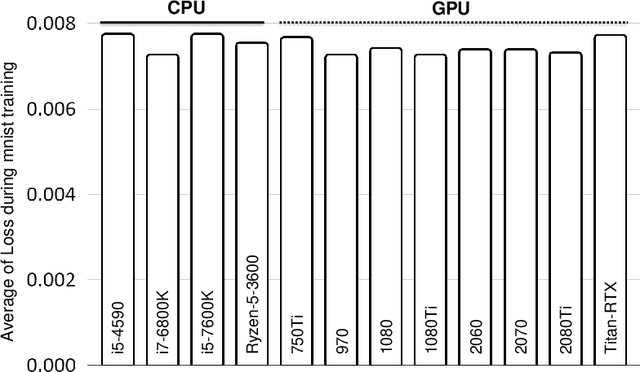

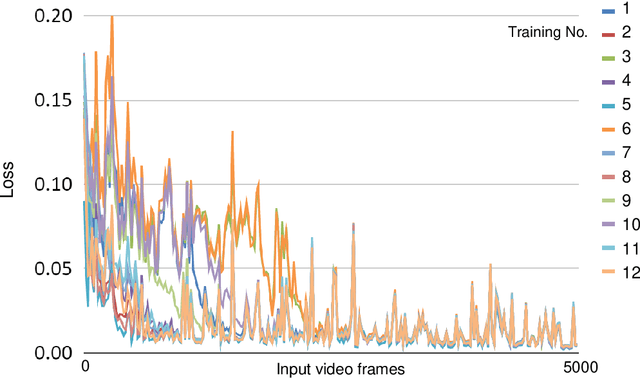

Abstract:[retracted] We found out that the difference was dependent on the Chainer library, and does not replicate with another library (pytorch) which indicates that the results are probably due to a bug in Chainer, rather than being hardware-dependent. -- old abstract Deep neural networks often present uncertainties such as hardware- and software-derived noise and randomness. We studied the effects of such uncertainty on learning outcomes, with a particular focus on the function of graphics processing units (GPUs), and found that GPU-induced uncertainty increased learning accuracy of a certain deep neural network. When training a predictive deep neural network using only the CPU without the GPU, the learning error is higher than when training the same number of epochs using the GPU, suggesting that the GPU plays a different role in the learning process than just increasing the computational speed. Because this effect cannot be observed in learning by a simple autoencoder, it could be a phenomenon specific to certain types of neural networks. GPU-specific computational processing is more indeterminate than that by CPUs, and hardware-derived uncertainties, which are often considered obstacles that need to be eliminated, might, in some cases, be successfully incorporated into the training of deep neural networks. Moreover, such uncertainties might be interesting phenomena to consider in brain-related computational processing, which comprises a large mass of uncertain signals.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge