Lulu Hu

MASQuant: Modality-Aware Smoothing Quantization for Multimodal Large Language Models

Mar 05, 2026

Abstract: Post-training quantization (PTQ) with computational invariance has demonstrated remarkable advances for Large Language Models (LLMs); however, its application to Multimodal Large Language Models (MLLMs) presents substantial challenges. In this paper, we analyze SmoothQuant as a case study and identify two critical issues: Smoothing Misalignment and Cross-Modal Computational Invariance. To address these issues, we propose Modality-Aware Smoothing Quantization (MASQuant), a novel framework that introduces (1) Modality-Aware Smoothing (MAS), which learns separate, modality-specific smoothing factors to prevent Smoothing Misalignment, and (2) Cross-Modal Compensation (CMC), which addresses Cross-Modal Computational Invariance by using SVD whitening to transform multi-modal activation differences into low-rank forms, enabling unified quantization across modalities. MASQuant demonstrates stable quantization performance across both dual-modal and tri-modal MLLMs. Experimental results show that MASQuant is competitive with state-of-the-art PTQ algorithms. Source code: https://github.com/alibaba/EfficientAI.
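As a rough illustration of the computational invariance this abstract refers to, the sketch below applies the standard SmoothQuant rescaling, s_j = max|X_j|^α / max|W_j|^(1-α), to a toy linear layer. It is not MASQuant itself: the learned modality-specific factors (MAS) and the SVD-based compensation (CMC) are not reproduced, and the toy activations, the weight matrix, and all function names are assumptions made for this example.

```python
import math

def matmul(a, b):
    """Plain list-of-lists matrix product."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def smoothing_factors(acts, weights, alpha=0.5):
    """SmoothQuant-style per-input-channel factors.

    acts:    tokens x channels
    weights: channels x outputs (row j belongs to input channel j)
    """
    factors = []
    for j in range(len(weights)):
        a_max = max(abs(row[j]) for row in acts)
        w_max = max(abs(w) for w in weights[j])
        factors.append((a_max ** alpha) / (w_max ** (1 - alpha)))
    return factors

def smooth(acts, weights, s):
    """Divide activations by s and multiply weight rows by s: X W = (X / s)(s W)."""
    x_s = [[v / s[j] for j, v in enumerate(row)] for row in acts]
    w_s = [[w * s[j] for w in weights[j]] for j in range(len(weights))]
    return x_s, w_s

# Hypothetical toy data: vision tokens carry a large outlier in channel 0.
W = [[0.5, -1.0], [2.0, 0.25], [-0.5, 1.5]]
x_text = [[1.0, -2.0, 0.5], [0.5, 1.0, -1.0]]
x_vision = [[40.0, 1.0, 0.5], [-30.0, 2.0, 1.0]]

s_text = smoothing_factors(x_text, W)
s_vision = smoothing_factors(x_vision, W)

# Invariance holds when the factor used on the activations matches the one
# folded into the weights.
x_s, w_s = smooth(x_vision, W, s_vision)
y_ref = matmul(x_vision, W)
y_smooth = matmul(x_s, w_s)

print("text factors:  ", s_text)
print("vision factors:", s_vision)
```

Because the two modalities yield different factors, each would fold a different scaling into the same weight matrix. A single shared factor fits neither modality well, while separate factors imply separate smoothed weights, which appears to be the tension the abstract names.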

D-CORE: Incentivizing Task Decomposition in Large Reasoning Models for Complex Tool Use

Feb 02, 2026

Abstract: Effective tool use and reasoning are essential capabilities for large reasoning models (LRMs) to address complex real-world problems. Through empirical analysis, we identify that current LRMs lack the capability of sub-task decomposition in complex tool use scenarios, leading to Lazy Reasoning. To address this, we propose a two-stage training framework, D-CORE (Decomposing tasks and Composing Reasoning processes), that first incentivizes the LRMs' task decomposition reasoning capability via self-distillation, followed by diversity-aware reinforcement learning (RL) to restore the LRMs' reflective reasoning capability. D-CORE achieves robust tool-use improvements across diverse benchmarks and model scales. Experiments on BFCLv3 demonstrate the superiority of our method: D-CORE-8B reaches 77.7% accuracy, surpassing the best-performing 8B model by 5.7%. Meanwhile, D-CORE-14B establishes a new state-of-the-art at 79.3%, outperforming 70B models despite being 5× smaller. The source code is available at https://github.com/alibaba/EfficientAI.

La RoSA: Enhancing LLM Efficiency via Layerwise Rotated Sparse Activation

Jul 02, 2025

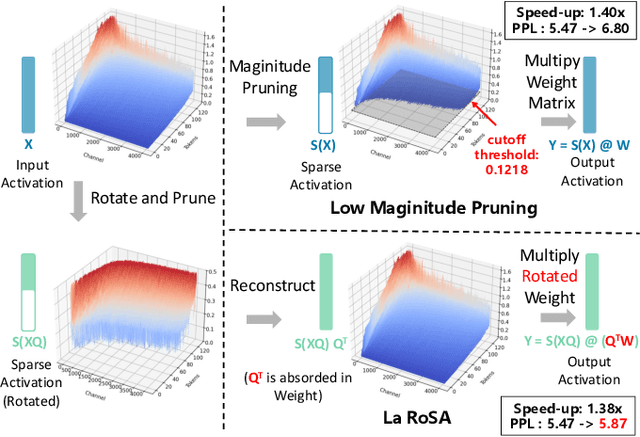

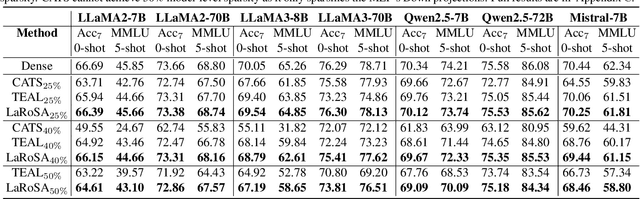

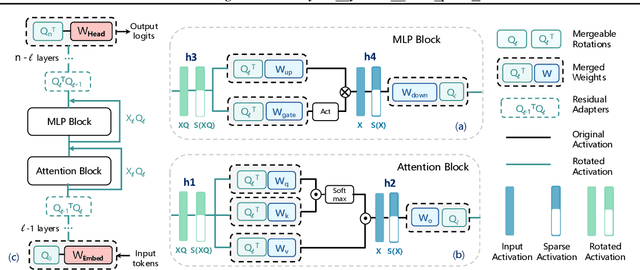

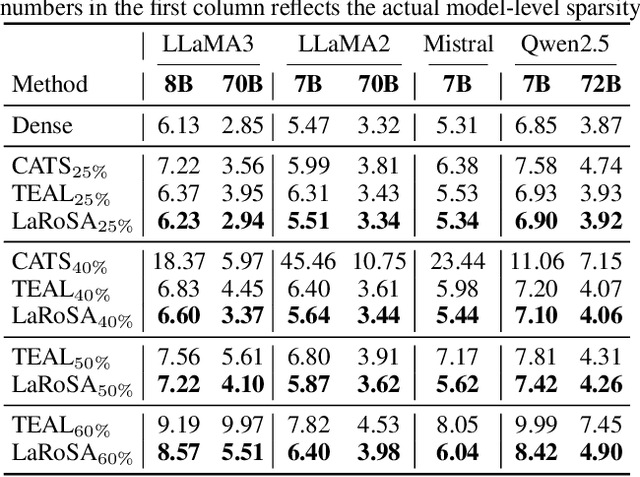

Abstract: Activation sparsity can reduce the computational overhead and memory transfers during the forward pass of Large Language Model (LLM) inference. Existing methods face limitations, either demanding time-consuming recovery training that hinders real-world adoption, or relying on empirical magnitude-based pruning, which causes fluctuating sparsity and unstable inference speed-up. This paper introduces LaRoSA (Layerwise Rotated Sparse Activation), a novel method for activation sparsification designed to improve LLM efficiency without requiring additional training or magnitude-based pruning. We leverage layerwise orthogonal rotations to transform input activations into rotated forms that are more suitable for sparsification. By employing a Top-K selection approach within the rotated activations, we achieve consistent model-level sparsity and reliable wall-clock time speed-up. LaRoSA is effective across various sizes and types of LLMs, demonstrating minimal performance degradation and robust inference acceleration. Specifically, for LLaMA2-7B at 40% sparsity, LaRoSA achieves a mere 0.17 perplexity gap with a consistent 1.30x wall-clock time speed-up, and reduces the accuracy gap in zero-shot tasks compared to the dense model to just 0.54%, while surpassing TEAL by 1.77% and CATS by 17.14%.
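The rotate-then-Top-K idea can be sketched in a few lines. The abstract does not say how the layerwise rotations are obtained, so the example below substitutes a fixed normalized 4×4 Hadamard matrix as a stand-in orthogonal rotation; the matrix `Q`, the toy weights, and the helper names are all assumptions, not the authors' implementation. The point it shows is that Top-K keeps exactly K entries per token, so the sparsity level does not fluctuate with the input.

```python
import math

def vecmat(x, m):
    """Row vector times matrix."""
    return [sum(x[i] * m[i][j] for i in range(len(x))) for j in range(len(m[0]))]

def matmul(a, b):
    """Plain list-of-lists matrix product."""
    return [[sum(p * q for p, q in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(m):
    return [list(col) for col in zip(*m)]

def top_k_sparsify(x, k):
    """Keep the k largest-magnitude entries of x and zero the rest."""
    keep = set(sorted(range(len(x)), key=lambda i: abs(x[i]), reverse=True)[:k])
    return [v if i in keep else 0.0 for i, v in enumerate(x)]

# Placeholder orthogonal rotation (normalized Hadamard), an assumption for this sketch.
Q = [[0.5 * v for v in row] for row in (
    [1, 1, 1, 1],
    [1, -1, 1, -1],
    [1, 1, -1, -1],
    [1, -1, -1, 1],
)]

# Hypothetical layer weights (4 inputs, 2 outputs) and one input activation.
W = [[1.0, 0.0], [0.5, -1.0], [-2.0, 1.0], [0.0, 0.5]]
x = [3.0, 1.0, -2.0, 0.5]

# Fold the inverse rotation into the weights once: x W = (x Q)(Q^T W).
W_rot = matmul(transpose(Q), W)

x_rot = vecmat(x, Q)                   # rotated activation
x_sparse = top_k_sparsify(x_rot, k=2)  # fixed 50% sparsity for every token

y_dense = vecmat(x, W)
y_sparse = vecmat(x_sparse, W_rot)     # approximation using half the channels
y_full = vecmat(top_k_sparsify(x_rot, k=4), W_rot)  # k = dim recovers the dense output

print("dense: ", y_dense)
print("sparse:", y_sparse)
```

With K equal to the hidden dimension the rotated path reproduces the dense output, which is a quick sanity check that the rotation was folded into the weights correctly.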

Mixture-of-Instructions: Comprehensive Alignment of a Large Language Model through the Mixture of Diverse System Prompting Instructions

Apr 29, 2024

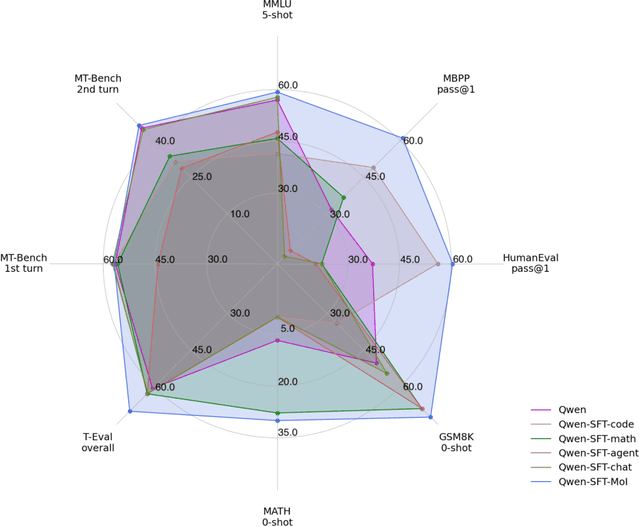

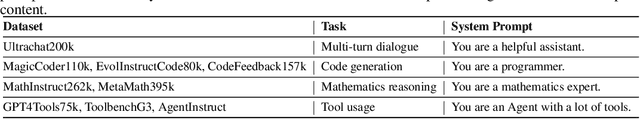

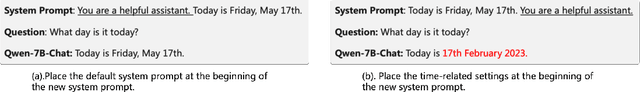

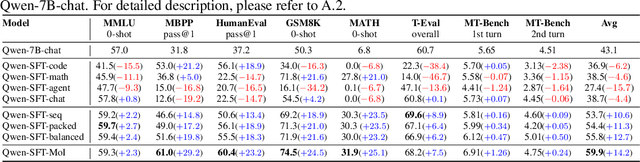

Abstract: With the proliferation of large language models (LLMs), the comprehensive alignment of such models across multiple tasks has emerged as a critical area of research. Existing alignment methodologies primarily address a single task, such as multi-turn dialogue, coding, mathematical problem-solving, or tool usage. However, AI-driven products that leverage language models usually necessitate a fusion of these abilities to function effectively in real-world scenarios. Moreover, the considerable computational resources required for proper alignment of LLMs underscore the need for a more robust, efficient, and encompassing approach to multi-task alignment, ensuring improved generative performance. In response to these challenges, we introduce a novel technique termed Mixture-of-Instructions (MoI), which employs a strategy of instruction concatenation combined with diverse system prompts to boost the alignment efficiency of language models. We have also compiled a diverse set of seven benchmark datasets to rigorously evaluate the alignment efficacy of the MoI-enhanced language model. Our methodology was applied to the open-source Qwen-7B-chat model, culminating in the development of Qwen-SFT-MoI. This enhanced model demonstrates significant advancements in generative capabilities across coding, mathematics, and tool use tasks.

Efficient Teacher: Semi-Supervised Object Detection for YOLOv5

Feb 16, 2023

Abstract: Semi-Supervised Object Detection (SSOD) has been successful in improving the performance of both R-CNN series and anchor-free detectors. However, one-stage anchor-based detectors lack the structure to generate high-quality or flexible pseudo labels, leading to serious inconsistency problems in SSOD. In this paper, we propose the Efficient Teacher framework for scalable and effective one-stage anchor-based SSOD training, consisting of Dense Detector, Pseudo Label Assigner, and Epoch Adaptor. Dense Detector is a baseline model that extends RetinaNet with dense sampling techniques inspired by YOLOv5. The Efficient Teacher framework introduces a novel pseudo label assignment mechanism, named Pseudo Label Assigner, which makes more refined use of pseudo labels from Dense Detector. Epoch Adaptor is a method that enables a stable and efficient end-to-end semi-supervised training schedule for Dense Detector. The Pseudo Label Assigner prevents the occurrence of bias caused by a large number of low-quality pseudo labels that may interfere with the Dense Detector during the student-teacher mutual learning mechanism, and the Epoch Adaptor utilizes domain and distribution adaptation to allow Dense Detector to learn globally distributed consistent features, making the training independent of the proportion of labeled data. Our experiments show that the Efficient Teacher framework achieves state-of-the-art results on VOC, COCO-standard, and COCO-additional using fewer FLOPs than previous methods. To the best of our knowledge, this is the first attempt to apply Semi-Supervised Object Detection to YOLOv5.
