Luis Felipe Gutierrez

Toward Explainable Users: Using NLP to Enable AI to Understand Users' Perceptions of Cyber Attacks

Jun 03, 2021

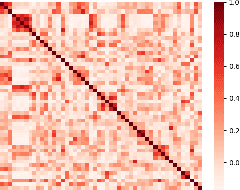

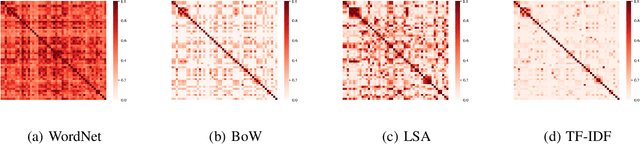

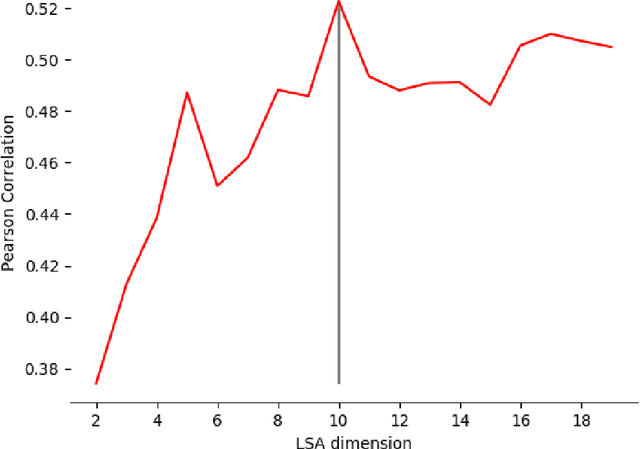

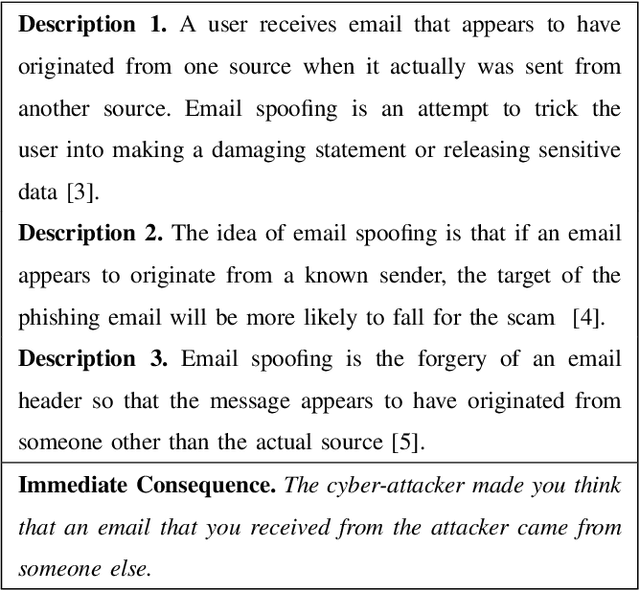

Abstract:To understand how end-users conceptualize consequences of cyber security attacks, we performed a card sorting study, a well-known technique in Cognitive Sciences, where participants were free to group the given consequences of chosen cyber attacks into as many categories as they wished using rationales they see fit. The results of the open card sorting study showed a large amount of inter-participant variation making the research team wonder how the consequences of security attacks were comprehended by the participants. As an exploration of whether it is possible to explain user's mental model and behavior through Artificial Intelligence (AI) techniques, the research team compared the card sorting data with the outputs of a number of Natural Language Processing (NLP) techniques with the goal of understanding how participants perceived and interpreted the consequences of cyber attacks written in natural languages. The results of the NLP-based exploration methods revealed an interesting observation implying that participants had mostly employed checking individual keywords in each sentence to group cyber attack consequences together and less considered the semantics behind the description of consequences of cyber attacks. The results reported in this paper are seemingly useful and important for cyber attacks comprehension from user's perspectives. To the best of our knowledge, this paper is the first introducing the use of AI techniques in explaining and modeling users' behavior and their perceptions about a context. The novel idea introduced here is about explaining users using AI.

Fake Reviews Detection through Analysis of Linguistic Features

Oct 08, 2020

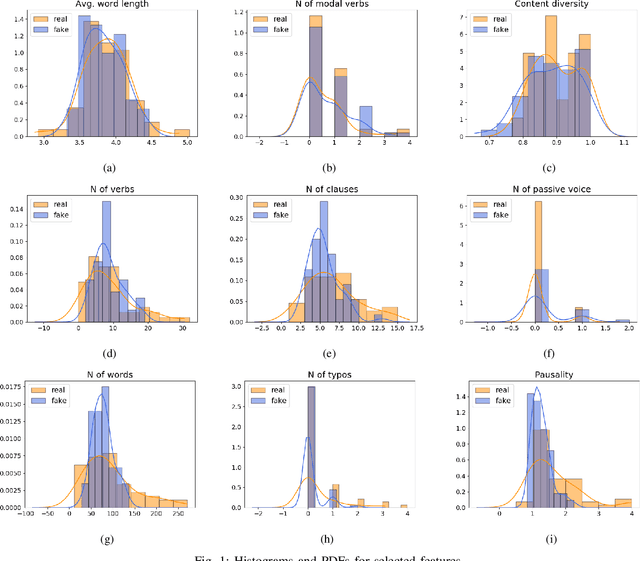

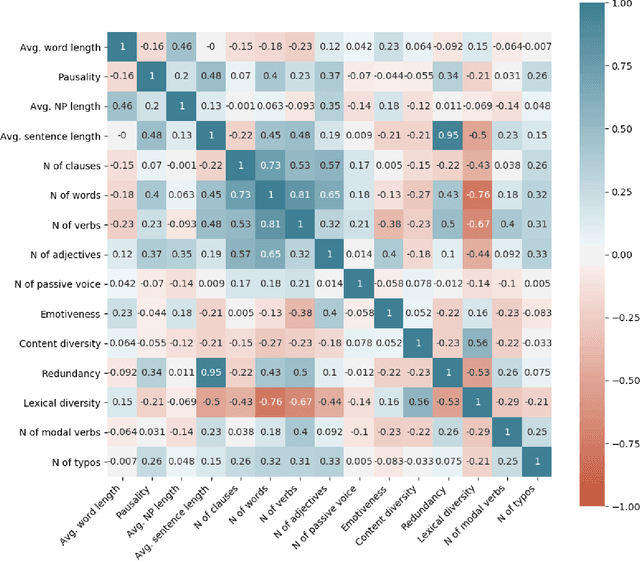

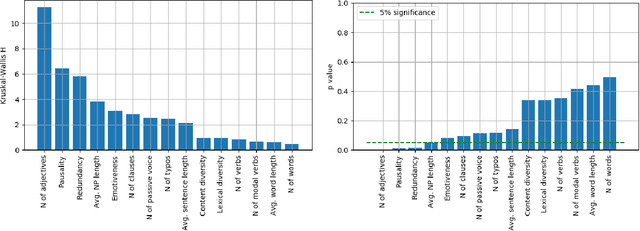

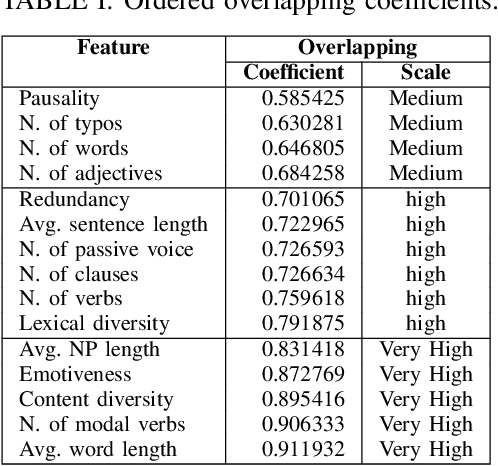

Abstract:Online reviews play an integral part for success or failure of businesses. Prior to purchasing services or goods, customers first review the online comments submitted by previous customers. However, it is possible to superficially boost or hinder some businesses through posting counterfeit and fake reviews. This paper explores a natural language processing approach to identify fake reviews. We present a detailed analysis of linguistic features for distinguishing fake and trustworthy online reviews. We study 15 linguistic features and measure their significance and importance towards the classification schemes employed in this study. Our results indicate that fake reviews tend to include more redundant terms and pauses, and generally contain longer sentences. The application of several machine learning classification algorithms revealed that we were able to discriminate fake from real reviews with high accuracy using these linguistic features.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge