Lena Kästner

Mapping the Potential of Explainable Artificial Intelligence (XAI) for Fairness Along the AI Lifecycle

Apr 30, 2024

Abstract: The widespread use of artificial intelligence (AI) systems across various domains is increasingly highlighting issues related to algorithmic fairness, especially in high-stakes scenarios. Thus, critical considerations of how fairness in AI systems might be improved, and what measures are available to aid this process, are overdue. Many researchers and policymakers see explainable AI (XAI) as a promising way to increase fairness in AI systems. However, there is a wide variety of XAI methods and fairness conceptions expressing different desiderata, and the precise connections between XAI and fairness remain largely nebulous. Besides, different measures to increase algorithmic fairness might be applicable at different points throughout an AI system's lifecycle. Yet, there currently is no coherent mapping of fairness desiderata along the AI lifecycle. In this paper, we set out to bridge both these gaps: We distill eight fairness desiderata, map them along the AI lifecycle, and discuss how XAI could help address each of them. We hope to provide orientation for practical applications and to inspire XAI research specifically focused on these fairness desiderata.

Sources of Opacity in Computer Systems: Towards a Comprehensive Taxonomy

Jul 26, 2023

Abstract: Modern computer systems are ubiquitous in contemporary life, yet many of them remain opaque. This poses significant challenges in domains where desiderata such as fairness or accountability are crucial. We suggest that the best strategy for achieving system transparency varies depending on the specific source of opacity prevalent in a given context. Synthesizing and extending existing discussions, we propose a taxonomy consisting of eight sources of opacity that fall into three main categories: architectural, analytical, and socio-technical. For each source, we provide initial suggestions as to how to address the resulting opacity in practice. The taxonomy provides a starting point for requirements engineers and other practitioners to understand contextually prevalent sources of opacity, and to select or develop appropriate strategies for overcoming them.

What Do We Want From Explainable Artificial Intelligence? -- A Stakeholder Perspective on XAI and a Conceptual Model Guiding Interdisciplinary XAI Research

Feb 15, 2021

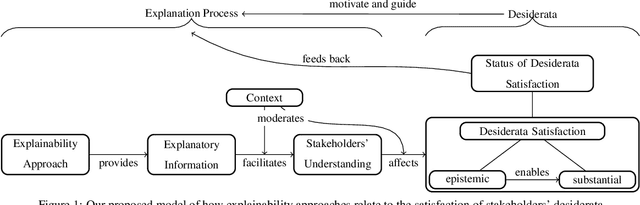

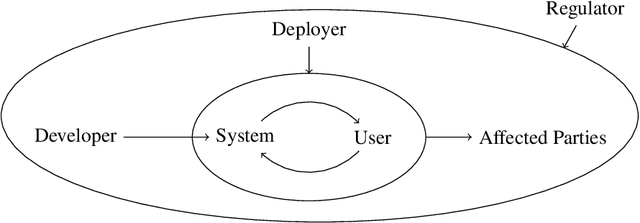

Abstract: Previous research in Explainable Artificial Intelligence (XAI) suggests that a main aim of explainability approaches is to satisfy specific interests, goals, expectations, needs, and demands regarding artificial systems (we call these stakeholders' desiderata) in a variety of contexts. However, the literature on XAI is vast, spreads out across multiple largely disconnected disciplines, and it often remains unclear how explainability approaches are supposed to achieve the goal of satisfying stakeholders' desiderata. This paper discusses the main classes of stakeholders calling for explainability of artificial systems and reviews their desiderata. We provide a model that explicitly spells out the main concepts and relations necessary to consider and investigate when evaluating, adjusting, choosing, and developing explainability approaches that aim to satisfy stakeholders' desiderata. This model can serve researchers from the variety of different disciplines involved in XAI as a common ground. It emphasizes where there is interdisciplinary potential in the evaluation and the development of explainability approaches.
