Lelia Glass

Generating event descriptions under syntactic and semantic constraints

Dec 24, 2024

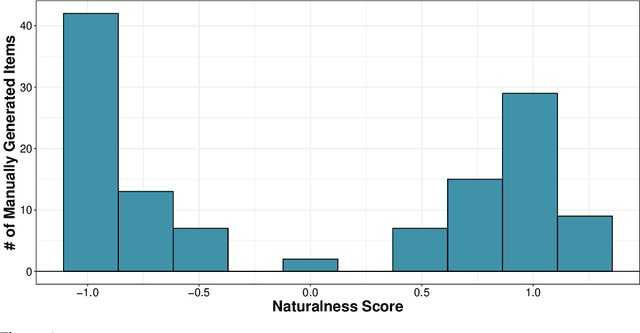

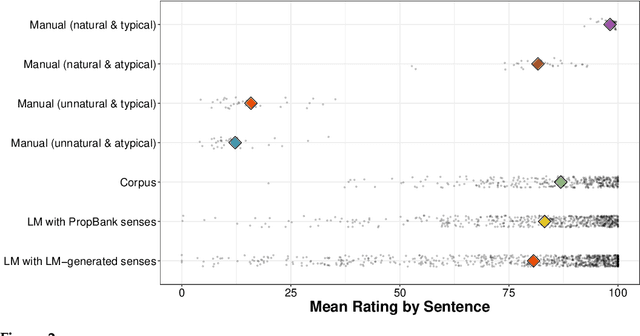

Abstract: With the goal of supporting scalable lexical semantic annotation, analysis, and theorizing, we conduct a comprehensive evaluation of different methods for generating event descriptions under both syntactic constraints -- e.g. desired clause structure -- and semantic constraints -- e.g. desired verb sense. We compare three different methods -- (i) manual generation by experts; (ii) sampling from a corpus annotated for syntactic and semantic information; and (iii) sampling from a language model (LM) conditioned on syntactic and semantic information -- along three dimensions of the generated event descriptions: (a) naturalness, (b) typicality, and (c) distinctiveness. We find that all methods reliably produce natural, typical, and distinctive event descriptions, but that manual generation continues to produce event descriptions that are more natural, typical, and distinctive than the automated generation methods. We conclude that the automated methods we consider produce event descriptions of sufficient quality for use in downstream annotation and analysis insofar as the methods used for this annotation and analysis are robust to a small amount of degradation in the resulting event descriptions.

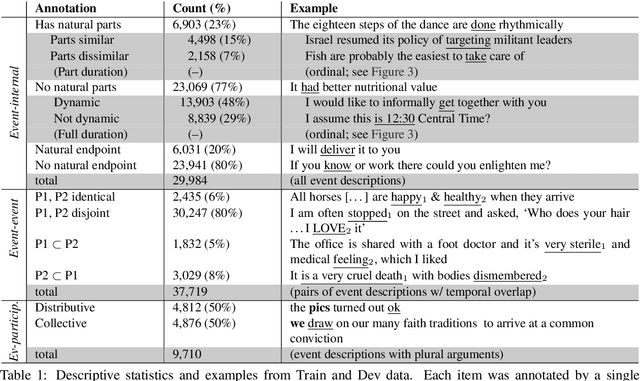

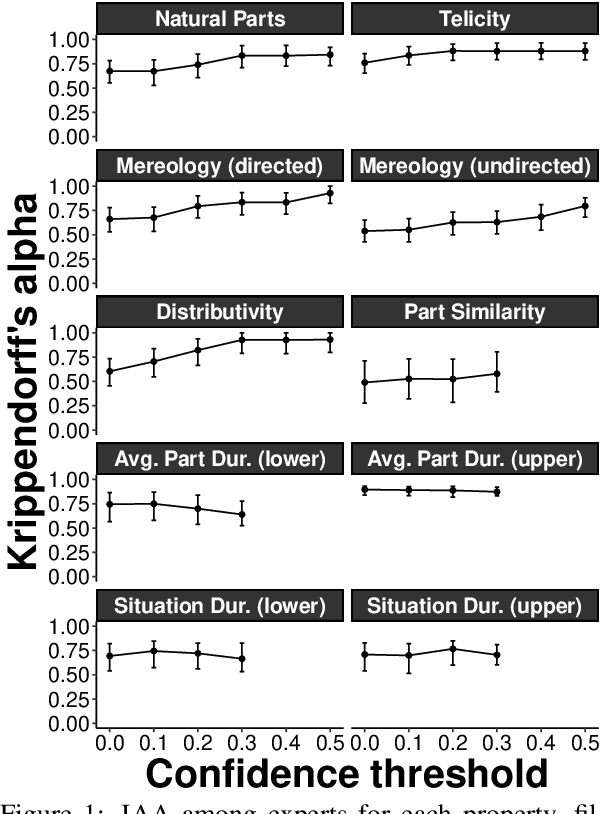

Decomposing and Recomposing Event Structure

Mar 18, 2021

Abstract: We present an event structure ontology empirically derived from inferential properties annotated on sentence- and document-level semantic graphs. We induce this ontology jointly with semantic role, entity type, and event-event relation ontologies using a document-level generative model, identifying sets of types that align closely with previous theoretically-motivated taxonomies.
