Laurent Perrinet

INT

Future-Guided Learning: A Predictive Approach To Enhance Time-Series Forecasting

Oct 19, 2024

Abstract: Accurate time-series forecasting is essential across a multitude of scientific and industrial domains, yet deep learning models often struggle with challenges such as capturing long-term dependencies and adapting to drift in data distributions over time. We introduce Future-Guided Learning, an approach that enhances time-series event forecasting through a dynamic feedback mechanism inspired by predictive coding. Our approach involves two models: a detection model that analyzes future data to identify critical events and a forecasting model that predicts these events based on present data. When discrepancies arise between the forecasting and detection models, the forecasting model undergoes more substantial updates, effectively minimizing surprise and adapting to shifts in the data distribution by aligning its predictions with actual future outcomes. This feedback loop, drawing upon principles of predictive coding, enables the forecasting model to dynamically adjust its parameters, improving accuracy by focusing on features that remain relevant despite changes in the underlying data. We validate our method on a variety of tasks such as seizure prediction in biomedical signal analysis and forecasting in dynamical systems, achieving a 40% increase in the area under the receiver operating characteristic curve (AUC-ROC) and a 10% reduction in mean absolute error (MAE), respectively. By incorporating a predictive feedback mechanism that adapts to data distribution drift, Future-Guided Learning offers a promising avenue for advancing time-series forecasting with deep learning.
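The feedback described in this abstract, where disagreement between the detection and forecasting models drives larger updates, can be illustrated with a small sketch. The abstract does not give the loss, so everything here is an assumption for illustration: the function names, the `alpha` and `temperature` parameters, and the choice of a cross-entropy term plus a KL-divergence disagreement term are hypothetical and are not claimed to be the authors' formulation.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of logits."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) for two discrete distributions of equal length."""
    return sum(pi * math.log((pi + eps) / (qi + eps)) for pi, qi in zip(p, q))


def future_guided_loss(forecast_logits, detector_logits, label,
                       alpha=0.5, temperature=2.0):
    """Hypothetical combined loss (an assumption, not the paper's definition).

    - cross-entropy ties the forecaster to the true event label;
    - the KL term grows when the forecaster (seeing present data) disagrees
      with the detector (seeing future data), so disagreement produces a
      larger loss and therefore a larger gradient step.
    """
    probs = softmax(forecast_logits)
    cross_entropy = -math.log(probs[label] + 1e-12)
    detector_probs = softmax(detector_logits, temperature)
    forecast_probs = softmax(forecast_logits, temperature)
    disagreement = kl_divergence(detector_probs, forecast_probs)
    return alpha * cross_entropy + (1.0 - alpha) * disagreement


# Same forecast and label; only the detector's opinion changes.
loss_agree = future_guided_loss([2.0, -1.0], [2.0, -1.0], label=0)
loss_disagree = future_guided_loss([2.0, -1.0], [-1.0, 2.0], label=0)
```

In this sketch `loss_disagree` exceeds `loss_agree` because only the disagreement term changes between the two calls, which is the qualitative behaviour the abstract describes.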

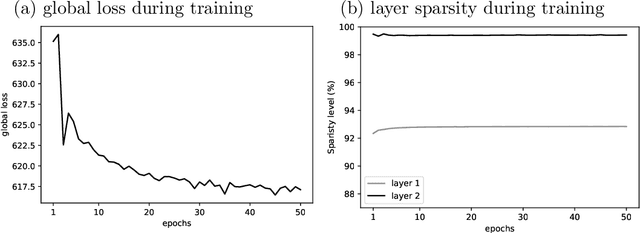

Effect of top-down connections in Hierarchical Sparse Coding

Feb 03, 2020

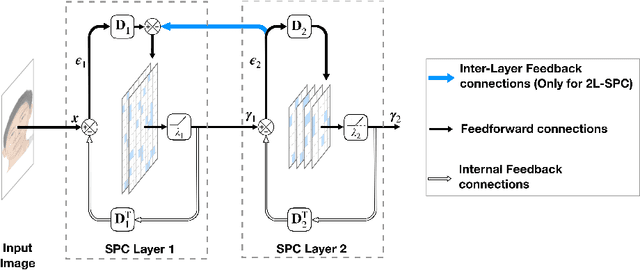

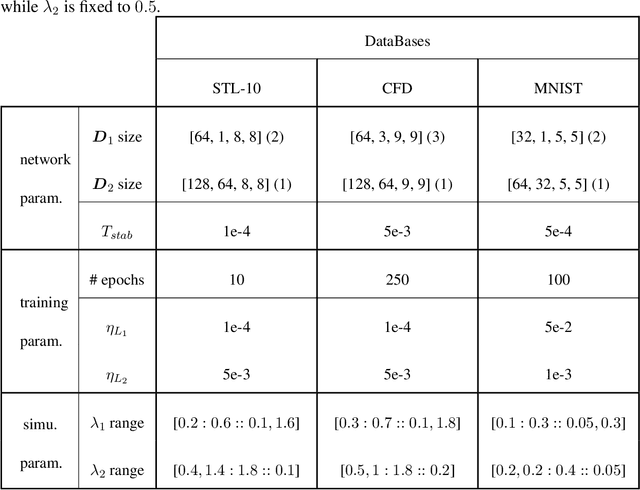

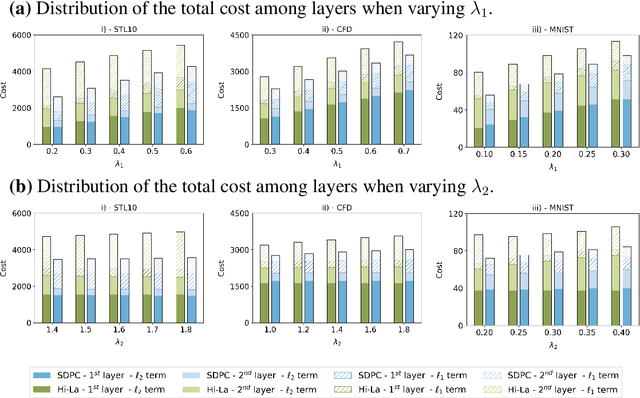

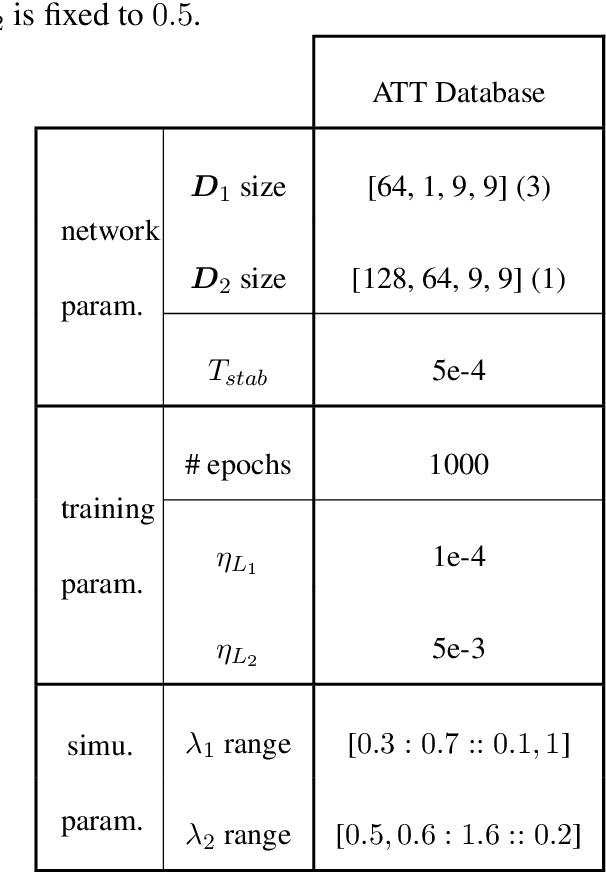

Abstract: Hierarchical Sparse Coding (HSC) is a powerful model to efficiently represent multi-dimensional, structured data such as images. The simplest way to solve this computationally hard problem is to decompose it into independent layer-wise subproblems. However, neuroscientific evidence would suggest inter-connecting these subproblems as in the Predictive Coding (PC) theory, which adds top-down connections between consecutive layers. In this study, a new model called 2-Layers Sparse Predictive Coding (2L-SPC) is introduced to assess the impact of this inter-layer feedback connection. In particular, the 2L-SPC is compared with a Hierarchical Lasso (Hi-La) network made out of a sequence of independent Lasso layers. The 2L-SPC and the 2-layer Hi-La networks are trained on 4 different databases and with different sparsity parameters on each layer. First, we show that the overall prediction error generated by 2L-SPC is lower thanks to the feedback mechanism, as it transfers prediction error between layers. Second, we demonstrate that the inference stage of the 2L-SPC converges faster than that of the Hi-La model. Third, we show that the 2L-SPC also accelerates the learning process. Finally, the qualitative analysis of both models' dictionaries, supported by their activation probabilities, shows that the 2L-SPC features are more generic and informative.
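As a rough illustration of what a single independent Lasso layer in the Hi-La baseline computes, the sketch below solves one Lasso problem with the iterative shrinkage-thresholding algorithm (ISTA). The solver choice, the function names, and the toy dictionary are assumptions made for brevity; the abstract does not specify them, and the top-down feedback term that distinguishes the 2L-SPC is deliberately not modelled here.

```python
def soft_threshold(value, threshold):
    """Proximal operator of the L1 norm: shrink a value toward zero."""
    if value > threshold:
        return value - threshold
    if value < -threshold:
        return value + threshold
    return 0.0


def ista(atoms, signal, sparsity, step, n_iter=200):
    """Minimise 0.5 * ||signal - sum_k codes[k] * atoms[k]||^2
    + sparsity * ||codes||_1 with plain ISTA.

    atoms: list of dictionary atoms, each a list the same length as signal.
    step:  gradient step; it should not exceed 1 / L, where L is the largest
           eigenvalue of the Gram matrix of the atoms.
    """
    n_atoms, dim = len(atoms), len(signal)
    codes = [0.0] * n_atoms
    for _ in range(n_iter):
        # Reconstruct the signal from the current sparse codes.
        recon = [sum(codes[k] * atoms[k][i] for k in range(n_atoms))
                 for i in range(dim)]
        residual = [s - r for s, r in zip(signal, recon)]
        # Gradient step on the quadratic term, then shrinkage.
        codes = [
            soft_threshold(
                codes[k] + step * sum(atoms[k][i] * residual[i]
                                      for i in range(dim)),
                step * sparsity,
            )
            for k in range(n_atoms)
        ]
    return codes


# Toy example with an orthonormal dictionary (so step = 1.0 is valid).
toy_atoms = [[1.0, 0.0], [0.0, 1.0]]
toy_codes = ista(toy_atoms, signal=[3.0, 0.2], sparsity=0.5, step=1.0)
```

With an orthonormal dictionary the Lasso solution is simply the soft-thresholded signal, so the small second coefficient is driven exactly to zero while the large one is shrunk by the sparsity parameter.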

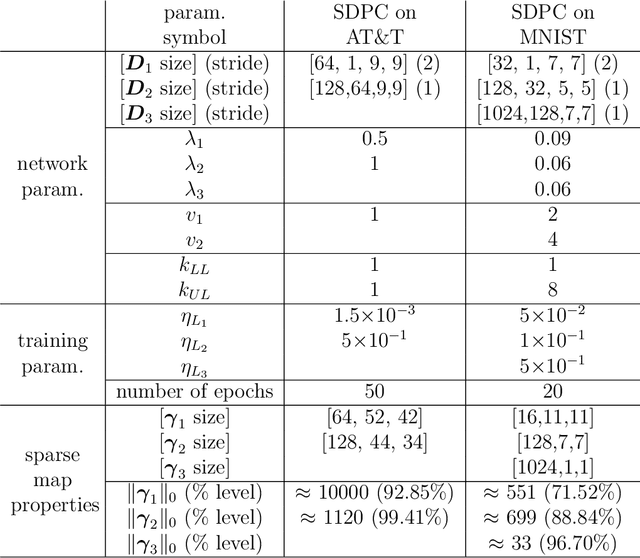

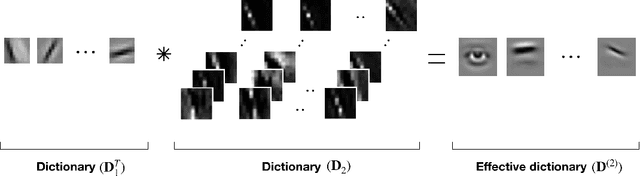

Meaningful representations emerge from Sparse Deep Predictive Coding

Feb 20, 2019

Abstract: Convolutional Neural Networks (CNNs) are the state-of-the-art algorithms used in computer vision. However, these models often suffer from a lack of interpretability in their information transformation process. To address this problem, we introduce a novel model called Sparse Deep Predictive Coding (SDPC). In a biologically realistic manner, SDPC mimics how the brain efficiently represents visual information. This model complements the hierarchical convolutional layers found in CNNs with the feed-forward and feed-back update scheme described in the Predictive Coding (PC) theory and found in the architecture of the mammalian visual system. We experimentally demonstrate on two databases that the SDPC model extracts qualitatively meaningful features. These features, besides being similar to some of the biological Receptive Fields of the visual cortex, also represent hierarchically independent components of the image that are crucial to describe it in a generic manner. For the first time, the SDPC model demonstrates a meaningful representation of features within the hierarchical generative model and of the decision-making process leading to a specific prediction. A quantitative analysis reveals that the features extracted by the SDPC model encode the input image into a representation that is both easily classifiable and robust to noise.

Sparse models for Computer Vision

Jan 24, 2017

Abstract: The representation of images in the brain is known to be sparse. That is, as neural activity is recorded in a visual area (for instance the primary visual cortex of primates), only a few neurons are active at a given time with respect to the whole population. It is believed that such a property reflects the efficient match of the representation with the statistics of natural scenes. Applying such a paradigm to computer vision therefore seems a promising approach towards more biomimetic algorithms. Herein, we will describe a biologically-inspired approach to this problem. First, we will describe an unsupervised learning paradigm which is particularly adapted to the efficient coding of image patches. Then, we will outline a complete multi-scale framework (SparseLets) implementing a biologically inspired sparse representation of natural images. Finally, we will propose novel methods for integrating prior information into these algorithms and provide some preliminary experimental results. We will conclude by giving some perspective on applying such algorithms to computer vision. More specifically, we will propose that bio-inspired approaches may be applied to computer vision using predictive coding schemes, sparse models being one simple and efficient instance of such schemes.