Laura I. Galindez Olascoaga

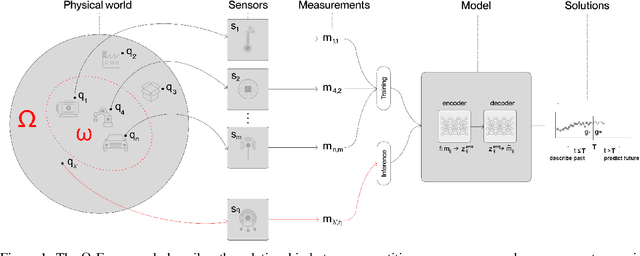

A Phenomenological AI Foundation Model for Physical Signals

Oct 15, 2024

Abstract:The objective of this work is to develop an AI foundation model for physical signals that can generalize across diverse phenomena, domains, applications, and sensing apparatuses. We propose a phenomenological approach and framework for creating and validating such AI foundation models. Based on this framework, we developed and trained a model on 0.59 billion samples of cross-modal sensor measurements, ranging from electrical current to fluid flow to optical sensors. Notably, no prior knowledge of physical laws or inductive biases were introduced into the model. Through several real-world experiments, we demonstrate that a single foundation model could effectively encode and predict physical behaviors, such as mechanical motion and thermodynamics, including phenomena not seen in training. The model also scales across physical processes of varying complexity, from tracking the trajectory of a simple spring-mass system to forecasting large electrical grid dynamics. This work highlights the potential of building a unified AI foundation model for diverse physical world processes.

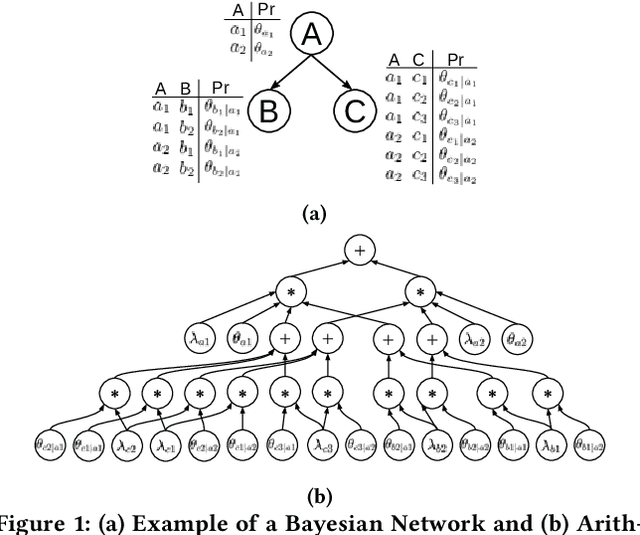

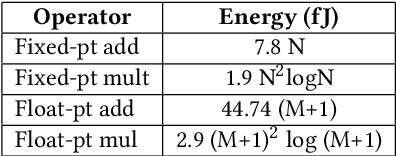

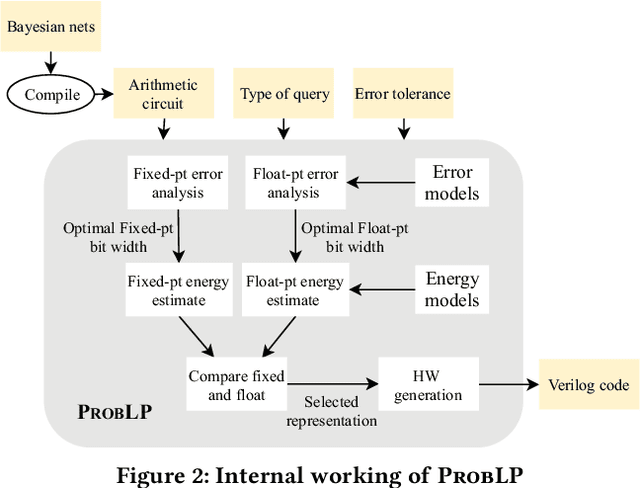

ProbLP: A framework for low-precision probabilistic inference

Feb 27, 2021

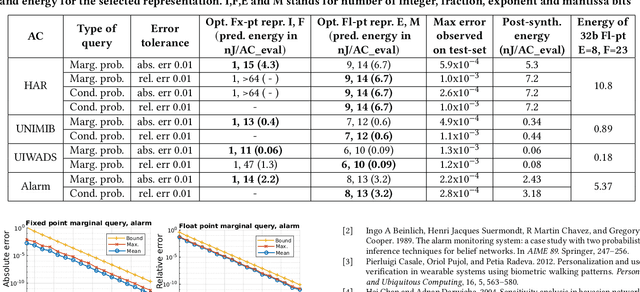

Abstract:Bayesian reasoning is a powerful mechanism for probabilistic inference in smart edge-devices. During such inferences, a low-precision arithmetic representation can enable improved energy efficiency. However, its impact on inference accuracy is not yet understood. Furthermore, general-purpose hardware does not natively support low-precision representation. To address this, we propose ProbLP, a framework that automates the analysis and design of low-precision probabilistic inference hardware. It automatically chooses an appropriate energy-efficient representation based on worst-case error-bounds and hardware energy-models. It generates custom hardware for the resulting inference network exploiting parallelism, pipelining and low-precision operation. The framework is validated on several embedded-sensing benchmarks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge