Kwangmin Yu

Fourier neural operators for spatiotemporal dynamics in two-dimensional turbulence

Sep 25, 2024Abstract:High-fidelity direct numerical simulation of turbulent flows for most real-world applications remains an outstanding computational challenge. Several machine learning approaches have recently been proposed to alleviate the computational cost even though they become unstable or unphysical for long time predictions. We identify that the Fourier neural operator (FNO) based models combined with a partial differential equation (PDE) solver can accelerate fluid dynamic simulations and thus address computational expense of large-scale turbulence simulations. We treat the FNO model on the same footing as a PDE solver and answer important questions about the volume and temporal resolution of data required to build pre-trained models for turbulence. We also discuss the pitfalls of purely data-driven approaches that need to be avoided by the machine learning models to become viable and competitive tools for long time simulations of turbulence.

Layered SGD: A Decentralized and Synchronous SGD Algorithm for Scalable Deep Neural Network Training

Jun 13, 2019

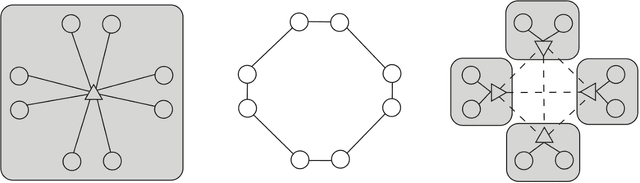

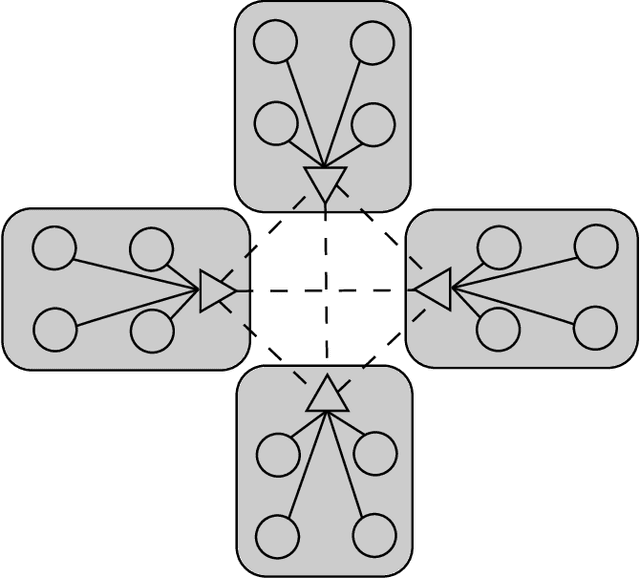

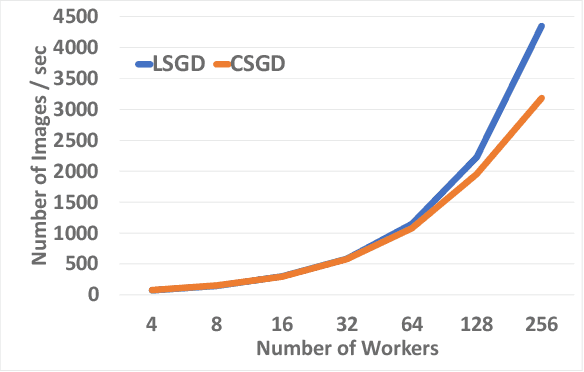

Abstract:Stochastic Gradient Descent (SGD) is the most popular algorithm for training deep neural networks (DNNs). As larger networks and datasets cause longer training times, training on distributed systems is common and distributed SGD variants, mainly asynchronous and synchronous SGD, are widely used. Asynchronous SGD is communication efficient but suffers from accuracy degradation due to delayed parameter updating. Synchronous SGD becomes communication intensive when the number of nodes increases regardless of its advantage. To address these issues, we introduce Layered SGD (LSGD), a new decentralized synchronous SGD algorithm. LSGD partitions computing resources into subgroups that each contain a communication layer (communicator) and a computation layer (worker). Each subgroup has centralized communication for parameter updates while communication between subgroups is handled by communicators. As a result, communication time is overlapped with I/O latency of workers. The efficiency of the algorithm is tested by training a deep network on the ImageNet classification task.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge