Koichi Konishi

Stability Analysis of ChatGPT-based Sentiment Analysis in AI Quality Assurance

Jan 15, 2024

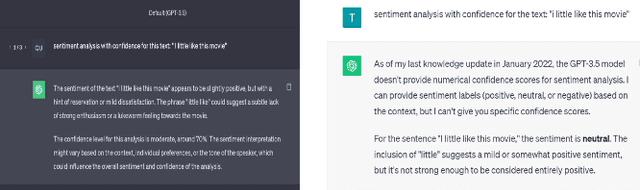

Abstract:In the era of large AI models, the complex architecture and vast parameters present substantial challenges for effective AI quality management (AIQM), e.g. large language model (LLM). This paper focuses on investigating the quality assurance of a specific LLM-based AI product--a ChatGPT-based sentiment analysis system. The study delves into stability issues related to both the operation and robustness of the expansive AI model on which ChatGPT is based. Experimental analysis is conducted using benchmark datasets for sentiment analysis. The results reveal that the constructed ChatGPT-based sentiment analysis system exhibits uncertainty, which is attributed to various operational factors. It demonstrated that the system also exhibits stability issues in handling conventional small text attacks involving robustness.

Threats, Vulnerabilities, and Controls of Machine Learning Based Systems: A Survey and Taxonomy

Jan 19, 2023

Abstract:In this article, we propose the Artificial Intelligence Security Taxonomy to systematize the knowledge of threats, vulnerabilities, and security controls of machine-learning-based (ML-based) systems. We first classify the damage caused by attacks against ML-based systems, define ML-specific security, and discuss its characteristics. Next, we enumerate all relevant assets and stakeholders and provide a general taxonomy for ML-specific threats. Then, we collect a wide range of security controls against ML-specific threats through an extensive review of recent literature. Finally, we classify the vulnerabilities and controls of an ML-based system in terms of each vulnerable asset in the system's entire lifecycle.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge