Kirill Chechil

Unsupervised Model Drift Estimation with Batch Normalization Statistics for Dataset Shift Detection and Model Selection

Jul 01, 2021

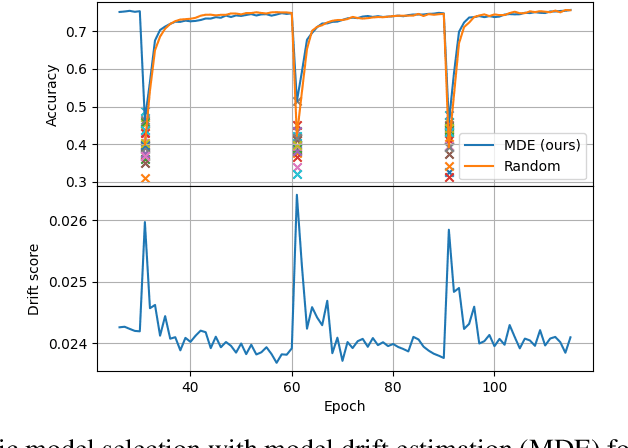

Abstract: Many real-world data streams are nonstationary and change frequently, yet most deep learning methods optimize neural networks on training data, which leads to severe performance degradation when dataset shift occurs. Because it is rarely feasible for humans to annotate or inspect newly streamed data, it is desirable to measure model drift at inference time in an unsupervised manner. In this paper, we propose a novel method for estimating model drift that exploits the statistics of batch normalization layers on unlabeled test data. To mitigate possible sampling error in the streamed input data, we apply a low-rank approximation to each representational layer. We show the effectiveness of our method not only for dataset shift detection but also for unsupervised model selection when there are multiple candidate models, drawn from a model zoo or from training trajectories. We further demonstrate the consistency of our method by comparing model drift scores across different network architectures.
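The abstract does not spell out the scoring function, so the following is only a rough sketch of the general idea of comparing batch normalization statistics without labels. It assumes, purely for illustration, that each channel is treated as a one-dimensional Gaussian and that drift is the mean KL divergence between the test-batch statistics and the stored training statistics. The names `channel_stats`, `gaussian_kl`, and `drift_score` are hypothetical, and the low-rank approximation step mentioned in the abstract is deliberately omitted.

```python
import math
import random
from statistics import fmean, pvariance


def channel_stats(batch):
    """Per-channel mean and population variance of a batch of feature vectors."""
    channels = list(zip(*batch))  # transpose: one tuple of values per channel
    means = [fmean(c) for c in channels]
    variances = [pvariance(c) for c in channels]
    return means, variances


def gaussian_kl(mu_p, var_p, mu_q, var_q, eps=1e-5):
    """KL(N(mu_p, var_p) || N(mu_q, var_q)) for scalar Gaussians."""
    var_p += eps  # eps guards against zero variance
    var_q += eps
    return 0.5 * (math.log(var_q / var_p)
                  + (var_p + (mu_p - mu_q) ** 2) / var_q
                  - 1.0)


def drift_score(train_means, train_vars, test_batch):
    """Assumed drift score: mean per-channel KL from test stats to training stats."""
    test_means, test_vars = channel_stats(test_batch)
    kls = [gaussian_kl(tm, tv, rm, rv)
           for tm, tv, rm, rv in zip(test_means, test_vars, train_means, train_vars)]
    return fmean(kls)


# Synthetic stand-in for the activations feeding one batch normalization layer.
rng = random.Random(0)
n_channels = 4
train_batch = [[rng.gauss(0.0, 1.0) for _ in range(n_channels)] for _ in range(500)]
same_batch = [[rng.gauss(0.0, 1.0) for _ in range(n_channels)] for _ in range(500)]
shifted_batch = [[rng.gauss(2.0, 1.0) for _ in range(n_channels)] for _ in range(500)]

# Stand-in for the running statistics a trained layer would have stored.
train_means, train_vars = channel_stats(train_batch)

score_same = drift_score(train_means, train_vars, same_batch)
score_shifted = drift_score(train_means, train_vars, shifted_batch)
print(f"in-distribution: {score_same:.4f}, shifted: {score_shifted:.4f}")
```

In this toy setting the shifted batch receives a noticeably larger score than the in-distribution batch, and no labels are involved at any point. In a real network the training statistics would come from the running mean and variance stored in each batch normalization layer, and the scores would need to be aggregated across layers in some way that this sketch does not attempt to specify.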
