Kenta Tsukahara

Continual Multi-Robot Learning from Black-Box Visual Place Recognition Models

Mar 04, 2025

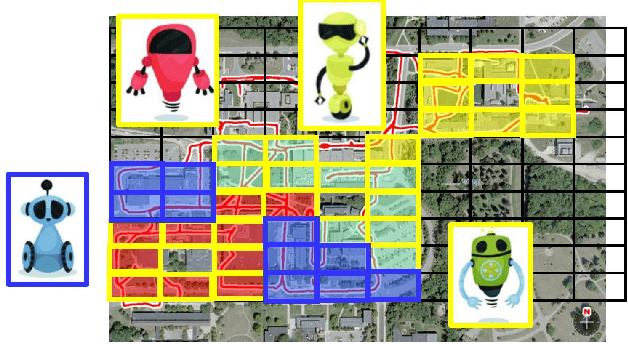

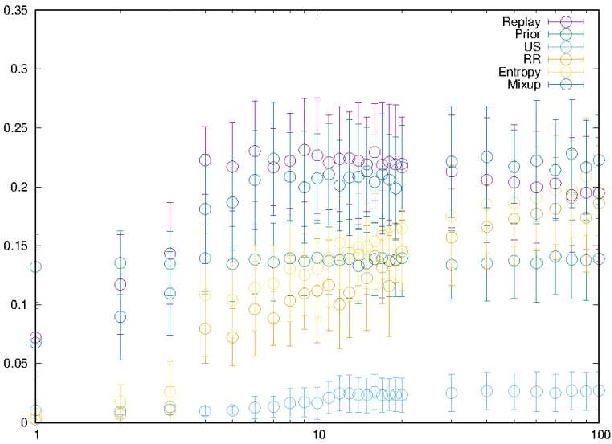

Abstract:In the context of visual place recognition (VPR), continual learning (CL) techniques offer significant potential for avoiding catastrophic forgetting when learning new places. However, existing CL methods often focus on knowledge transfer from a known model to a new one, overlooking the existence of unknown black-box models. We explore a novel multi-robot CL approach that enables knowledge transfer from black-box VPR models (teachers), such as those of local robots encountered by traveler robots (students) in unknown environments. Specifically, we introduce Membership Inference Attack, or MIA, the only major privacy attack applicable to black-box models, and leverage it to reconstruct pseudo training sets, which serve as the key knowledge to be exchanged between robots, from black-box VPR models. Furthermore, we aim to overcome the inherently low sampling efficiency of MIA by leveraging insights on place class prediction distribution and un-learned class detection imported from the VPR literature as a prior distribution. We also analyze both the individual effects of these methods and their combined impact. Experimental results demonstrate that our black-box MIA (BB-MIA) approach is remarkably powerful despite its simplicity, significantly enhancing the VPR capability of lower-performing robots through brief communication with other robots. This study contributes to optimizing knowledge sharing between robots in VPR and enhancing autonomy in open-world environments with multi-robot systems that are fault-tolerant and scalable.

LMD-PGN: Cross-Modal Knowledge Distillation from First-Person-View Images to Third-Person-View BEV Maps for Universal Point Goal Navigation

Dec 23, 2024Abstract:Point goal navigation (PGN) is a mapless navigation approach that trains robots to visually navigate to goal points without relying on pre-built maps. Despite significant progress in handling complex environments using deep reinforcement learning, current PGN methods are designed for single-robot systems, limiting their generalizability to multi-robot scenarios with diverse platforms. This paper addresses this limitation by proposing a knowledge transfer framework for PGN, allowing a teacher robot to transfer its learned navigation model to student robots, including those with unknown or black-box platforms. We introduce a novel knowledge distillation (KD) framework that transfers first-person-view (FPV) representations (view images, turning/forward actions) to universally applicable third-person-view (TPV) representations (local maps, subgoals). The state is redefined as reconstructed local maps using SLAM, while actions are mapped to subgoals on a predefined grid. To enhance training efficiency, we propose a sampling-efficient KD approach that aligns training episodes via a noise-robust local map descriptor (LMD). Although validated on 2D wheeled robots, this method can be extended to 3D action spaces, such as drones. Experiments conducted in Habitat-Sim demonstrate the feasibility of the proposed framework, requiring minimal implementation effort. This study highlights the potential for scalable and cross-platform PGN solutions, expanding the applicability of embodied AI systems in multi-robot scenarios.

Training Self-localization Models for Unseen Unfamiliar Places via Teacher-to-Student Data-Free Knowledge Transfer

Mar 13, 2024

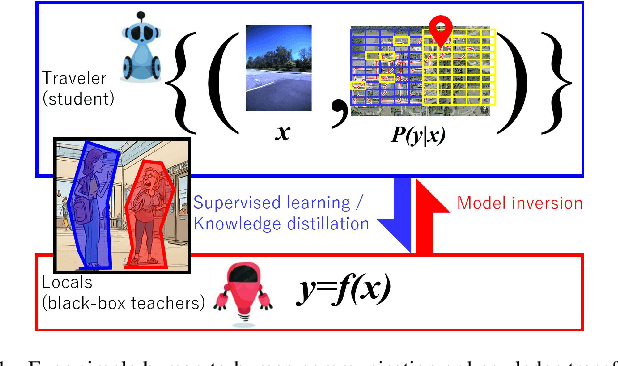

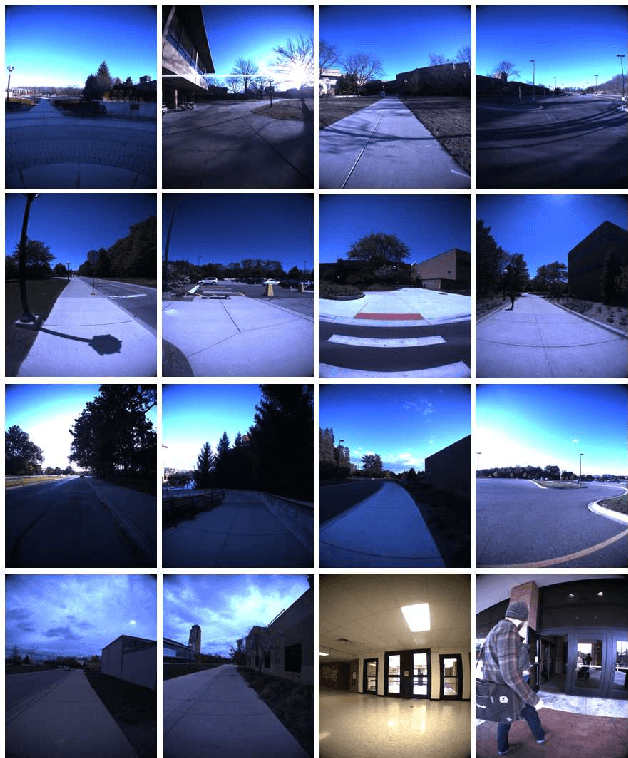

Abstract:A typical assumption in state-of-the-art self-localization models is that an annotated training dataset is available in the target workspace. However, this does not always hold when a robot travels in a general open-world. This study introduces a novel training scheme for open-world distributed robot systems. In our scheme, a robot ("student") can ask the other robots it meets at unfamiliar places ("teachers") for guidance. Specifically, a pseudo-training dataset is reconstructed from the teacher model and thereafter used for continual learning of the student model. Unlike typical knowledge transfer schemes, our scheme introduces only minimal assumptions on the teacher model, such that it can handle various types of open-set teachers, including uncooperative, untrainable (e.g., image retrieval engines), and blackbox teachers (i.e., data privacy). Rather than relying on the availability of private data of teachers as in existing methods, we propose to exploit an assumption that holds universally in self-localization tasks: "The teacher model is a self-localization system" and to reuse the self-localization system of a teacher as a sole accessible communication channel. We particularly focus on designing an excellent student/questioner whose interactions with teachers can yield effective question-and-answer sequences that can be used as pseudo-training datasets for the student self-localization model. When applied to a generic recursive knowledge distillation scenario, our approach exhibited stable and consistent performance improvement.

Recursive Distillation for Open-Set Distributed Robot Localization

Dec 26, 2023Abstract:A typical assumption in state-of-the-art self-localization models is that an annotated training dataset is available for the target workspace. However, this is not necessarily true when a robot travels around the general open world. This work introduces a novel training scheme for open-world distributed robot systems. In our scheme, a robot (``student") can ask the other robots it meets at unfamiliar places (``teachers") for guidance. Specifically, a pseudo-training dataset is reconstructed from the teacher model and then used for continual learning of the student model under domain, class, and vocabulary incremental setup. Unlike typical knowledge transfer schemes, our scheme introduces only minimal assumptions on the teacher model, so that it can handle various types of open-set teachers, including those uncooperative, untrainable (e.g., image retrieval engines), or black-box teachers (i.e., data privacy). In this paper, we investigate a ranking function as an instance of such generic models, using a challenging data-free recursive distillation scenario, where a student once trained can recursively join the next-generation open teacher set.

A Multi-modal Approach to Single-modal Visual Place Classification

May 11, 2023Abstract:Visual place classification from a first-person-view monocular RGB image is a fundamental problem in long-term robot navigation. A difficulty arises from the fact that RGB image classifiers are often vulnerable to spatial and appearance changes and degrade due to domain shifts, such as seasonal, weather, and lighting differences. To address this issue, multi-sensor fusion approaches combining RGB and depth (D) (e.g., LIDAR, radar, stereo) have gained popularity in recent years. Inspired by these efforts in multimodal RGB-D fusion, we explore the use of pseudo-depth measurements from recently-developed techniques of ``domain invariant" monocular depth estimation as an additional pseudo depth modality, by reformulating the single-modal RGB image classification task as a pseudo multi-modal RGB-D classification problem. Specifically, a practical, fully self-supervised framework for training, appropriately processing, fusing, and classifying these two modalities, RGB and pseudo-D, is described. Experiments on challenging cross-domain scenarios using public NCLT datasets validate effectiveness of the proposed framework.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge