Keisuke Sugita

Embedded Image-to-Image Translation for Efficient Sim-to-Real Transfer in Learning-based Robot-Assisted Soft Manipulation

Sep 16, 2024

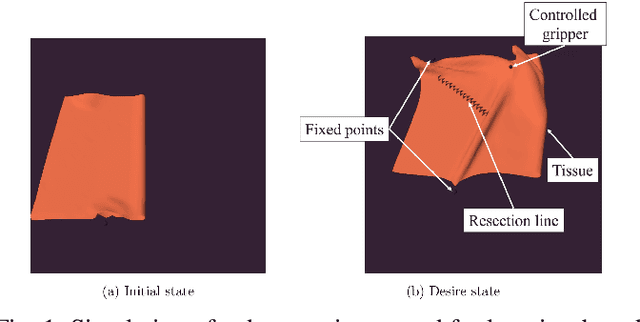

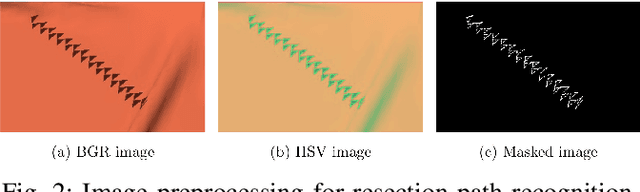

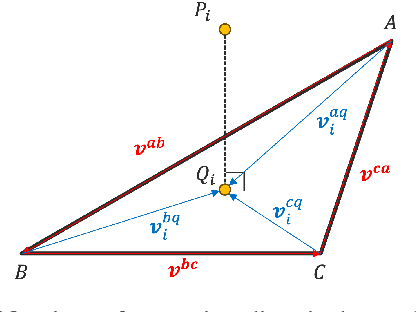

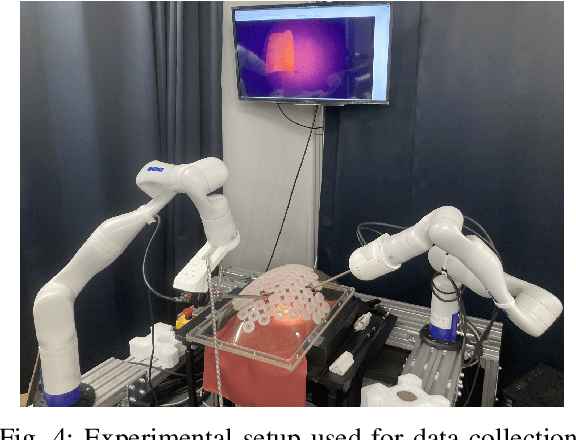

Abstract: Recent advances in robotic learning in simulation have shown impressive results in accelerating the learning of complex manipulation skills. However, the sim-to-real gap, caused by discrepancies between simulation and reality, poses significant challenges for the effective deployment of autonomous surgical systems. We propose a novel approach that uses image translation models to mitigate domain mismatches and facilitate efficient robot skill learning in a simulated environment. Our method applies contrastive unpaired image-to-image translation and extracts embedded representations from the translated images. These embeddings are then used to improve the efficiency of training surgical manipulation models. We conducted experiments to evaluate the performance of our approach, demonstrating that it significantly enhances task success rates and reduces the number of steps required for task completion compared to traditional methods. The results indicate that our proposed system effectively bridges the sim-to-real gap, providing a robust framework for advancing the autonomy of surgical robots in minimally invasive procedures.
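The abstract does not state which loss the translation model uses. Contrastive unpaired translation methods commonly rely on an InfoNCE-style objective that pulls the feature of a translated patch toward the feature of the corresponding input patch and pushes it away from features of other patches. The following is a minimal sketch of that objective under those assumptions, not the authors' implementation; the function names, the plain-list feature vectors, and the temperature value of 0.07 are all illustrative choices.

```python
import math


def l2_normalize(v):
    """Scale a vector to unit length (returned unchanged if it is all zeros)."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm > 0 else list(v)


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def info_nce_loss(query, positive, negatives, temperature=0.07):
    """InfoNCE loss for one query feature (hypothetical helper).

    query:     feature of a patch from the translated image
    positive:  feature of the same patch location in the input image
    negatives: features of other patch locations in the input image
    """
    q = l2_normalize(query)
    # Cosine similarities scaled by temperature; index 0 is the positive pair.
    logits = [dot(q, l2_normalize(positive)) / temperature]
    logits += [dot(q, l2_normalize(n)) / temperature for n in negatives]
    # Cross-entropy with the positive as the target class,
    # computed with a numerically stable log-sum-exp.
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(l - m) for l in logits))
    return log_sum_exp - logits[0]


# Toy 2-D features: the query matches the positive in the first call only.
aligned = info_nce_loss([1.0, 0.0], [1.0, 0.0], [[0.0, 1.0], [-1.0, 0.0]])
misaligned = info_nce_loss([1.0, 0.0], [0.0, 1.0], [[1.0, 0.0], [-1.0, 0.0]])
```

In this toy case the loss is close to zero when the query matches its positive and much larger when it matches a negative instead. In a real system the feature vectors would come from encoder layers of the translation network, and the loss would be averaged over many patches.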
