Kedeng Tong

Learned Focused Plenoptic Image Compression with Microimage Preprocessing and Global Attention

Apr 30, 2023

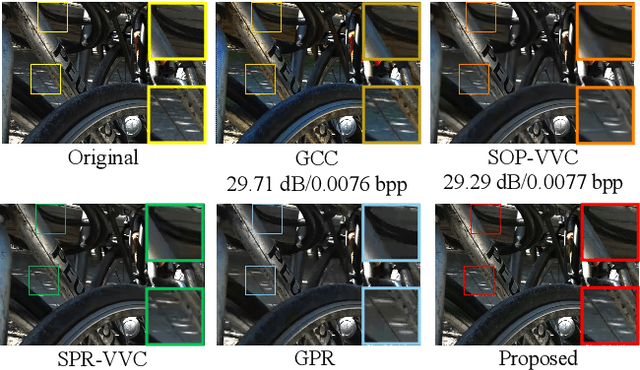

Abstract:Focused plenoptic cameras can record spatial and angular information of the light field (LF) simultaneously with higher spatial resolution relative to traditional plenoptic cameras, which facilitate various applications in computer vision. However, the existing plenoptic image compression methods present ineffectiveness to the captured images due to the complex micro-textures generated by the microlens relay imaging and long-distance correlations among the microimages. In this paper, a lossy end-to-end learning architecture is proposed to compress the focused plenoptic images efficiently. First, a data preprocessing scheme is designed according to the imaging principle to remove the sub-aperture image ineffective pixels in the recorded light field and align the microimages to the rectangular grid. Then, the global attention module with large receptive field is proposed to capture the global correlation among the feature maps using pixel-wise vector attention computed in the resampling process. Also, a new image dataset consisting of 1910 focused plenoptic images with content and depth diversity is built to benefit training and testing. Extensive experimental evaluations demonstrate the effectiveness of the proposed approach. It outperforms intra coding of HEVC and VVC by an average of 62.57% and 51.67% bitrate reduction on the 20 preprocessed focused plenoptic images, respectively. Also, it achieves 18.73% bitrate saving and generates perceptually pleasant reconstructions compared to the state-of-the-art end-to-end image compression methods, which benefits the applications of focused plenoptic cameras greatly. The dataset and code are publicly available at https://github.com/VincentChandelier/GACN.

QVRF: A Quantization-error-aware Variable Rate Framework for Learned Image Compression

Mar 10, 2023Abstract:Learned image compression has exhibited promising compression performance, but variable bitrates over a wide range remain a challenge. State-of-the-art variable rate methods compromise the loss of model performance and require numerous additional parameters. In this paper, we present a Quantization-error-aware Variable Rate Framework (QVRF) that utilizes a univariate quantization regulator a to achieve wide-range variable rates within a single model. Specifically, QVRF defines a quantization regulator vector coupled with predefined Lagrange multipliers to control quantization error of all latent representation for discrete variable rates. Additionally, the reparameterization method makes QVRF compatible with a round quantizer. Exhaustive experiments demonstrate that existing fixed-rate VAE-based methods equipped with QVRF can achieve wide-range continuous variable rates within a single model without significant performance degradation. Furthermore, QVRF outperforms contemporary variable-rate methods in rate-distortion performance with minimal additional parameters.

SADN: Learned Light Field Image Compression with Spatial-Angular Decorrelation

Feb 22, 2022

Abstract:Light field image becomes one of the most promising media types for immersive video applications. In this paper, we propose a novel end-to-end spatial-angular-decorrelated network (SADN) for high-efficiency light field image compression. Different from the existing methods that exploit either spatial or angular consistency in the light field image, SADN decouples the angular and spatial information by dilation convolution and stride convolution in spatial-angular interaction, and performs feature fusion to compress spatial and angular information jointly. To train a stable and robust algorithm, a large-scale dataset consisting of 7549 light field images is proposed and built. The proposed method provides 2.137 times and 2.849 times higher compression efficiency relative to H.266/VVC and H.265/HEVC inter coding, respectively. It also outperforms the end-to-end image compression networks by an average of 79.6% bitrate saving with much higher subjective quality and light field consistency.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge