Kecheng Fan

Mobility-Aware Joint User Scheduling and Resource Allocation for Low Latency Federated Learning

Jul 18, 2023

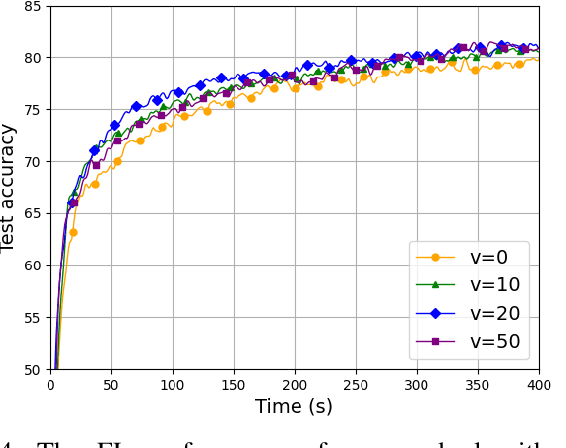

Abstract:As an efficient distributed machine learning approach, Federated learning (FL) can obtain a shared model by iterative local model training at the user side and global model aggregating at the central server side, thereby protecting privacy of users. Mobile users in FL systems typically communicate with base stations (BSs) via wireless channels, where training performance could be degraded due to unreliable access caused by user mobility. However, existing work only investigates a static scenario or random initialization of user locations, which fail to capture mobility in real-world networks. To tackle this issue, we propose a practical model for user mobility in FL across multiple BSs, and develop a user scheduling and resource allocation method to minimize the training delay with constrained communication resources. Specifically, we first formulate an optimization problem with user mobility that jointly considers user selection, BS assignment to users, and bandwidth allocation to minimize the latency in each communication round. This optimization problem turned out to be NP-hard and we proposed a delay-aware greedy search algorithm (DAGSA) to solve it. Simulation results show that the proposed algorithm achieves better performance than the state-of-the-art baselines and a certain level of user mobility could improve training performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge