Kat R. Agres

AffectMachine-Classical: A novel system for generating affective classical music

Apr 11, 2023

Abstract:This work introduces a new music generation system, called AffectMachine-Classical, that is capable of generating affective Classic music in real-time. AffectMachine was designed to be incorporated into biofeedback systems (such as brain-computer-interfaces) to help users become aware of, and ultimately mediate, their own dynamic affective states. That is, this system was developed for music-based MedTech to support real-time emotion self-regulation in users. We provide an overview of the rule-based, probabilistic system architecture, describing the main aspects of the system and how they are novel. We then present the results of a listener study that was conducted to validate the ability of the system to reliably convey target emotions to listeners. The findings indicate that AffectMachine-Classical is very effective in communicating various levels of Arousal ($R^2 = .96$) to listeners, and is also quite convincing in terms of Valence (R^2 = .90). Future work will embed AffectMachine-Classical into biofeedback systems, to leverage the efficacy of the affective music for emotional well-being in listeners.

AI-Based Affective Music Generation Systems: A Review of Methods, and Challenges

Jan 10, 2023Abstract:Music is a powerful medium for altering the emotional state of the listener. In recent years, with significant advancement in computing capabilities, artificial intelligence-based (AI-based) approaches have become popular for creating affective music generation (AMG) systems that are empowered with the ability to generate affective music. Entertainment, healthcare, and sensor-integrated interactive system design are a few of the areas in which AI-based affective music generation (AI-AMG) systems may have a significant impact. Given the surge of interest in this topic, this article aims to provide a comprehensive review of AI-AMG systems. The main building blocks of an AI-AMG system are discussed, and existing systems are formally categorized based on the core algorithm used for music generation. In addition, this article discusses the main musical features employed to compose affective music, along with the respective AI-based approaches used for tailoring them. Lastly, the main challenges and open questions in this field, as well as their potential solutions, are presented to guide future research. We hope that this review will be useful for readers seeking to understand the state-of-the-art in AI-AMG systems, and gain an overview of the methods used for developing them, thereby helping them explore this field in the future.

Generating Lead Sheets with Affect: A Novel Conditional seq2seq Framework

Apr 27, 2021

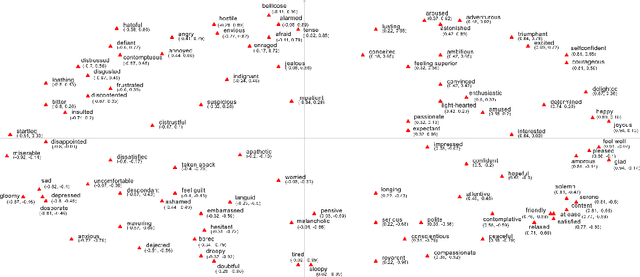

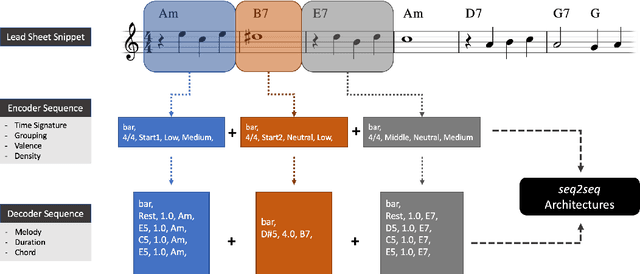

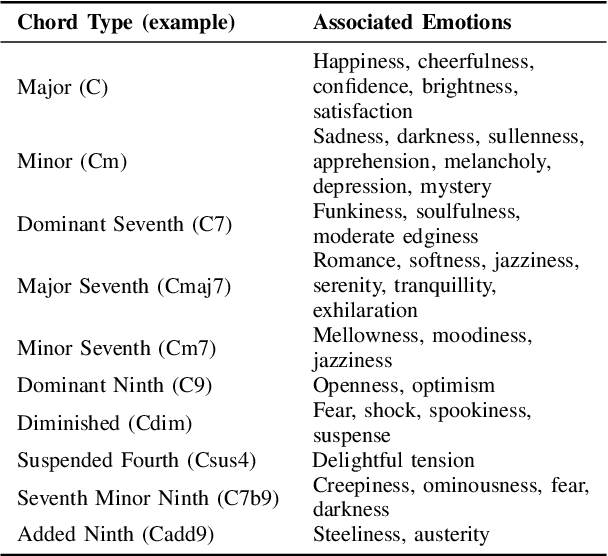

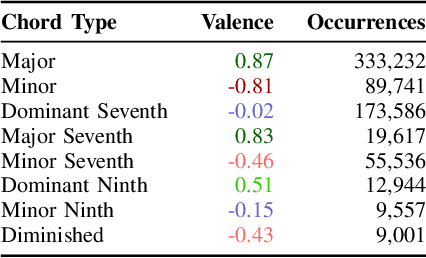

Abstract:The field of automatic music composition has seen great progress in the last few years, much of which can be attributed to advances in deep neural networks. There are numerous studies that present different strategies for generating sheet music from scratch. The inclusion of high-level musical characteristics (e.g., perceived emotional qualities), however, as conditions for controlling the generation output remains a challenge. In this paper, we present a novel approach for calculating the valence (the positivity or negativity of the perceived emotion) of a chord progression within a lead sheet, using pre-defined mood tags proposed by music experts. Based on this approach, we propose a novel strategy for conditional lead sheet generation that allows us to steer the music generation in terms of valence, phrasing, and time signature. Our approach is similar to a Neural Machine Translation (NMT) problem, as we include high-level conditions in the encoder part of the sequence-to-sequence architectures used (i.e., long-short term memory networks, and a Transformer network). We conducted experiments to thoroughly analyze these two architectures. The results show that the proposed strategy is able to generate lead sheets in a controllable manner, resulting in distributions of musical attributes similar to those of the training dataset. We also verified through a subjective listening test that our approach is effective in controlling the valence of a generated chord progression.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge