Kairui Fu

MALLOC: Benchmarking the Memory-aware Long Sequence Compression for Large Sequential Recommendation

Jan 29, 2026Abstract:The scaling law, which indicates that model performance improves with increasing dataset and model capacity, has fueled a growing trend in expanding recommendation models in both industry and academia. However, the advent of large-scale recommenders also brings significantly higher computational costs, particularly under the long-sequence dependencies inherent in the user intent of recommendation systems. Current approaches often rely on pre-storing the intermediate states of the past behavior for each user, thereby reducing the quadratic re-computation cost for the following requests. Despite their effectiveness, these methods often treat memory merely as a medium for acceleration, without adequately considering the space overhead it introduces. This presents a critical challenge in real-world recommendation systems with billions of users, each of whom might initiate thousands of interactions and require massive memory for state storage. Fortunately, there have been several memory management strategies examined for compression in LLM, while most have not been evaluated on the recommendation task. To mitigate this gap, we introduce MALLOC, a comprehensive benchmark for memory-aware long sequence compression. MALLOC presents a comprehensive investigation and systematic classification of memory management techniques applicable to large sequential recommendations. These techniques are integrated into state-of-the-art recommenders, enabling a reproducible and accessible evaluation platform. Through extensive experiments across accuracy, efficiency, and complexity, we demonstrate the holistic reliability of MALLOC in advancing large-scale recommendation. Code is available at https://anonymous.4open.science/r/MALLOC.

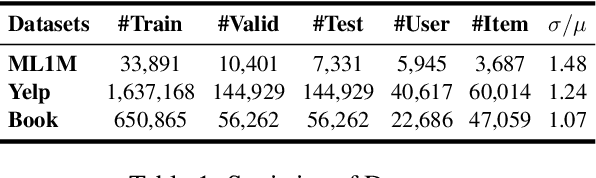

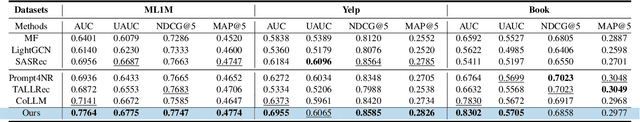

PI2I: A Personalized Item-Based Collaborative Filtering Retrieval Framework

Jan 23, 2026Abstract:Efficiently selecting relevant content from vast candidate pools is a critical challenge in modern recommender systems. Traditional methods, such as item-to-item collaborative filtering (CF) and two-tower models, often fall short in capturing the complex user-item interactions due to uniform truncation strategies and overdue user-item crossing. To address these limitations, we propose Personalized Item-to-Item (PI2I), a novel two-stage retrieval framework that enhances the personalization capabilities of CF. In the first Indexer Building Stage (IBS), we optimize the retrieval pool by relaxing truncation thresholds to maximize Hit Rate, thereby temporarily retaining more items users might be interested in. In the second Personalized Retrieval Stage (PRS), we introduce an interactive scoring model to overcome the limitations of inner product calculations, allowing for richer modeling of intricate user-item interactions. Additionally, we construct negative samples based on the trigger-target (item-to-item) relationship, ensuring consistency between offline training and online inference. Offline experiments on large-scale real-world datasets demonstrate that PI2I outperforms traditional CF methods and rivals Two-Tower models. Deployed in the "Guess You Like" section on Taobao, PI2I achieved a 1.05% increase in online transaction rates. In addition, we have released a large-scale recommendation dataset collected from Taobao, containing 130 million real-world user interactions used in the experiments of this paper. The dataset is publicly available at https://huggingface.co/datasets/PI2I/PI2I, which could serve as a valuable benchmark for the research community.

ThinkRec: Thinking-based recommendation via LLM

May 21, 2025

Abstract:Recent advances in large language models (LLMs) have enabled more semantic-aware recommendations through natural language generation. Existing LLM for recommendation (LLM4Rec) methods mostly operate in a System 1-like manner, relying on superficial features to match similar items based on click history, rather than reasoning through deeper behavioral logic. This often leads to superficial and erroneous recommendations. Motivated by this, we propose ThinkRec, a thinking-based framework that shifts LLM4Rec from System 1 to System 2 (rational system). Technically, ThinkRec introduces a thinking activation mechanism that augments item metadata with keyword summarization and injects synthetic reasoning traces, guiding the model to form interpretable reasoning chains that consist of analyzing interaction histories, identifying user preferences, and making decisions based on target items. On top of this, we propose an instance-wise expert fusion mechanism to reduce the reasoning difficulty. By dynamically assigning weights to expert models based on users' latent features, ThinkRec adapts its reasoning path to individual users, thereby enhancing precision and personalization. Extensive experiments on real-world datasets demonstrate that ThinkRec significantly improves the accuracy and interpretability of recommendations. Our implementations are available in anonymous Github: https://anonymous.4open.science/r/ThinkRec_LLM.

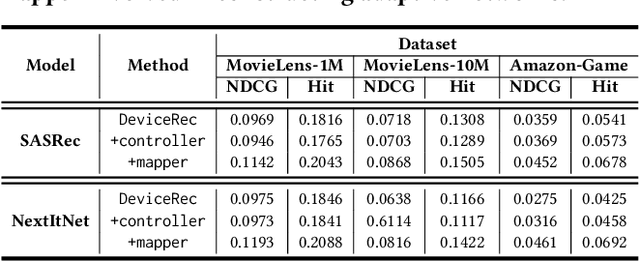

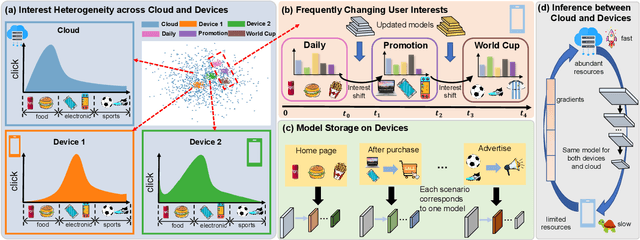

Forward Once for All: Structural Parameterized Adaptation for Efficient Cloud-coordinated On-device Recommendation

Jan 06, 2025

Abstract:In cloud-centric recommender system, regular data exchanges between user devices and cloud could potentially elevate bandwidth demands and privacy risks. On-device recommendation emerges as a viable solution by performing reranking locally to alleviate these concerns. Existing methods primarily focus on developing local adaptive parameters, while potentially neglecting the critical role of tailor-made model architecture. Insights from broader research domains suggest that varying data distributions might favor distinct architectures for better fitting. In addition, imposing a uniform model structure across heterogeneous devices may result in risking inefficacy on less capable devices or sub-optimal performance on those with sufficient capabilities. In response to these gaps, our paper introduces Forward-OFA, a novel approach for the dynamic construction of device-specific networks (both structure and parameters). Forward-OFA employs a structure controller to selectively determine whether each block needs to be assembled for a given device. However, during the training of the structure controller, these assembled heterogeneous structures are jointly optimized, where the co-adaption among blocks might encounter gradient conflicts. To mitigate this, Forward-OFA is designed to establish a structure-guided mapping of real-time behaviors to the parameters of assembled networks. Structure-related parameters and parallel components within the mapper prevent each part from receiving heterogeneous gradients from others, thus bypassing the gradient conflicts for coupled optimization. Besides, direct mapping enables Forward-OFA to achieve adaptation through only one forward pass, allowing for swift adaptation to changing interests and eliminating the requirement for on-device backpropagation. Experiments on real-world datasets demonstrate the effectiveness and efficiency of Forward-OFA.

DIET: Customized Slimming for Incompatible Networks in Sequential Recommendation

Jun 15, 2024

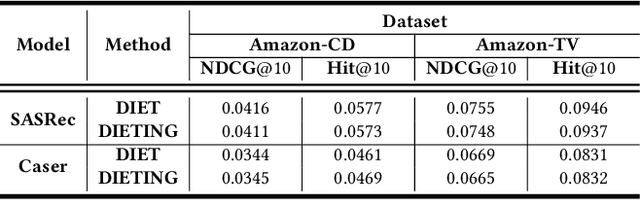

Abstract:Due to the continuously improving capabilities of mobile edges, recommender systems start to deploy models on edges to alleviate network congestion caused by frequent mobile requests. Several studies have leveraged the proximity of edge-side to real-time data, fine-tuning them to create edge-specific models. Despite their significant progress, these methods require substantial on-edge computational resources and frequent network transfers to keep the model up to date. The former may disrupt other processes on the edge to acquire computational resources, while the latter consumes network bandwidth, leading to a decrease in user satisfaction. In response to these challenges, we propose a customizeD slImming framework for incompatiblE neTworks(DIET). DIET deploys the same generic backbone (potentially incompatible for a specific edge) to all devices. To minimize frequent bandwidth usage and storage consumption in personalization, DIET tailors specific subnets for each edge based on its past interactions, learning to generate slimming subnets(diets) within incompatible networks for efficient transfer. It also takes the inter-layer relationships into account, empirically reducing inference time while obtaining more suitable diets. We further explore the repeated modules within networks and propose a more storage-efficient framework, DIETING, which utilizes a single layer of parameters to represent the entire network, achieving comparably excellent performance. The experiments across four state-of-the-art datasets and two widely used models demonstrate the superior accuracy in recommendation and efficiency in transmission and storage of our framework.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge