Juozas Vaicenavicius

Self-driving car safety quantification via component-level analysis

Sep 02, 2020

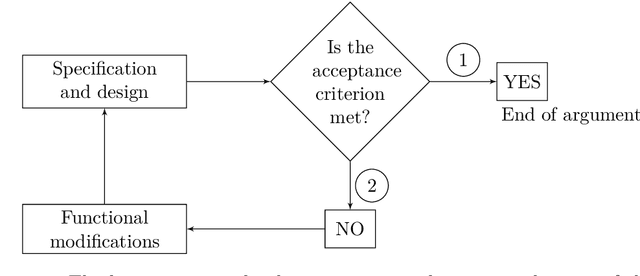

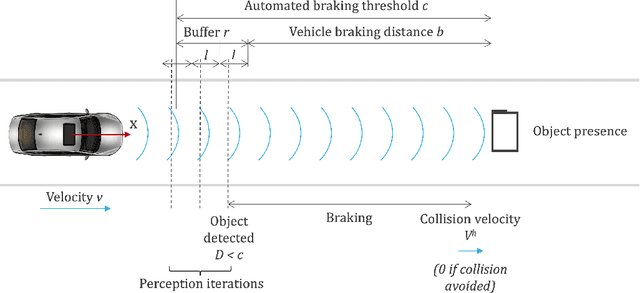

Abstract: In this paper, we present a rigorous, modular statistical approach to arguing the safety, or the insufficient safety, of an autonomous vehicle, illustrated through a concrete example. The methodology relies on appropriate quantitative studies of the performance of the vehicle's constituent components. We explain the importance of sufficient and necessary conditions at the component level for the overall safety of the vehicle. The simple example studied shows how component-level analysis of the perception system can be used to prove or disprove safety at the vehicle level.
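The link between component-level conditions and vehicle-level safety can be illustrated with a small statistical sketch. It is not the paper's method. It assumes that perception failures over n independent test trials are i.i.d. Bernoulli events, uses one-sided Clopper-Pearson bounds on the per-trial failure probability, and the helper names (`failure_rate_upper`, `failure_rate_lower`, `vehicle_verdict`) and the per-trial `target` are hypothetical. If component failure is a necessary condition for vehicle failure, then P(vehicle failure) <= P(component failure), so an upper bound below the target supports safety. If component failure is a sufficient condition, then P(vehicle failure) >= P(component failure), so a lower bound above the target shows insufficiency.

```python
import math

def _binom_cdf(k: int, n: int, p: float) -> float:
    """P(X <= k) for X ~ Binomial(n, p), summed in log space to avoid overflow."""
    if k < 0:
        return 0.0
    if k >= n or p <= 0.0:
        return 1.0
    if p >= 1.0:
        return 0.0
    log_p, log_q = math.log(p), math.log1p(-p)
    total = 0.0
    for i in range(k + 1):
        log_coef = math.lgamma(n + 1) - math.lgamma(i + 1) - math.lgamma(n - i + 1)
        total += math.exp(log_coef + i * log_p + (n - i) * log_q)
    return min(total, 1.0)

def failure_rate_upper(k: int, n: int, alpha: float = 0.05) -> float:
    """One-sided Clopper-Pearson upper bound (confidence 1 - alpha), k failures in n trials."""
    if k >= n:
        return 1.0
    lo, hi = 0.0, 1.0
    for _ in range(100):
        mid = (lo + hi) / 2
        # P(X <= k) decreases in p; the bound is where it equals alpha.
        if _binom_cdf(k, n, mid) > alpha:
            lo = mid
        else:
            hi = mid
    return hi  # round up: conservative for a safety claim

def failure_rate_lower(k: int, n: int, alpha: float = 0.05) -> float:
    """One-sided Clopper-Pearson lower bound (confidence 1 - alpha), k failures in n trials."""
    if k <= 0:
        return 0.0
    lo, hi = 0.0, 1.0
    for _ in range(100):
        mid = (lo + hi) / 2
        # P(X >= k) = 1 - P(X <= k - 1) increases in p.
        if 1.0 - _binom_cdf(k - 1, n, mid) < alpha:
            lo = mid
        else:
            hi = mid
    return lo  # round down: conservative for an insufficiency claim

def vehicle_verdict(k, n, target, alpha=0.05, *,
                    failure_necessary=False, failure_sufficient=False) -> str:
    """Hypothetical helper: compare component-level bounds with a vehicle-level target."""
    # Component failure necessary for vehicle failure => P(vehicle) <= P(component).
    if failure_necessary and failure_rate_upper(k, n, alpha) <= target:
        return "safe"
    # Component failure sufficient for vehicle failure => P(vehicle) >= P(component).
    if failure_sufficient and failure_rate_lower(k, n, alpha) > target:
        return "unsafe"
    return "inconclusive"

if __name__ == "__main__":
    print(vehicle_verdict(0, 3000, 1e-3, failure_necessary=True))   # safe
    print(vehicle_verdict(10, 1000, 1e-3, failure_sufficient=True))  # unsafe
    print(vehicle_verdict(2, 1000, 1e-3, failure_necessary=True,
                          failure_sufficient=True))                  # inconclusive
```

Under these assumptions, 3000 failure-free trials give an upper bound of about 0.000998, just under a target of 1e-3, while 2 failures in 1000 trials leave the question open at 95% confidence. Bisection on the binomial CDF keeps the sketch dependency-free; a library routine for exact binomial intervals would normally be preferred.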

Evaluating model calibration in classification

Feb 19, 2019

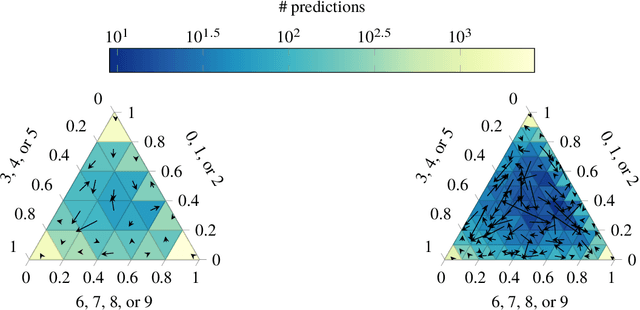

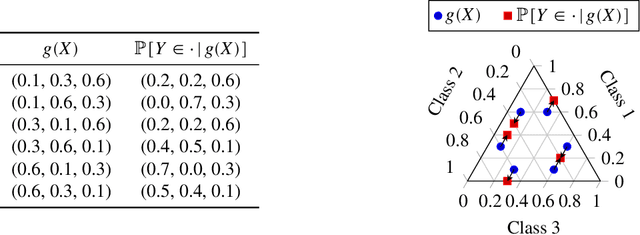

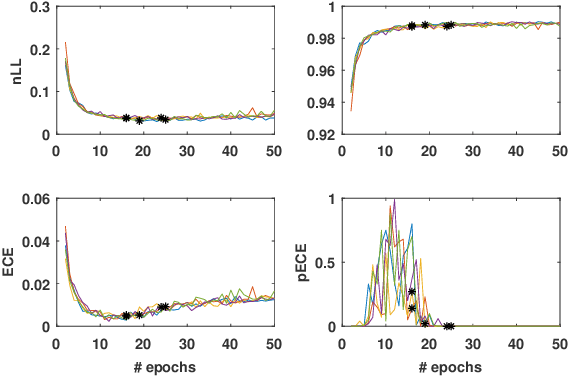

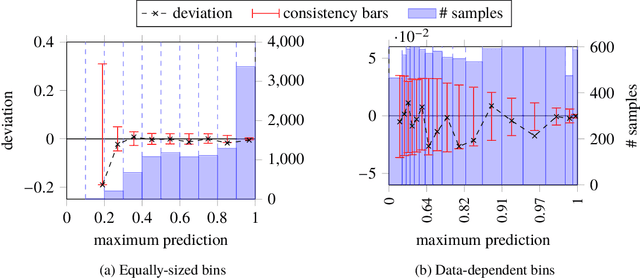

Abstract: Probabilistic classifiers output a probability distribution over target classes rather than just a class prediction. Besides providing a clear separation of prediction and decision making, the main advantage of probabilistic models is their ability to represent uncertainty about predictions. In safety-critical applications, it is pivotal for a model to possess an adequate sense of uncertainty, which for probabilistic classifiers translates into outputting probability distributions that are consistent with the empirical frequencies observed from realized outcomes. A classifier with such a property is called calibrated. In this work, we develop a general theoretical calibration evaluation framework grounded in probability theory and point out subtleties in model calibration evaluation that lead to refined interpretations of existing evaluation techniques. Lastly, we propose new ways to quantify and visualize miscalibration in probabilistic classification, including novel multidimensional reliability diagrams.
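Calibration, meaning predicted probabilities that agree with observed frequencies, is commonly checked with binned reliability diagrams and the expected calibration error (ECE), a widely used existing technique of the kind this abstract refers to. The sketch below is that standard binned estimator for the top-label prediction only, assuming equal-width bins over [0, 1] and ties broken by the lowest class index. It is not the paper's framework and does not implement its multidimensional reliability diagrams; the function names are hypothetical.

```python
from typing import List, Sequence, Tuple

def reliability_bins(
    probs: Sequence[Sequence[float]], labels: Sequence[int], n_bins: int = 10
) -> List[Tuple[float, float, int]]:
    """Per-bin (mean confidence, accuracy, count) for the top-label prediction."""
    counts = [0] * n_bins
    conf_sums = [0.0] * n_bins
    hits = [0] * n_bins
    for p, y in zip(probs, labels):
        conf = max(p)
        pred = list(p).index(conf)  # ties: lowest class index wins
        b = min(int(conf * n_bins), n_bins - 1)  # equal-width bins; conf == 1.0 goes in the last bin
        counts[b] += 1
        conf_sums[b] += conf
        hits[b] += int(pred == y)
    # Empty bins are skipped: they contribute nothing to the diagram or the ECE.
    return [(s / c, h / c, c) for c, s, h in zip(counts, conf_sums, hits) if c]

def expected_calibration_error(probs, labels, n_bins: int = 10) -> float:
    """Count-weighted mean of |accuracy - mean confidence| over non-empty bins."""
    bins = reliability_bins(probs, labels, n_bins)
    n = sum(c for _, _, c in bins)
    return sum(c / n * abs(acc - conf) for conf, acc, c in bins)

if __name__ == "__main__":
    # Hypothetical 3-class predictions and true labels.
    probs = [[0.7, 0.2, 0.1], [0.1, 0.8, 0.1], [0.3, 0.3, 0.4], [0.6, 0.3, 0.1]]
    labels = [0, 1, 0, 0]
    print(reliability_bins(probs, labels, n_bins=5))
    print(expected_calibration_error(probs, labels, n_bins=5))
```

Each tuple from `reliability_bins` is one point of a reliability diagram (mean confidence against accuracy); a perfectly calibrated model would place every point on the diagonal and have an ECE of zero. The binned estimate depends on the choice of `n_bins`, which is one reason such scores need careful interpretation.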
