Josiah Smith

Cutting-Edge Techniques for Depth Map Super-Resolution

Jun 27, 2023Abstract:To overcome hardware limitations in commercially available depth sensors which result in low-resolution depth maps, depth map super-resolution (DMSR) is a practical and valuable computer vision task. DMSR requires upscaling a low-resolution (LR) depth map into a high-resolution (HR) space. Joint image filtering for DMSR has been applied using spatially-invariant and spatially-variant convolutional neural network (CNN) approaches. In this project, we propose a novel joint image filtering DMSR algorithm using a Swin transformer architecture. Furthermore, we introduce a Nonlinear Activation Free (NAF) network based on a conventional CNN model used in cutting-edge image restoration applications and compare the performance of the techniques. The proposed algorithms are validated through numerical studies and visual examples demonstrating improvements to state-of-the-art performance while maintaining competitive computation time for noisy depth map super-resolution.

Novel Hybrid-Learning Algorithms for Improved Millimeter-Wave Imaging Systems

Jun 27, 2023Abstract:Increasing attention is being paid to millimeter-wave (mmWave), 30 GHz to 300 GHz, and terahertz (THz), 300 GHz to 10 THz, sensing applications including security sensing, industrial packaging, medical imaging, and non-destructive testing. Traditional methods for perception and imaging are challenged by novel data-driven algorithms that offer improved resolution, localization, and detection rates. Over the past decade, deep learning technology has garnered substantial popularity, particularly in perception and computer vision applications. Whereas conventional signal processing techniques are more easily generalized to various applications, hybrid approaches where signal processing and learning-based algorithms are interleaved pose a promising compromise between performance and generalizability. Furthermore, such hybrid algorithms improve model training by leveraging the known characteristics of radio frequency (RF) waveforms, thus yielding more efficiently trained deep learning algorithms and offering higher performance than conventional methods. This dissertation introduces novel hybrid-learning algorithms for improved mmWave imaging systems applicable to a host of problems in perception and sensing. Various problem spaces are explored, including static and dynamic gesture classification; precise hand localization for human computer interaction; high-resolution near-field mmWave imaging using forward synthetic aperture radar (SAR); SAR under irregular scanning geometries; mmWave image super-resolution using deep neural network (DNN) and Vision Transformer (ViT) architectures; and data-level multiband radar fusion using a novel hybrid-learning architecture. Furthermore, we introduce several novel approaches for deep learning model training and dataset synthesis.

Deep Learning-Based Multiband Signal Fusion for 3-D SAR Super-Resolution

May 03, 2023Abstract:Three-dimensional (3-D) synthetic aperture radar (SAR) is widely used in many security and industrial applications requiring high-resolution imaging of concealed or occluded objects. The ability to resolve intricate 3-D targets is essential to the performance of such applications and depends directly on system bandwidth. However, because high-bandwidth systems face several prohibitive hurdles, an alternative solution is to operate multiple radars at distinct frequency bands and fuse the multiband signals. Current multiband signal fusion methods assume a simple target model and a small number of point reflectors, which is invalid for realistic security screening and industrial imaging scenarios wherein the target model effectively consists of a large number of reflectors. To the best of our knowledge, this study presents the first use of deep learning for multiband signal fusion. The proposed network, called kR-Net, employs a hybrid, dual-domain complex-valued convolutional neural network (CV-CNN) to fuse multiband signals and impute the missing samples in the frequency gaps between subbands. By exploiting the relationships in both the wavenumber domain and wavenumber spectral domain, the proposed framework overcomes the drawbacks of existing multiband imaging techniques for realistic scenarios at a fraction of the computation time of existing multiband fusion algorithms. Our method achieves high-resolution imaging of intricate targets previously impossible using conventional techniques and enables finer resolution capacity for concealed weapon detection and occluded object classification using multiband signaling without requiring more advanced hardware. Furthermore, a fully integrated multiband imaging system is developed using commercially available millimeter-wave (mmWave) radars for efficient multiband imaging.

Improved Static Hand Gesture Classification on Deep Convolutional Neural Networks using Novel Sterile Training Technique

May 03, 2023Abstract:In this paper, we investigate novel data collection and training techniques towards improving classification accuracy of non-moving (static) hand gestures using a convolutional neural network (CNN) and frequency-modulated-continuous-wave (FMCW) millimeter-wave (mmWave) radars. Recently, non-contact hand pose and static gesture recognition have received considerable attention in many applications ranging from human-computer interaction (HCI), augmented/virtual reality (AR/VR), and even therapeutic range of motion for medical applications. While most current solutions rely on optical or depth cameras, these methods require ideal lighting and temperature conditions. mmWave radar devices have recently emerged as a promising alternative offering low-cost system-on-chip sensors whose output signals contain precise spatial information even in non-ideal imaging conditions. Additionally, deep convolutional neural networks have been employed extensively in image recognition by learning both feature extraction and classification simultaneously. However, little work has been done towards static gesture recognition using mmWave radars and CNNs due to the difficulty involved in extracting meaningful features from the radar return signal, and the results are inferior compared with dynamic gesture classification. This article presents an efficient data collection approach and a novel technique for deep CNN training by introducing ``sterile'' images which aid in distinguishing distinct features among the static gestures and subsequently improve the classification accuracy. Applying the proposed data collection and training methods yields an increase in classification rate of static hand gestures from $85\%$ to $93\%$ and $90\%$ to $95\%$ for range and range-angle profiles, respectively.

* Accepted to IEEE Access

Efficient 3-D Near-Field MIMO-SAR Imaging for Irregular Scanning Geometries

May 03, 2023

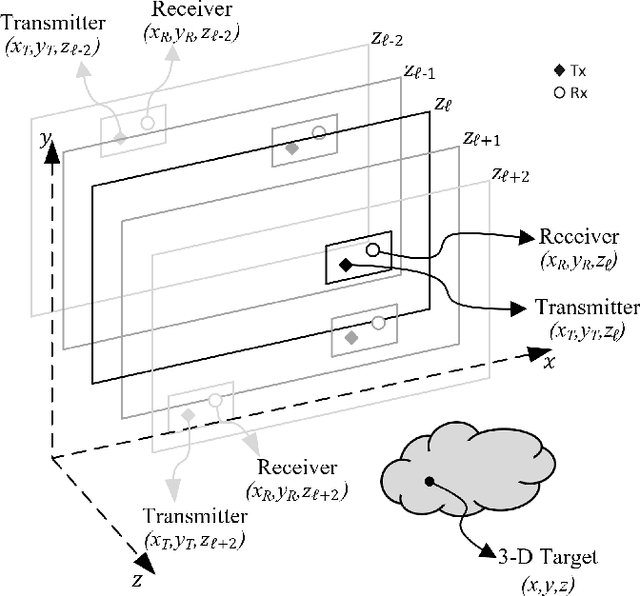

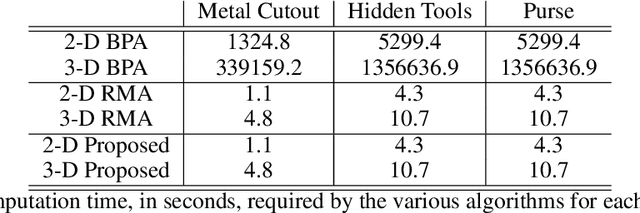

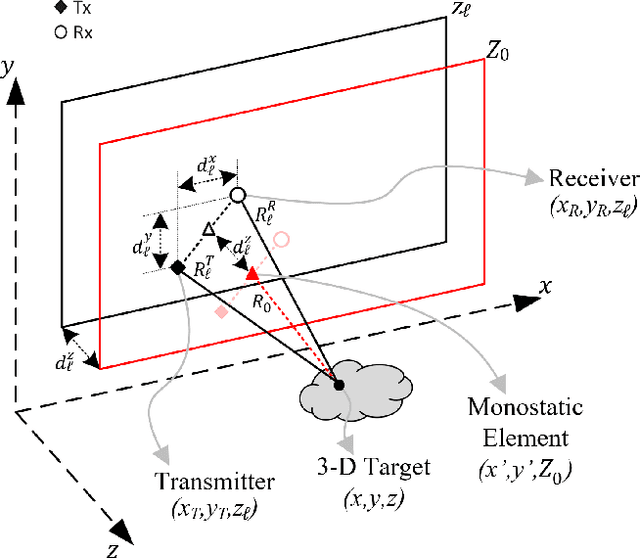

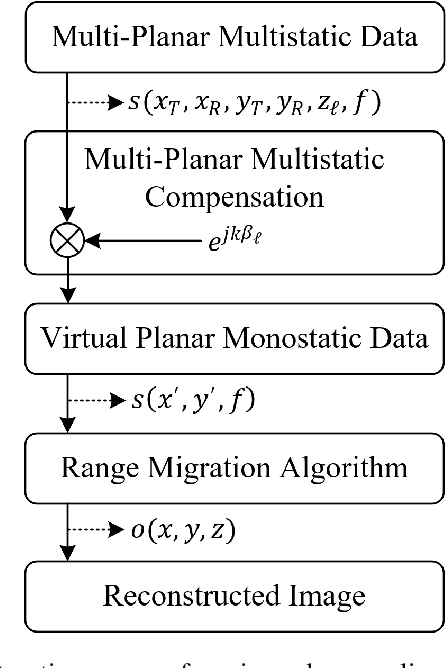

Abstract:In this article, we introduce a novel algorithm for efficient near-field synthetic aperture radar (SAR) imaging for irregular scanning geometries. With the emergence of fifth-generation (5G) millimeter-wave (mmWave) devices, near-field SAR imaging is no longer confined to laboratory environments. Recent advances in positioning technology have attracted significant interest for a diverse set of new applications in mmWave imaging. However, many use cases, such as automotive-mounted SAR imaging, unmanned aerial vehicle (UAV) imaging, and freehand imaging with smartphones, are constrained to irregular scanning geometries. Whereas traditional near-field SAR imaging systems and quick personnel security (QPS) scanners employ highly precise motion controllers to create ideal synthetic arrays, emerging applications, mentioned previously, inherently cannot achieve such ideal positioning. In addition, many Internet of Things (IoT) and 5G applications impose strict size and computational complexity limitations that must be considered for edge mmWave imaging technology. In this study, we propose a novel algorithm to leverage the advantages of non-cooperative SAR scanning patterns, small form-factor multiple-input multiple-output (MIMO) radars, and efficient monostatic planar image reconstruction algorithms. We propose a framework to mathematically decompose arbitrary and irregular sampling geometries and a joint solution to mitigate multistatic array imaging artifacts. The proposed algorithm is validated through simulations and an empirical study of arbitrary scanning scenarios. Our algorithm achieves high-resolution and high-efficiency near-field MIMO-SAR imaging, and is an elegant solution to computationally constrained irregularly sampled imaging problems.

* Accepted to IEEE Access

A Vision Transformer Approach for Efficient Near-Field Irregular SAR Super-Resolution

May 03, 2023Abstract:In this paper, we develop a novel super-resolution algorithm for near-field synthetic-aperture radar (SAR) under irregular scanning geometries. As fifth-generation (5G) millimeter-wave (mmWave) devices are becoming increasingly affordable and available, high-resolution SAR imaging is feasible for end-user applications and non-laboratory environments. Emerging applications such freehand imaging, wherein a handheld radar is scanned throughout space by a user, unmanned aerial vehicle (UAV) imaging, and automotive SAR face several unique challenges for high-resolution imaging. First, recovering a SAR image requires knowledge of the array positions throughout the scan. While recent work has introduced camera-based positioning systems capable of adequately estimating the position, recovering the algorithm efficiently is a requirement to enable edge and Internet of Things (IoT) technologies. Efficient algorithms for non-cooperative near-field SAR sampling have been explored in recent work, but suffer image defocusing under position estimation error and can only produce medium-fidelity images. In this paper, we introduce a mobile-friend vision transformer (ViT) architecture to address position estimation error and perform SAR image super-resolution (SR) under irregular sampling geometries. The proposed algorithm, Mobile-SRViT, is the first to employ a ViT approach for SAR image enhancement and is validated in simulation and via empirical studies.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge