Jonas Tebbe

Table tennis ball spin estimation with an event camera

Apr 15, 2024

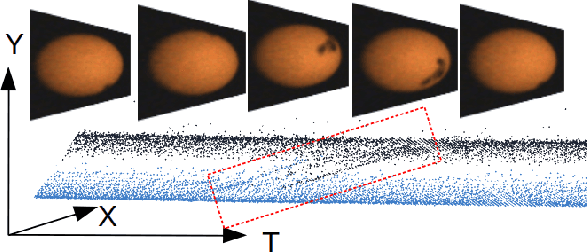

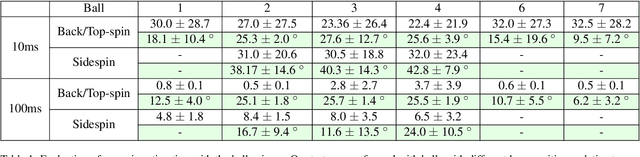

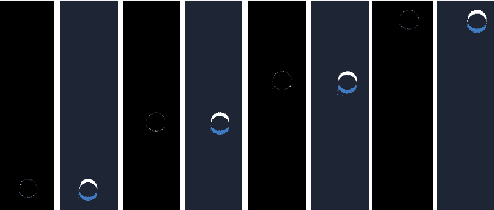

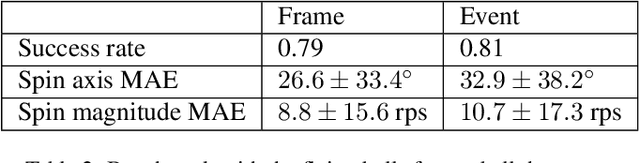

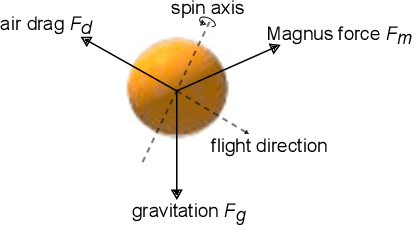

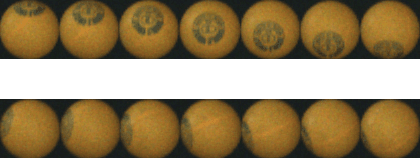

Abstract: Spin plays a pivotal role in ball-based sports. Estimating spin becomes a key skill due to its impact on the ball's trajectory and bouncing behavior. Spin cannot be observed directly, making it inherently challenging to estimate. In table tennis, the combination of high velocity and spin renders traditional low frame rate cameras inadequate for quickly and accurately observing the ball's logo to estimate the spin due to the motion blur. Event cameras do not suffer as much from motion blur, thanks to their high temporal resolution. Moreover, the sparse nature of the event stream solves communication bandwidth limitations many frame cameras face. To the best of our knowledge, we present the first method for table tennis spin estimation using an event camera. We use ordinal time surfaces to track the ball and then isolate the events generated by the logo on the ball. Optical flow is then estimated from the extracted events to infer the ball's spin. We achieved a spin magnitude mean error of $10.7 \pm 17.3$ rps and a spin axis mean error of $32.9 \pm 38.2^\circ$ in real time for a flying ball.

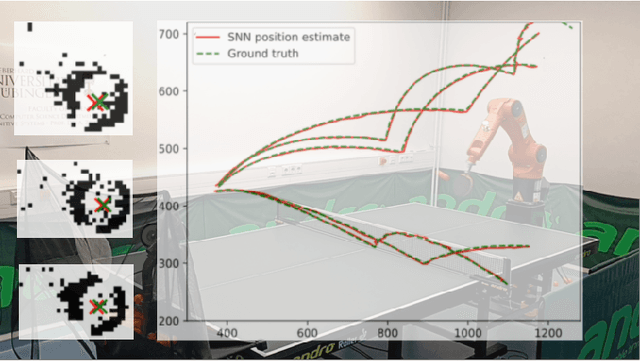

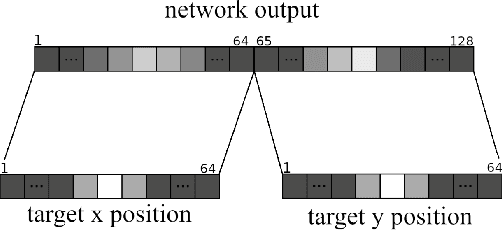

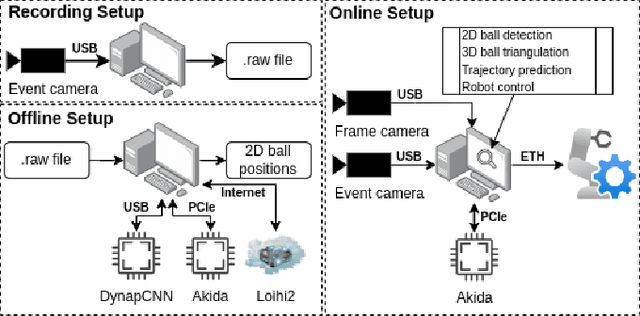

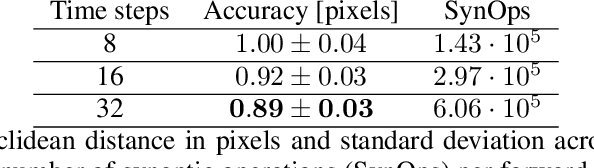

Spiking Neural Networks for Fast-Moving Object Detection on Neuromorphic Hardware Devices Using an Event-Based Camera

Mar 15, 2024

Abstract: Table tennis is a fast-paced and exhilarating sport that demands agility, precision, and fast reflexes. In recent years, robotic table tennis has become a popular research challenge for robot perception algorithms. Fast and accurate ball detection is crucial for enabling a robotic arm to rally the ball back successfully. Previous approaches have employed conventional frame-based cameras with Convolutional Neural Networks (CNNs) or traditional computer vision methods. In this paper, we propose a novel solution that combines an event-based camera with Spiking Neural Networks (SNNs) for ball detection. We use multiple state-of-the-art SNN frameworks and develop an SNN architecture for each of them, complying with their corresponding constraints. Additionally, we implement the SNN solution across multiple neuromorphic edge devices, conducting comparisons of their accuracies and run-times. This furnishes robotics researchers with a benchmark illustrating the capabilities achievable with each SNN framework and a corresponding neuromorphic edge device. Beyond this comparison of SNN solutions for robots, we also show that an SNN on a neuromorphic edge device is able to run in real time in a closed-loop robotic system, a table tennis robot in our use case.

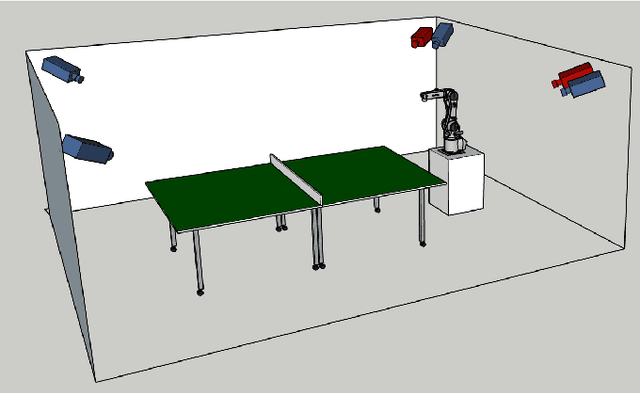

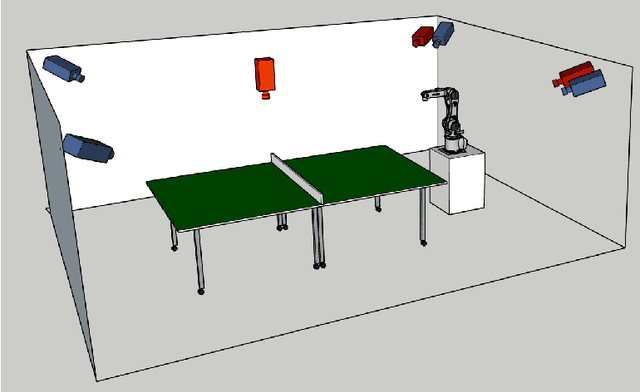

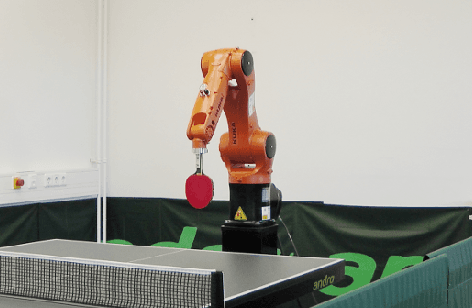

A multi-modal table tennis robot system

Oct 29, 2023

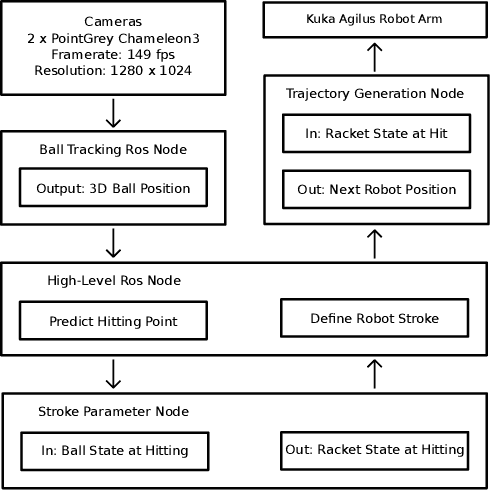

Abstract: In recent years, robotic table tennis has become a popular research challenge for perception and robot control. Here, we present an improved table tennis robot system with high-accuracy vision detection and fast robot reaction. Based on previous work, our system contains a KUKA robot arm with 6 DOF, with four frame-based cameras and two additional event-based cameras. We developed a novel calibration approach to calibrate this multimodal perception system. For table tennis, spin estimation is crucial. Therefore, we introduced a novel and more accurate spin estimation approach. Finally, we show how combining the output of an event-based camera and a Spiking Neural Network (SNN) can be used for accurate ball detection.

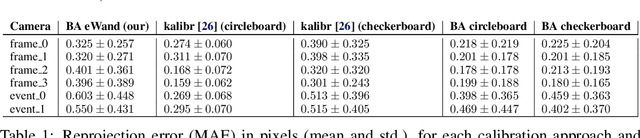

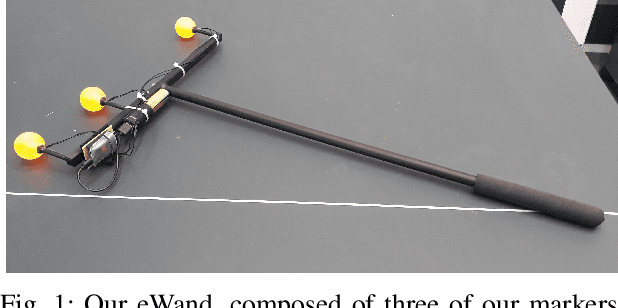

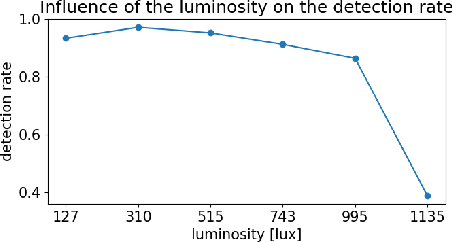

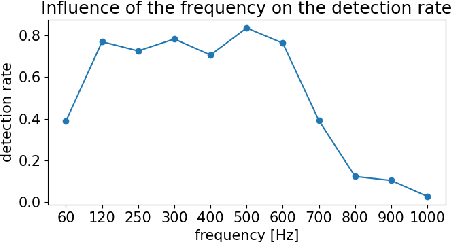

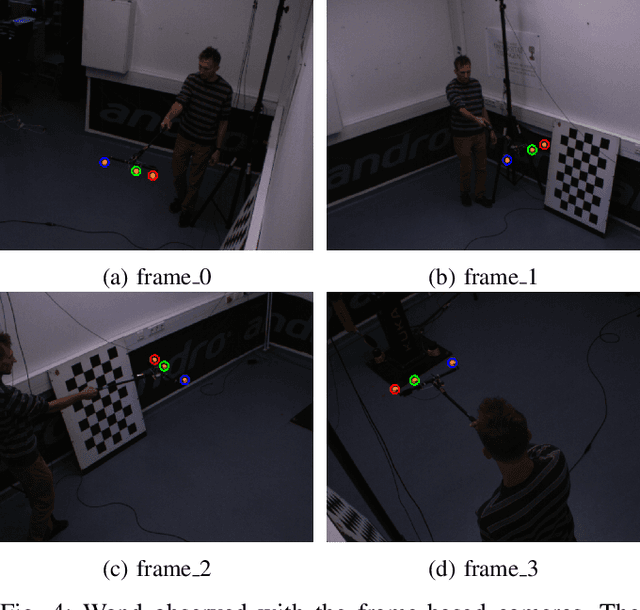

eWand: A calibration framework for wide baseline frame-based and event-based camera systems

Sep 22, 2023

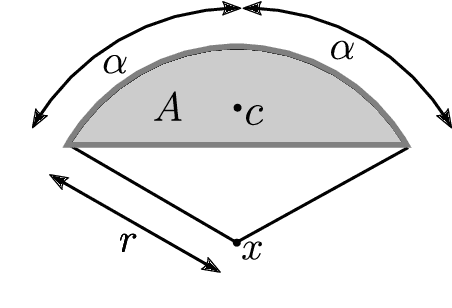

Abstract: Accurate calibration is crucial for using multiple cameras to triangulate the position of objects precisely. However, it is also a time-consuming process that needs to be repeated for every displacement of the cameras. The standard approach is to use a printed pattern with known geometry to estimate the intrinsic and extrinsic parameters of the cameras. The same idea can be applied to event-based cameras, though it requires extra work. By using frame reconstruction from events, a printed pattern can be detected. A blinking pattern can also be displayed on a screen. Then, the pattern can be directly detected from the events. Such calibration methods can provide accurate intrinsic calibration for both frame- and event-based cameras. However, using 2D patterns has several limitations for multi-camera extrinsic calibration, with cameras possessing highly different points of view and a wide baseline. The 2D pattern can only be detected from one direction and needs to be of significant size to compensate for its distance to the camera. This makes the extrinsic calibration time-consuming and cumbersome. To overcome these limitations, we propose eWand, a new method that uses blinking LEDs inside opaque spheres instead of a printed or displayed pattern. Our method provides a faster, easier-to-use extrinsic calibration approach that maintains high accuracy for both event- and frame-based cameras.

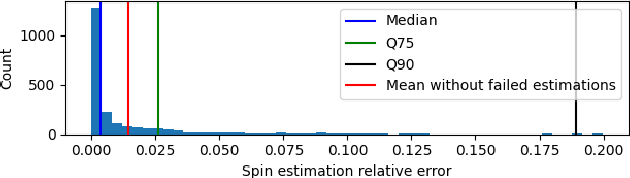

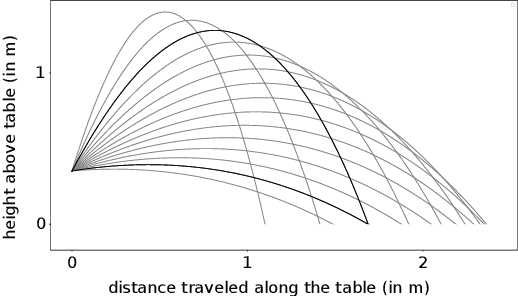

SpinDOE: A ball spin estimation method for table tennis robot

Mar 07, 2023

Abstract: Spin plays a considerable role in table tennis, making a shot's trajectory harder to read and predict. However, the spin is challenging to measure because of the ball's high velocity and the magnitude of the spin values. Existing methods either require extremely high framerate cameras or are unreliable because they use the ball's logo, which may not always be visible. Because of this, many table tennis-playing robots ignore the spin, which severely limits their capabilities. This paper proposes an easily implementable and reliable spin estimation method. We developed a dotted-ball orientation estimation (DOE) method that can then be used to estimate the spin. The dots are first localized on the image using a CNN and then identified using geometric hashing. The spin is finally regressed from the estimated orientations. Using our algorithm, the ball's orientation can be estimated with a mean error of $2.4^\circ$ and the spin estimation has a relative error lower than 1%. Spins up to 175 rps are measurable with a camera of 350 fps in real time. Using our method, we generated a dataset of table tennis ball trajectories with position and spin, available on our project page.
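The pairing of 175 rps with a 350 fps camera is consistent with a sampling limit of half a revolution between consecutive frames, beyond which the direction of rotation becomes ambiguous. The abstract does not state this reasoning, so the following Python sketch is only an assumption about where the figure could come from; the function names `max_unambiguous_spin_rps` and `rotation_per_frame_deg` are hypothetical and not taken from the paper.

```python
def max_unambiguous_spin_rps(frame_rate_fps: float) -> float:
    """Largest spin (revolutions per second) for which the ball turns
    at most half a revolution between two consecutive frames.

    Assumption: the limit is a Nyquist-style sampling bound; the
    abstract only reports the numbers, not this derivation.
    """
    if frame_rate_fps <= 0:
        raise ValueError("frame rate must be positive")
    return frame_rate_fps / 2.0


def rotation_per_frame_deg(spin_rps: float, frame_rate_fps: float) -> float:
    """Angle in degrees that the ball turns between two consecutive frames."""
    if frame_rate_fps <= 0:
        raise ValueError("frame rate must be positive")
    return 360.0 * spin_rps / frame_rate_fps


if __name__ == "__main__":
    fps = 350.0
    limit = max_unambiguous_spin_rps(fps)
    print(f"limit at {fps:.0f} fps: {limit:.0f} rps")
    print(f"rotation per frame at the limit: {rotation_per_frame_deg(limit, fps):.0f} deg")
```

Under this reading, a ball spinning at the stated maximum turns exactly 180 degrees per frame, which is the point where clockwise and counter-clockwise rotation can no longer be told apart from two orientations alone.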

Real-time event simulation with frame-based cameras

Sep 10, 2022

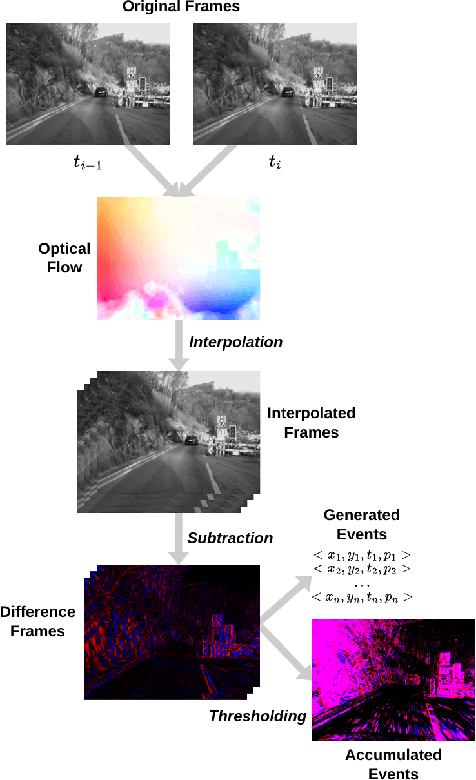

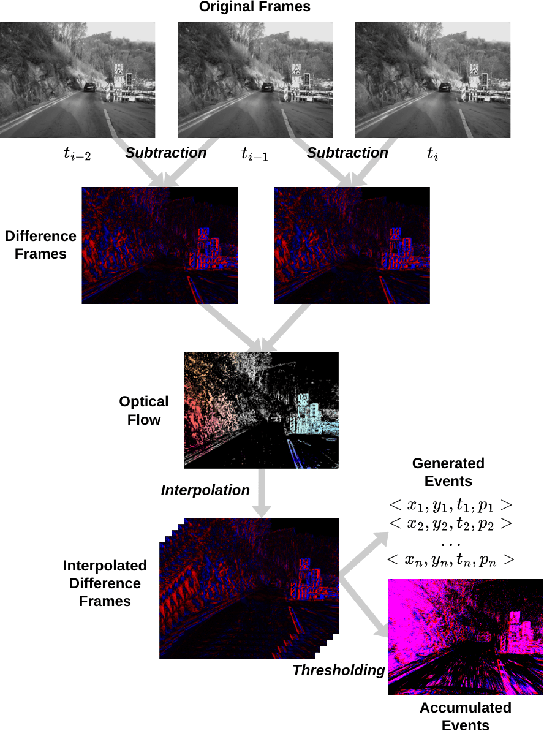

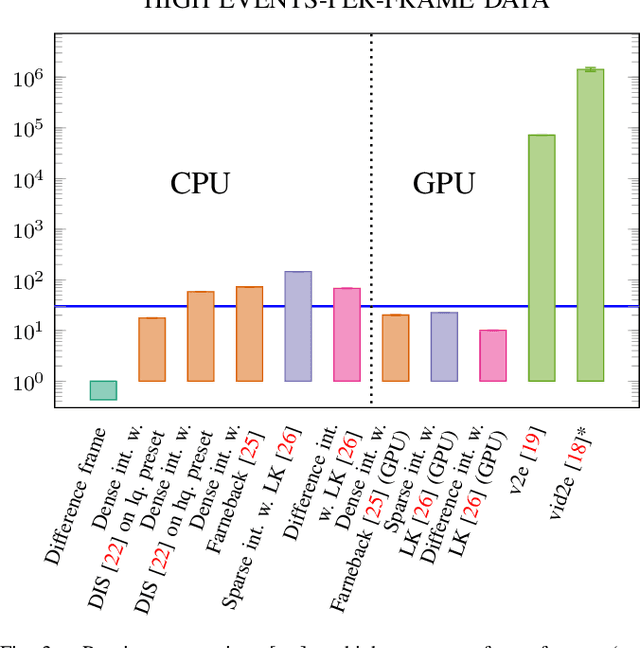

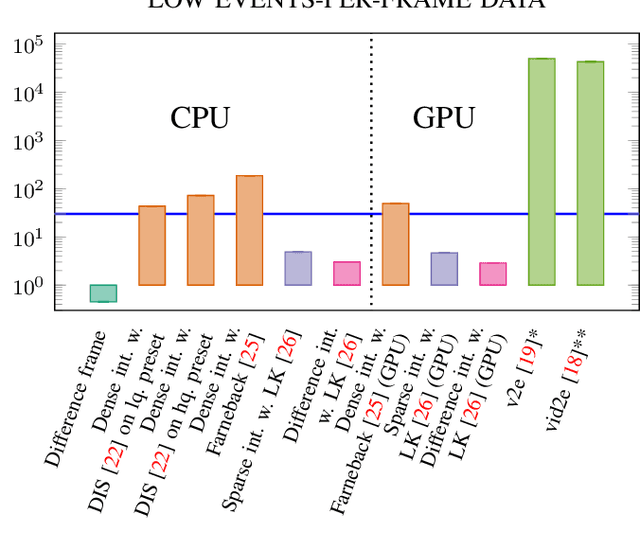

Abstract: Event cameras are becoming increasingly popular in robotics and computer vision due to their beneficial properties, e.g., high temporal resolution, high bandwidth, almost no motion blur, and low power consumption. However, these cameras remain expensive and scarce in the market, making them inaccessible to the majority. Using event simulators minimizes the need for real event cameras to develop novel algorithms. However, due to the computational complexity of the simulation, the event streams of existing simulators cannot be generated in real-time but rather have to be pre-calculated from existing video sequences or pre-rendered and then simulated from a virtual 3D scene. Although these offline generated event streams can be used as training data for learning tasks, all response-time-dependent applications cannot benefit from these simulators yet, as they still require an actual event camera. This work proposes simulation methods that improve the performance of event simulation by two orders of magnitude (making them real-time capable) while remaining competitive in the quality assessment.
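For readers unfamiliar with event simulation, the idea of turning frames into events can be illustrated with the commonly used idealized event-camera model: a pixel emits an event when its log intensity changes by at least a contrast threshold. The Python sketch below is a simplified illustration under that assumption, not the simulation methods proposed in the paper; the function name `events_from_frames`, the threshold value, and the one-event-per-pixel simplification are all hypothetical choices.

```python
import math
from typing import List, Sequence, Tuple

# (x, y, timestamp, polarity), polarity is +1 (brighter) or -1 (darker)
Event = Tuple[int, int, float, int]


def events_from_frames(
    prev: Sequence[Sequence[float]],
    curr: Sequence[Sequence[float]],
    timestamp: float,
    threshold: float = 0.2,  # assumed contrast threshold, not from the paper
    eps: float = 1e-3,       # avoids log(0) for black pixels
) -> List[Event]:
    """Emit at most one event per pixel whose log intensity changed by
    at least `threshold` between two grayscale frames (values in [0, 1]).

    Simplification: a real sensor keeps a per-pixel reference level and
    can fire several events between two frames; this sketch does not.
    """
    events: List[Event] = []
    for y, (row_prev, row_curr) in enumerate(zip(prev, curr)):
        for x, (p, c) in enumerate(zip(row_prev, row_curr)):
            delta = math.log(c + eps) - math.log(p + eps)
            if abs(delta) >= threshold:
                events.append((x, y, timestamp, 1 if delta > 0 else -1))
    return events


if __name__ == "__main__":
    frame_a = [[0.5, 0.5], [0.5, 0.5]]
    frame_b = [[0.5, 1.0], [0.1, 0.5]]
    for event in events_from_frames(frame_a, frame_b, timestamp=0.01):
        print(event)
```

The nested Python loops are written for readability only; a real-time simulator would need a vectorized or GPU implementation of the same per-pixel comparison.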

Optimal Stroke Learning with Policy Gradient Approach for Robotic Table Tennis

Sep 07, 2021

Abstract: Learning to play table tennis is a challenging task for robots, due to the variety of the strokes required. Current advances in deep Reinforcement Learning (RL) have shown potential in learning the optimal strokes. However, the large amount of exploration still limits the applicability when utilizing RL in real scenarios. In this paper, we first propose a realistic simulation environment where several models are built for the ball's dynamics and the robot's kinematics. Instead of training an end-to-end RL model, we decompose it into two stages: the ball's hitting state prediction and consequently learning the racket strokes from it. A novel policy gradient approach with TD3 backbone is proposed for the second stage. In the experiments, we show that the proposed approach significantly outperforms the existing RL methods in simulation. To cross the domain from simulation to reality, we develop an efficient retraining method and test in three real scenarios with a success rate of 98%.

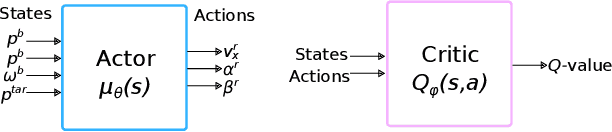

Sample-efficient Reinforcement Learning in Robotic Table Tennis

Nov 11, 2020

Abstract: Reinforcement learning (RL) has recently shown impressive success in various computer games and simulations. Most of these successes rely on learning from a large number of episodes. For typical robotic applications, however, the number of feasible attempts is very limited. In this paper we present a sample-efficient RL algorithm applied to the example of a table tennis robot. In table tennis every stroke is different, of varying placement, speed and spin. Therefore, an accurate return has to be found depending on a high-dimensional continuous state space. To make learning in few trials possible, the method is embedded into our robot system. In this way we can use a one-step environment. The state space depends on the ball at hitting time (position, velocity, spin) and the action is the racket state (orientation, velocity) at hitting. An actor-critic based deterministic policy gradient algorithm was developed for accelerated learning. Our approach shows competitive performance in both simulation and on the real robot in different challenging scenarios. Accurate results are always obtained in fewer than 200 episodes of training. The video presenting our experiments is available at https://youtu.be/uRAtdoL6Wpw.

Spin Detection in Robotic Table Tennis

May 20, 2019

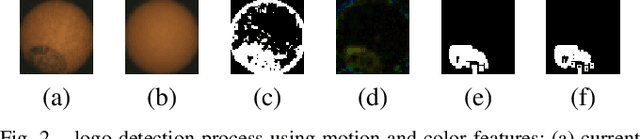

Abstract: In table tennis the rotation (spin) of the ball plays a crucial role. A table tennis match will feature a variety of strokes. Each generates different amounts and types of spin. To develop a robot which can compete with a human player, the robot needs to be able to detect spin, so that it can plan an appropriate return stroke. In this paper we compare three methods for estimating spin. The first two approaches use a high-speed camera that captures the ball in flight at a frame rate of 380 Hz. This camera allows the movement of the circular brand logo printed on the ball to be seen. The first approach uses background difference to determine the position of the logo. In a second alternative, a CNN is trained to predict the orientation of the logo. The third method evaluates the trajectory of the ball and derives the rotation from the effect of the Magnus force. In a demonstration, our robot must respond to different spin types in a real table tennis rally against a human opponent.
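The trajectory-based idea can be illustrated with the standard simplified Magnus model, in which the Magnus acceleration is proportional to the cross product of spin and velocity, $a_M = k_M (\omega \times v)$. The Python sketch below is an illustration under that assumption and is not the paper's implementation: the coefficient `k_magnus` is a placeholder value, and the function names are hypothetical. Inverting the model uses the identity $v \times (\omega \times v) = |v|^2 \omega_\perp$, so only the spin component perpendicular to the velocity can be recovered.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def cross(a: Vec3, b: Vec3) -> Vec3:
    """Cross product of two 3D vectors."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def magnus_acceleration(spin: Vec3, velocity: Vec3, k_magnus: float = 0.006) -> Vec3:
    """Magnus acceleration a = k * (spin x velocity).

    spin is in rad/s, velocity in m/s; k_magnus is a placeholder
    coefficient (assumption), not a value from the paper.
    """
    sx, sy, sz = cross(spin, velocity)
    return (k_magnus * sx, k_magnus * sy, k_magnus * sz)


def spin_from_magnus(accel: Vec3, velocity: Vec3, k_magnus: float = 0.006) -> Vec3:
    """Recover the spin component perpendicular to the velocity from a
    measured Magnus acceleration: spin_perp = (v x a) / (k * |v|^2)."""
    speed_sq = sum(c * c for c in velocity)
    if speed_sq == 0.0:
        raise ValueError("velocity must be non-zero")
    vx, vy, vz = cross(velocity, accel)
    scale = 1.0 / (k_magnus * speed_sq)
    return (vx * scale, vy * scale, vz * scale)


if __name__ == "__main__":
    # Ball flying along +x with topspin about +y (z points up).
    spin = (0.0, 100.0, 0.0)
    velocity = (5.0, 0.0, 0.0)
    accel = magnus_acceleration(spin, velocity)
    print("Magnus acceleration:", accel)  # z component is negative: the ball dips
    print("Recovered spin:", spin_from_magnus(accel, velocity))
```

In practice the Magnus acceleration would itself have to be separated from gravity and drag in the measured trajectory, which this sketch leaves out.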
