Jonas Krumme

A Two-Stage System for Layout-Controlled Image Generation using Large Language Models and Diffusion Models

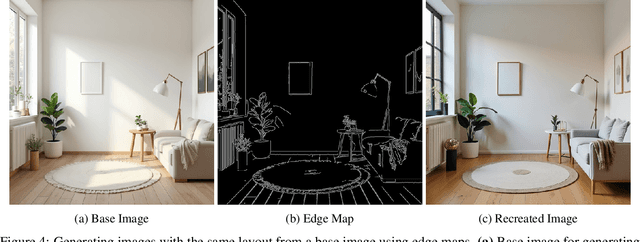

Nov 11, 2025

Abstract: Text-to-image diffusion models exhibit remarkable generative capabilities, but lack precise control over object counts and spatial arrangements. This work introduces a two-stage system to address these compositional limitations. The first stage employs a Large Language Model (LLM) to generate a structured layout from a list of objects. The second stage uses a layout-conditioned diffusion model to synthesize a photorealistic image adhering to this layout. We find that task decomposition is critical for LLM-based spatial planning; by simplifying the initial generation to core objects and completing the layout with rule-based insertion, we improve object recall from 57.2% to 99.9% for complex scenes. For image synthesis, we compare two leading conditioning methods: ControlNet and GLIGEN. After domain-specific finetuning on table-setting datasets, we identify a key trade-off: ControlNet preserves text-based stylistic control but suffers from object hallucination, while GLIGEN provides superior layout fidelity at the cost of reduced prompt-based controllability. Our end-to-end system successfully generates images with specified object counts and plausible spatial arrangements, demonstrating the viability of a decoupled approach for compositionally controlled synthesis.
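The decomposition described in this abstract (a layout containing only core objects, completed by rule-based insertion, then scored by object recall) can be illustrated with a minimal sketch. Everything below is an assumption made for illustration and is not taken from the paper: the `Box` type, the `RULES` table with its offsets, the choice of plates as the core objects, and the count-based definition of recall.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned box in normalized image coordinates (hypothetical format)."""
    label: str
    x: float  # left edge
    y: float  # top edge
    w: float  # width
    h: float  # height


# Hypothetical rules: dependent label -> (anchor label, x offset, y offset),
# with offsets expressed in units of the anchor's width and height.
RULES = {
    "fork": ("plate", -0.6, 0.0),
    "knife": ("plate", 1.1, 0.0),
}


def complete_layout(core, requested):
    """Add missing dependent objects next to their anchor objects."""
    layout = list(core)
    have = Counter(box.label for box in layout)
    for label, (anchor_label, dx, dy) in RULES.items():
        anchors = [box for box in core if box.label == anchor_label]
        missing = requested.count(label) - have[label]
        # At most one dependent per anchor; any surplus stays unplaced.
        for anchor in anchors[:max(missing, 0)]:
            layout.append(Box(label,
                              anchor.x + dx * anchor.w,
                              anchor.y + dy * anchor.h,
                              anchor.w * 0.3,
                              anchor.h))
    return layout


def object_recall(layout, requested):
    """Fraction of requested object instances that appear in the layout."""
    want = Counter(requested)
    got = Counter(box.label for box in layout)
    matched = sum(min(n, got[label]) for label, n in want.items())
    return matched / sum(want.values())


requested = ["plate", "plate", "fork", "fork", "knife", "knife"]
core = [Box("plate", 0.2, 0.4, 0.2, 0.2), Box("plate", 0.6, 0.4, 0.2, 0.2)]
full = complete_layout(core, requested)
print(object_recall(core, requested), object_recall(full, requested))
```

In this toy setup the core-only layout covers two of the six requested objects, and the rule-based pass places the remaining four. The recall figures reported in the abstract come from the paper's own evaluation, not from a sketch like this one.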

World Knowledge from AI Image Generation for Robot Control

Mar 20, 2025

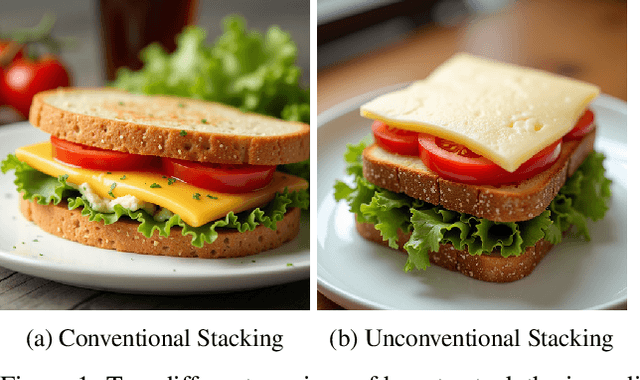

Abstract: When interacting with the world, robots face a number of difficult questions. They must make decisions on under-specified tasks, often without clearly defined right and wrong answers. Humans, on the other hand, can often rely on their knowledge and experience to fill in the gaps. For example, the simple task of organizing newly bought produce in the fridge involves deciding where to put each item individually and how to arrange the items together meaningfully, e.g., by putting related things together, all while there is no clear right or wrong way to accomplish the task. We could explicitly encode all of this information into the robot's knowledge base, but this can quickly become overwhelming, considering the number of potential tasks and circumstances the robot could encounter. However, images of the real world often implicitly encode answers to such questions and can show which configurations of objects are meaningful or are commonly used by humans. An image of a full fridge can give a lot of information about how things are usually arranged in relation to each other and to the fridge as a whole. Modern generative systems are capable of generating plausible images of the real world and can be conditioned on the environment in which the robot operates. Here we investigate the idea of using the implicit world knowledge of modern generative AI systems, as reflected in their ability to generate convincing images of the real world, to solve under-specified tasks.

Robot Pouring: Identifying Causes of Spillage and Selecting Alternative Action Parameters Using Probabilistic Actual Causation

Feb 13, 2025

Abstract: In everyday life, we perform tasks (e.g., cooking or cleaning) that involve a large variety of objects and goals. When confronted with an unexpected or unwanted outcome, we take corrective actions and try again until achieving the desired result. The reasoning performed to identify a cause of the observed outcome and to select an appropriate corrective action is a crucial aspect of human reasoning for successful task execution. Central to this reasoning is the assumption that a factor is responsible for producing the observed outcome. In this paper, we investigate the use of probabilistic actual causation to determine whether a factor is the cause of an observed undesired outcome. Furthermore, we show how the actual causation probabilities can be used to find alternative actions to change the outcome. We apply the probabilistic actual causation analysis to a robot pouring task. When spillage occurs, the analysis indicates whether a task parameter is the cause and how it should be changed to avoid spillage. The analysis requires a causal graph of the task and the corresponding conditional probability distributions. To fulfill these requirements, we perform a complete causal modeling procedure (i.e., task analysis, definition of variables, determination of the causal graph structure, and estimation of conditional probability distributions) using data from a realistic simulation of the robot pouring task, covering a large combinatorial space of task parameters. Based on the results, we discuss the implications of the variables' representation and how the alternative actions suggested by the actual causation analysis would compare to the alternative solutions proposed by a human observer. The practical use of the analysis of probabilistic actual causation to select alternative action parameters is demonstrated.
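As a rough illustration of estimating conditional probabilities from simulated trials and using them to pick an alternative parameter value, here is a deliberately simplified sketch. It does not implement probabilistic actual causation as used in the paper: it only compares empirical spill frequencies for a single parameter and ignores the causal graph. The parameter name `tilt_speed`, its values, and the trial data are all hypothetical.

```python
from collections import defaultdict


def spill_probabilities(trials, parameter):
    """Empirical estimate of P(spill | parameter = value) for each value."""
    counts = defaultdict(lambda: [0, 0])  # value -> [spills, total]
    for trial in trials:
        entry = counts[trial[parameter]]
        entry[0] += int(trial["spill"])
        entry[1] += 1
    return {value: spills / total for value, (spills, total) in counts.items()}


def suggest_alternative(trials, parameter, observed_value):
    """Return a value with strictly lower estimated spill probability, or None."""
    probs = spill_probabilities(trials, parameter)
    best = min(probs, key=probs.get)
    if probs[best] < probs.get(observed_value, 1.0):
        return best
    return None


# Hypothetical simulation log: 4 fast pours (3 spills), 4 slow pours (1 spill).
trials = (
    [{"tilt_speed": "fast", "spill": True}] * 3
    + [{"tilt_speed": "fast", "spill": False}]
    + [{"tilt_speed": "slow", "spill": True}]
    + [{"tilt_speed": "slow", "spill": False}] * 3
)
probs = spill_probabilities(trials, "tilt_speed")
alternative = suggest_alternative(trials, "tilt_speed", "fast")
print(probs, alternative)
```

A frequency comparison like this matches an interventional estimate only when the parameter is set independently of everything else that affects spillage, which is one reason the paper builds a full causal model first.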
