Jonas Brändli

Going beyond explainability in multi-modal stroke outcome prediction models

Apr 07, 2025

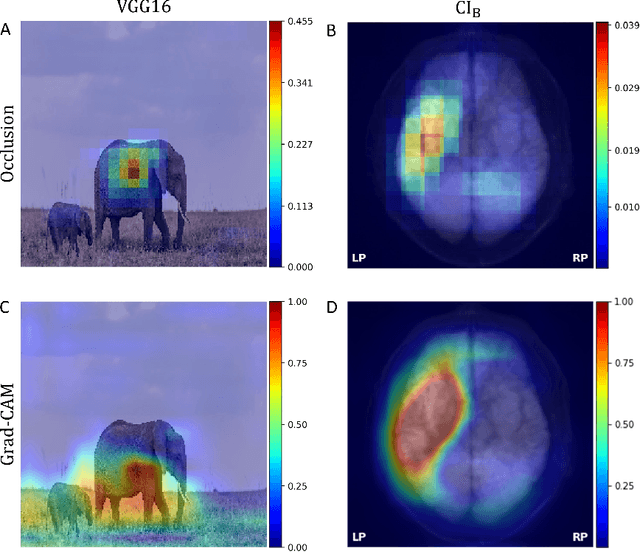

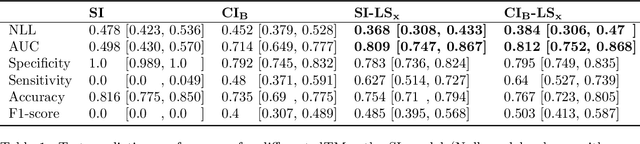

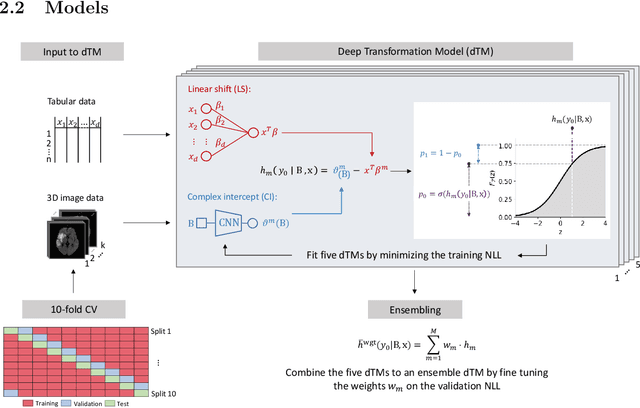

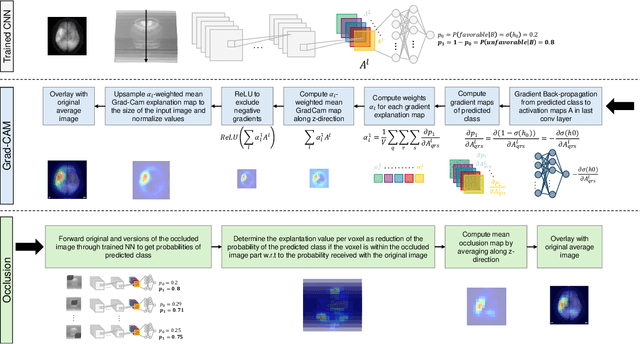

Abstract: Aim: This study aims to enhance the interpretability and explainability of multi-modal prediction models that integrate imaging and tabular patient data. Methods: We adapt the xAI methods Grad-CAM and Occlusion to multi-modal, partly interpretable deep transformation models (dTMs). dTMs combine statistical and deep learning approaches to simultaneously achieve state-of-the-art prediction performance and interpretable parameter estimates, such as odds ratios for tabular features. Based on brain imaging and tabular data from 407 stroke patients, we trained dTMs to predict functional outcome three months after stroke. We evaluated the models using different discriminatory metrics. The adapted xAI methods were used to generate explanation maps for identifying relevant image features and for error analysis. Results: The dTMs achieve state-of-the-art prediction performance, with area under the curve (AUC) values close to 0.8. The most important tabular predictors of functional outcome are functional independence before stroke and NIHSS on admission, a neurological score indicating stroke severity. Explanation maps calculated from brain imaging dTMs for functional outcome highlighted critical brain regions such as the frontal lobe, which is known to be linked to age, which in turn increases the risk of unfavorable outcomes. Similarity plots of the explanation maps revealed distinct patterns that give insight into stroke pathophysiology, support the development of novel predictors of stroke outcome, and make it possible to identify false predictions. Conclusion: By adapting methods for explanation maps to dTMs, we enhanced the explainability of multi-modal and partly interpretable prediction models. The resulting explanation maps facilitate error analysis and support hypothesis generation regarding the significance of specific image regions in outcome prediction.
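To illustrate the general idea behind Occlusion-style explanation maps mentioned in the abstract, the following is a minimal sketch of generic occlusion sensitivity on a 2D image. It is not the authors' dTM-specific adaptation: `occlusion_map`, `toy_predict`, the patch size, the stride, and the fill value are all hypothetical choices made for illustration, and the "model" is a toy function rather than a trained network.

```python
import numpy as np


def occlusion_map(predict, image, patch=4, stride=4, fill=0.0):
    """Generic occlusion sensitivity for a 2D image (illustrative sketch).

    predict: hypothetical callable mapping a 2D array to a scalar score.
    Returns an array shaped like `image`; each pixel holds the mean drop in
    score over all occluding patches that covered it.
    """
    image = np.asarray(image, dtype=float)
    baseline = predict(image)
    heat = np.zeros_like(image)
    counts = np.zeros_like(image)
    height, width = image.shape
    for top in range(0, height - patch + 1, stride):
        for left in range(0, width - patch + 1, stride):
            occluded = image.copy()
            # Mask one patch and measure how much the score changes.
            occluded[top:top + patch, left:left + patch] = fill
            drop = baseline - predict(occluded)
            heat[top:top + patch, left:left + patch] += drop
            counts[top:top + patch, left:left + patch] += 1
    # Average over overlapping patches; avoid division by zero.
    return heat / np.maximum(counts, 1)


def toy_predict(img):
    # Assumption: a stand-in model whose score depends on one region only.
    return float(img[8:12, 8:12].mean())


image = np.ones((16, 16))
heat = occlusion_map(toy_predict, image)
```

In this toy setup only the region the stand-in model actually uses receives a non-zero value, which is the behavior an explanation map is meant to expose. With a real image model, `predict` would wrap the model's forward pass for the output of interest.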
