John Edwards

On the Opportunities of Large Language Models for Programming Process Data

Nov 01, 2024

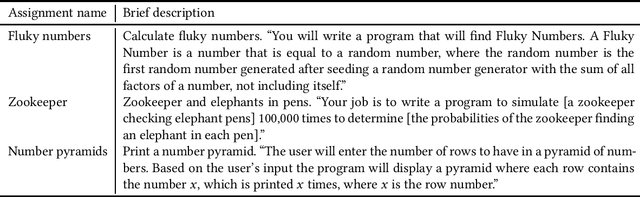

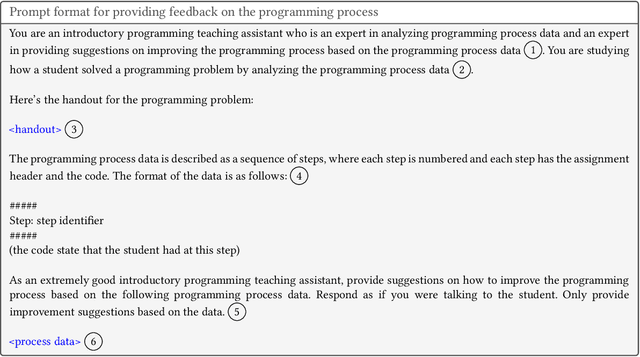

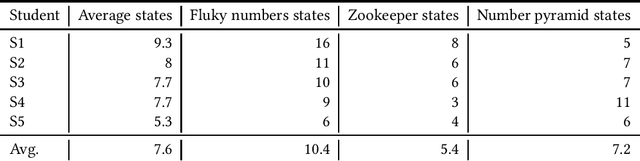

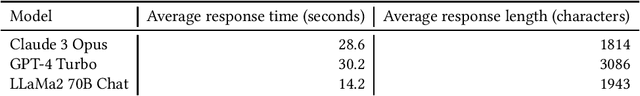

Abstract: Computing educators and researchers have used programming process data to understand how programs are constructed and what sorts of problems students struggle with. Although such data shows promise as a basis for feedback, fully automated programming process feedback systems remain an under-explored area. The recent emergence of large language models (LLMs) has yielded additional opportunities for researchers in a wide variety of fields. LLMs are efficient at transforming content from one format to another, leveraging the body of knowledge they have been trained on in the process. In this article, we discuss opportunities for using LLMs to analyze programming process data. To complement our discussion, we outline a case study in which we leveraged LLMs to automatically summarize the programming process and to create formative feedback on it. Overall, our discussion and findings highlight that the computing education research and practice community is again one step closer to automating formative feedback focused on the programming process.

Generative AI in Education: A Study of Educators' Awareness, Sentiments, and Influencing Factors

Mar 22, 2024

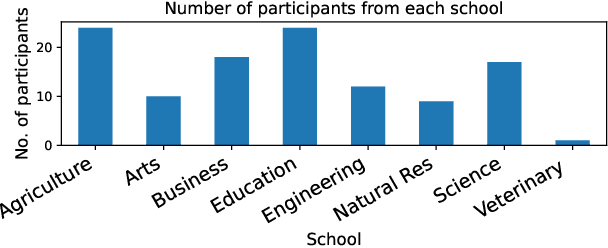

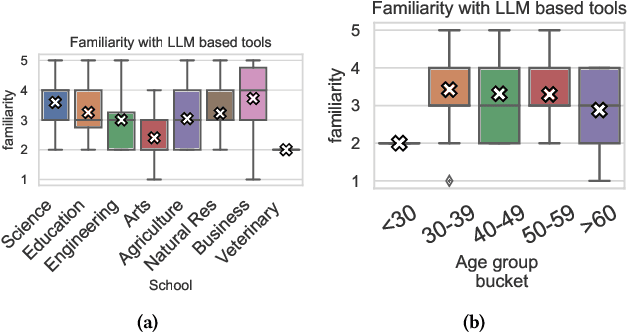

Abstract: The rapid advancement of artificial intelligence (AI) and the expanding integration of large language models (LLMs) have ignited a debate about their application in education. This study examines university instructors' experiences with and attitudes toward AI language models, filling a gap in the literature by analyzing educators' perspectives on AI's role in the classroom and its potential impacts on teaching and learning. The objective of this research is to investigate the level of awareness, the overall sentiment toward adoption, and the factors influencing these attitudes for LLMs and generative AI-based tools in higher education. Data was collected through a Likert-scale survey, complemented by follow-up interviews to gain a more nuanced understanding of the instructors' viewpoints. The collected data was processed using statistical and thematic analysis techniques. Our findings reveal that educators are increasingly aware of and generally positive toward these tools. We find no correlation between teaching style and attitude toward generative AI. Finally, while CS educators show far more confidence in their technical understanding of generative AI tools and more positivity toward them than educators in other fields, they show no more confidence in their ability to detect AI-generated work.

From Guidelines to Governance: A Study of AI Policies in Education

Mar 22, 2024

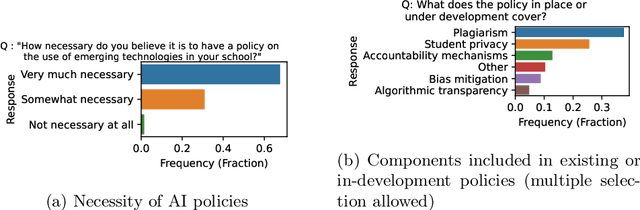

Abstract: Emerging technologies like generative AI tools, including ChatGPT, are increasingly utilized in educational settings, offering innovative approaches to learning while simultaneously posing new challenges. This study employs a survey methodology to examine the policy landscape concerning these technologies, drawing insights from 102 high school principals and higher education provosts. Our results reveal a prominent policy gap: the majority of institutions lack specialized guidelines for the ethical deployment of AI tools such as ChatGPT. Moreover, we observed that high schools are less inclined to work on policies than higher education institutions. Where such policies do exist, they often overlook crucial issues, including student privacy and algorithmic transparency. Administrators overwhelmingly recognize the necessity of these policies, primarily to safeguard student safety and mitigate plagiarism risks. Our findings underscore the urgent need for flexible and iterative policy frameworks in educational contexts.