Jinxuan Sun

Progressively Unfreezing Perceptual GAN

Jun 18, 2020

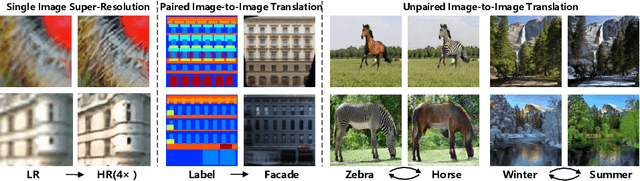

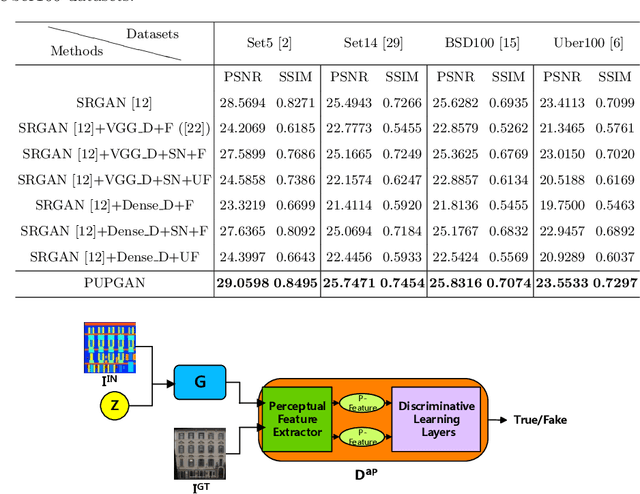

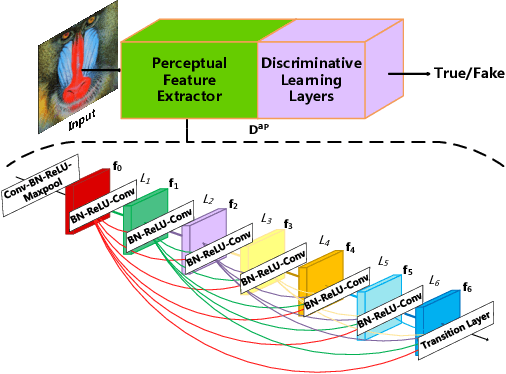

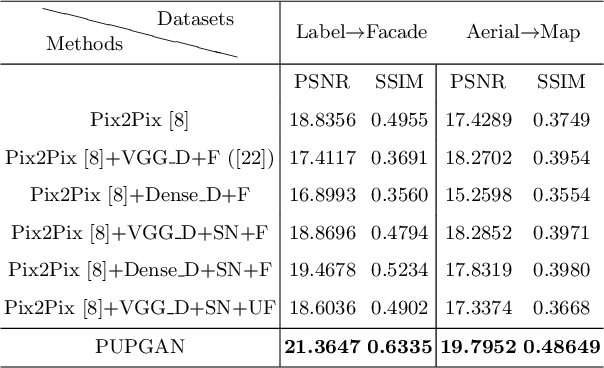

Abstract:Generative adversarial networks (GANs) are widely used in image generation tasks, yet the generated images are usually lack of texture details. In this paper, we propose a general framework, called Progressively Unfreezing Perceptual GAN (PUPGAN), which can generate images with fine texture details. Particularly, we propose an adaptive perceptual discriminator with a pre-trained perceptual feature extractor, which can efficiently measure the discrepancy between multi-level features of the generated and real images. In addition, we propose a progressively unfreezing scheme for the adaptive perceptual discriminator, which ensures a smooth transfer process from a large scale classification task to a specified image generation task. The qualitative and quantitative experiments with comparison to the classical baselines on three image generation tasks, i.e. single image super-resolution, paired image-to-image translation and unpaired image-to-image translation demonstrate the superiority of PUPGAN over the compared approaches.

Student's t-Generative Adversarial Networks

Nov 06, 2018

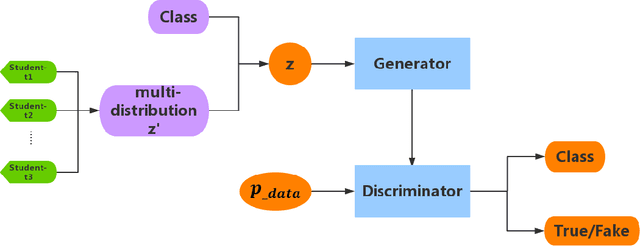

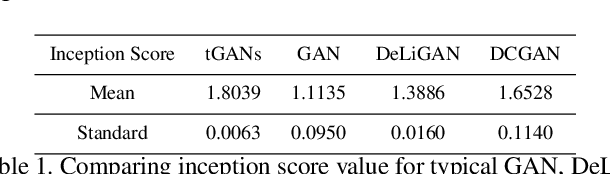

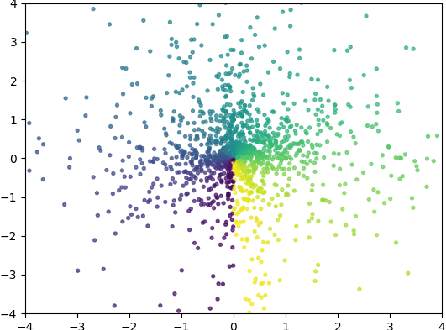

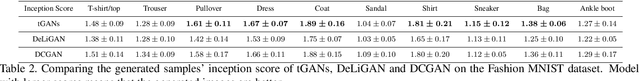

Abstract:Generative Adversarial Networks (GANs) have a great performance in image generation, but they need a large scale of data to train the entire framework, and often result in nonsensical results. We propose a new method referring to conditional GAN, which equipments the latent noise with mixture of Student's t-distribution with attention mechanism in addition to class information. Student's t-distribution has long tails that can provide more diversity to the latent noise. Meanwhile, the discriminator in our model implements two tasks simultaneously, judging whether the images come from the true data distribution, and identifying the class of each generated images. The parameters of the mixture model can be learned along with those of GANs. Moreover, we mathematically prove that any multivariate Student's t-distribution can be obtained by a linear transformation of a normal multivariate Student's t-distribution. Experiments comparing the proposed method with typical GAN, DeliGAN and DCGAN indicate that, our method has a great performance on generating diverse and legible objects with limited data.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge