Jingyao Zhou

Dialogue State Tracking with Multi-Level Fusion of Predicted Dialogue States and Conversations

Jul 12, 2021

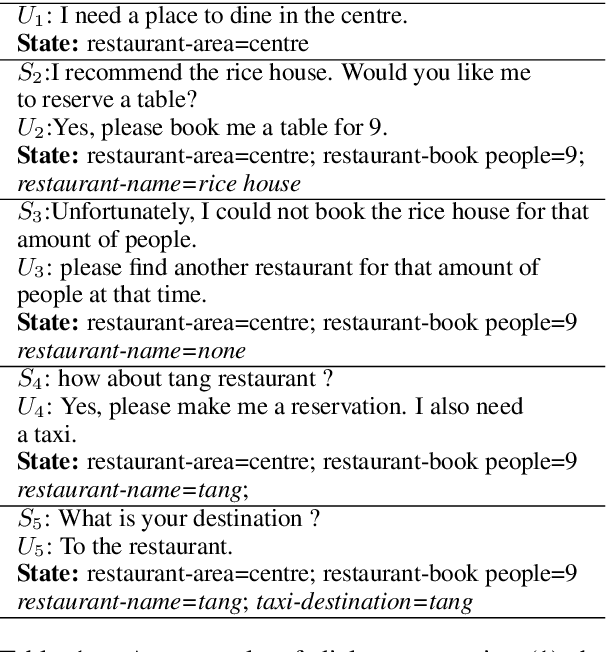

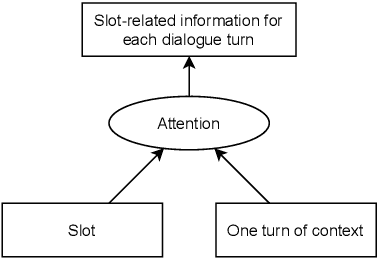

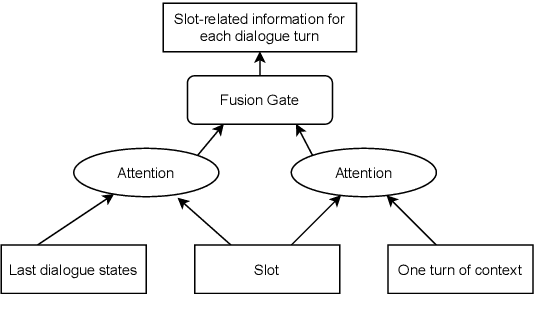

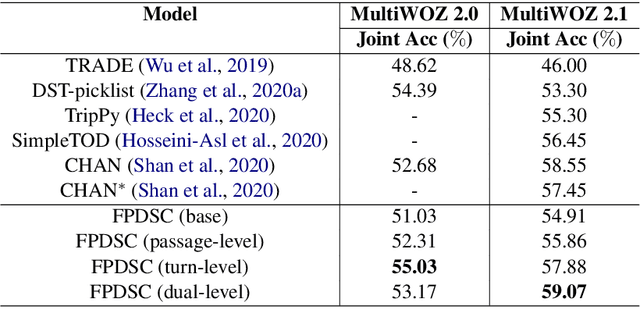

Abstract: Most recently proposed approaches in dialogue state tracking (DST) leverage the context and the last dialogue states to track the current dialogue states, which are often slot-value pairs. Although the context contains the complete dialogue information, that information is usually indirect and may even require reasoning to obtain. The information in the last predicted dialogue states is direct, but when a prediction error occurs, the dialogue information from this source will be incomplete or erroneous. In this paper, we propose the Dialogue State Tracking with Multi-Level Fusion of Predicted Dialogue States and Conversations network (FPDSC). This model extracts the information of each dialogue turn by modeling interactions among the turn utterance, the corresponding last dialogue states, and the dialogue slots. The representations of the dialogue turns are then aggregated by a hierarchical structure to form the passage information, which is used in the current turn of DST. Experimental results validate the effectiveness of the fusion network, which achieves 55.03% and 59.07% joint accuracy on the MultiWOZ 2.0 and MultiWOZ 2.1 datasets, respectively, reaching state-of-the-art performance. Furthermore, we conduct deleted-value and related-slot experiments on MultiWOZ 2.1 to evaluate our model.
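Joint accuracy (often called joint goal accuracy) counts a turn as correct only if the entire predicted dialogue state matches the reference state. As a rough illustration, the sketch below computes it from per-turn states represented as slot-to-value dictionaries. The representation, the function names `normalize` and `joint_accuracy`, and the choice to treat a `"none"` value as an absent slot are assumptions for illustration; this is not the FPDSC code or the official MultiWOZ evaluation script.

```python
from typing import Dict, List

# Assumption: a dialogue state is a dict from slot name to value,
# e.g. {"hotel-area": "north", "hotel-stars": "4"}.
DialogueState = Dict[str, str]


def normalize(state: DialogueState) -> DialogueState:
    """Drop slots whose value is "none" (assumed to mean 'not mentioned')."""
    return {slot: value for slot, value in state.items() if value != "none"}


def joint_accuracy(predicted: List[DialogueState], gold: List[DialogueState]) -> float:
    """Fraction of turns whose predicted state equals the gold state exactly.

    One wrong, missing, or extra slot-value pair makes the whole turn wrong.
    """
    if len(predicted) != len(gold):
        raise ValueError("predicted and gold must cover the same turns")
    if not gold:
        return 0.0
    correct = sum(
        1 for p, g in zip(predicted, gold) if normalize(p) == normalize(g)
    )
    return correct / len(gold)


if __name__ == "__main__":
    gold = [
        {"hotel-area": "north"},
        {"hotel-area": "north", "hotel-stars": "4"},
    ]
    predicted = [
        {"hotel-area": "north", "hotel-parking": "none"},
        {"hotel-area": "north"},  # misses hotel-stars, so this turn is wrong
    ]
    print(joint_accuracy(predicted, gold))  # 0.5
```

Because the metric is all-or-nothing per turn, an error carried over from an earlier turn's predicted state keeps every later turn wrong until it is corrected, which is the failure mode the abstract attributes to relying on the last predicted dialogue states.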

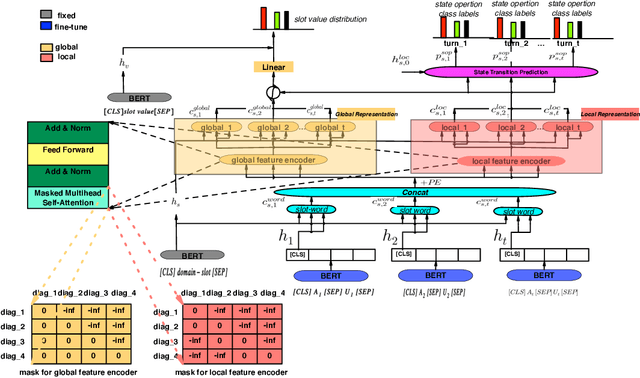

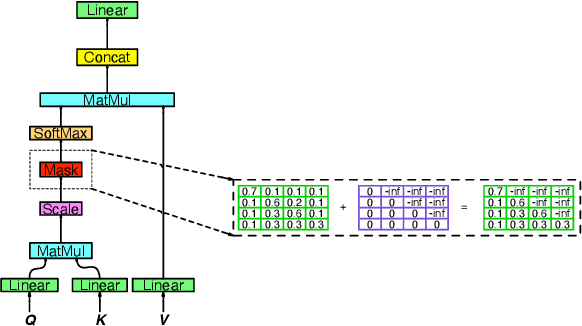

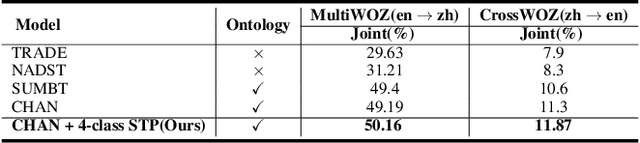

Efficient Dialogue State Tracking by Masked Hierarchical Transformer

Jun 28, 2021

Abstract: This paper describes our approach to DSTC 9 Track 2: Cross-lingual Multi-domain Dialog State Tracking. The goal of the task is to build a cross-lingual dialog state tracker with a training set in a resource-rich language and a test set in a low-resource language. We formulate a method for jointly learning a slot operation classification task and a state tracking task. Furthermore, we design a novel mask mechanism for fusing contextual information about the dialogue. The results show that the proposed model achieves excellent performance on DSTC Challenge II, with joint accuracies of 62.37% and 23.96% on the MultiWOZ (en-zh) and CrossWOZ (zh-en) datasets, respectively.
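The abstract does not specify how its mask mechanism works. As a generic illustration of masking in a hierarchical transformer (an assumption, not the authors' design), the sketch below builds a token-level mask that restricts attention to tokens in the same dialogue turn, a turn-level causal mask that lets each turn attend only to itself and earlier turns, and a masked softmax that gives disallowed positions zero weight. The functions `intra_turn_mask`, `causal_turn_mask`, and `masked_softmax` are hypothetical names introduced here.

```python
import math
from typing import List

# Hypothetical helpers illustrating hierarchical masking; not the paper's mask.


def intra_turn_mask(turn_ids: List[int]) -> List[List[bool]]:
    """Token-level mask: entry [i][j] is True if token i may attend to token j.

    Tokens see only tokens from the same dialogue turn, so the lower level of
    the encoder produces one contextual representation per turn.
    """
    return [[ti == tj for tj in turn_ids] for ti in turn_ids]


def causal_turn_mask(num_turns: int) -> List[List[bool]]:
    """Turn-level mask: turn a may attend to turn b only if b <= a, so the
    upper level fuses each turn with its dialogue history but not the future."""
    return [[b <= a for b in range(num_turns)] for a in range(num_turns)]


def masked_softmax(scores: List[float], allowed: List[bool]) -> List[float]:
    """Softmax over attention scores where disallowed positions get weight 0."""
    kept = [s for s, ok in zip(scores, allowed) if ok]
    if not kept:
        raise ValueError("at least one position must be allowed")
    peak = max(kept)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) if ok else 0.0 for s, ok in zip(scores, allowed)]
    total = sum(exps)
    return [e / total for e in exps]


if __name__ == "__main__":
    turn_ids = [0, 0, 1]  # two tokens from turn 0, one from turn 1
    mask = intra_turn_mask(turn_ids)
    # Token 0 attends to token 1 (same turn) but not token 2 (other turn).
    print(masked_softmax([1.0, 1.0, 5.0], mask[0]))  # [0.5, 0.5, 0.0]
```

Splitting the masks this way keeps each level cheap: the token-level mask confines attention to one turn at a time, and only the much shorter sequence of turn representations is mixed across the dialogue.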