Jingjie Yi

Contextual Information and Commonsense Based Prompt for Emotion Recognition in Conversation

Jul 27, 2022

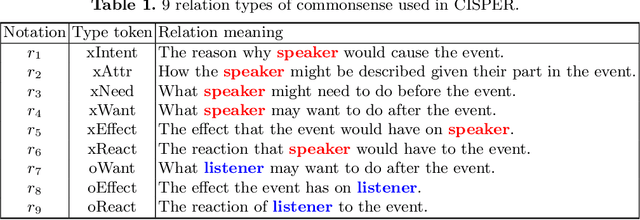

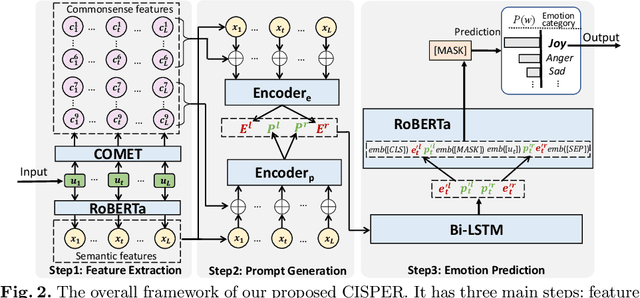

Abstract:Emotion recognition in conversation (ERC) aims to detect the emotion for each utterance in a given conversation. The newly proposed ERC models have leveraged pre-trained language models (PLMs) with the paradigm of pre-training and fine-tuning to obtain good performance. However, these models seldom exploit PLMs' advantages thoroughly, and perform poorly for the conversations lacking explicit emotional expressions. In order to fully leverage the latent knowledge related to the emotional expressions in utterances, we propose a novel ERC model CISPER with the new paradigm of prompt and language model (LM) tuning. Specifically, CISPER is equipped with the prompt blending the contextual information and commonsense related to the interlocutor's utterances, to achieve ERC more effectively. Our extensive experiments demonstrate CISPER's superior performance over the state-of-the-art ERC models, and the effectiveness of leveraging these two kinds of significant prompt information for performance gains. To reproduce our experimental results conveniently, CISPER's sourcecode and the datasets have been shared at https://github.com/DeqingYang/CISPER.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge