Jigyasa Singh Katrolia

Achieving RGB-D level Segmentation Performance from a Single ToF Camera

Jun 30, 2023

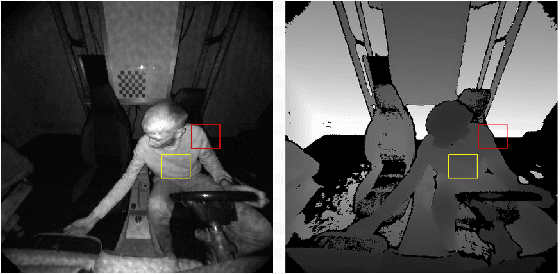

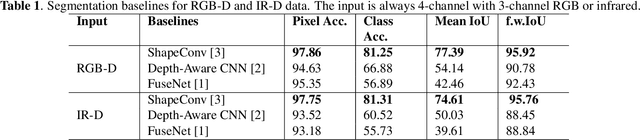

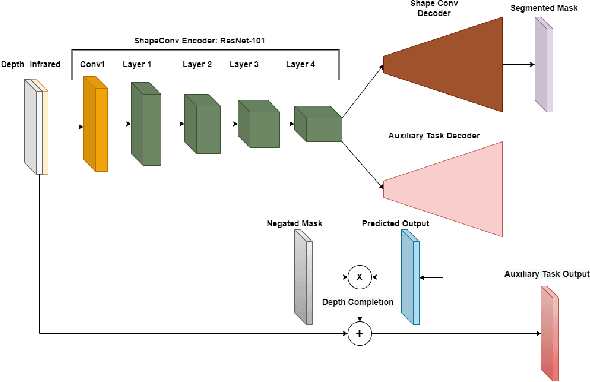

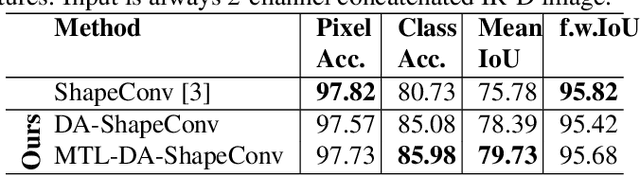

Abstract:Depth is a very important modality in computer vision, typically used as complementary information to RGB, provided by RGB-D cameras. In this work, we show that it is possible to obtain the same level of accuracy as RGB-D cameras on a semantic segmentation task using infrared (IR) and depth images from a single Time-of-Flight (ToF) camera. In order to fuse the IR and depth modalities of the ToF camera, we introduce a method utilizing depth-specific convolutions in a multi-task learning framework. In our evaluation on an in-car segmentation dataset, we demonstrate the competitiveness of our method against the more costly RGB-D approaches.

TICaM: A Time-of-flight In-car Cabin Monitoring Dataset

Mar 23, 2021

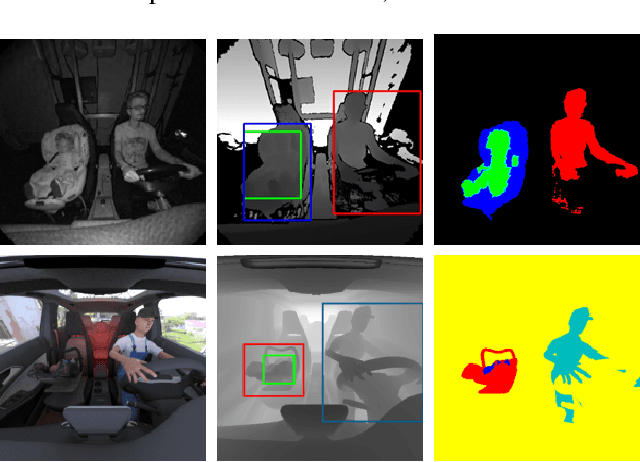

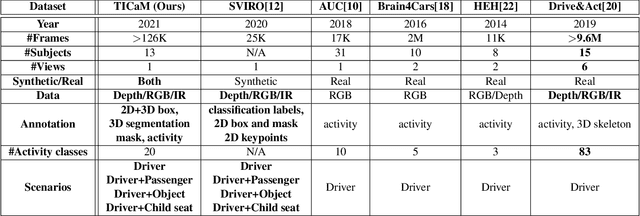

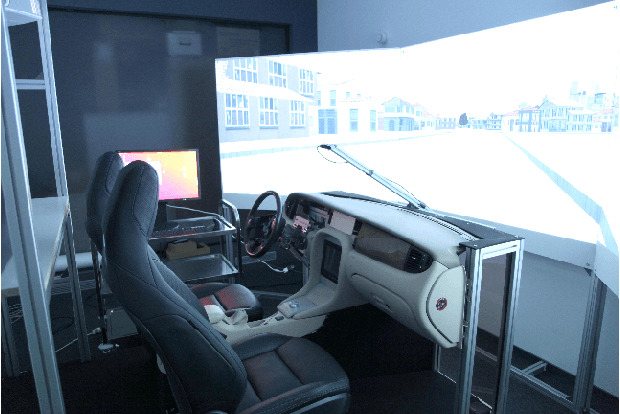

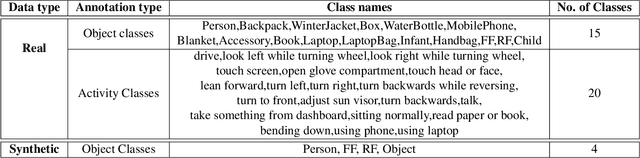

Abstract:We present TICaM, a Time-of-flight In-car Cabin Monitoring dataset for vehicle interior monitoring using a single wide-angle depth camera. Our dataset addresses the deficiencies of currently available in-car cabin datasets in terms of the ambit of labeled classes, recorded scenarios and provided annotations; all at the same time. We record an exhaustive list of actions performed while driving and provide for them multi-modal labeled images (depth, RGB and IR), with complete annotations for 2D and 3D object detection, instance and semantic segmentation as well as activity annotations for RGB frames. Additional to real recordings, we provide a synthetic dataset of in-car cabin images with same multi-modality of images and annotations, providing a unique and extremely beneficial combination of synthetic and real data for effectively training cabin monitoring systems and evaluating domain adaptation approaches. The dataset is available at https://vizta-tof.kl.dfki.de/.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge