Jianxin Wei

DPIS: An Enhanced Mechanism for Differentially Private SGD with Importance Sampling

Oct 19, 2022

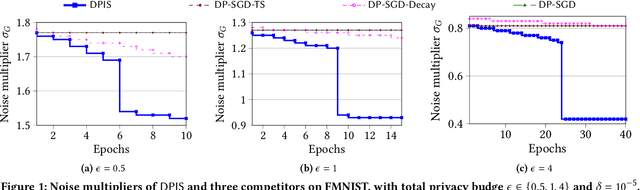

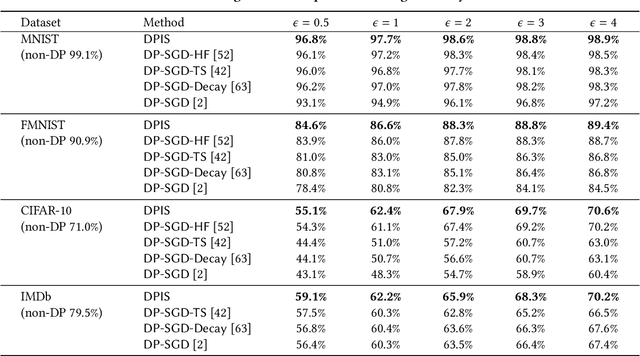

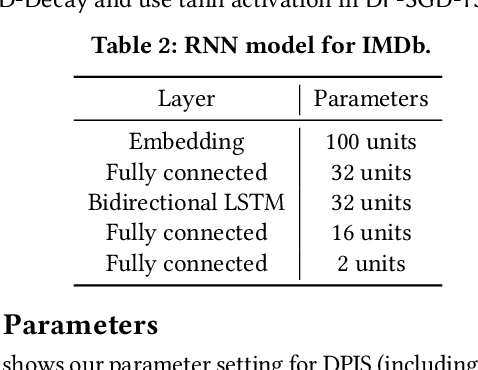

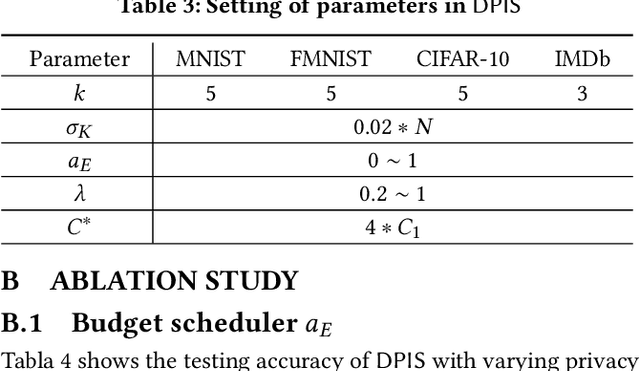

Abstract:Nowadays, differential privacy (DP) has become a well-accepted standard for privacy protection, and deep neural networks (DNN) have been immensely successful in machine learning. The combination of these two techniques, i.e., deep learning with differential privacy, promises the privacy-preserving release of high-utility models trained with sensitive data such as medical records. A classic mechanism for this purpose is DP-SGD, which is a differentially private version of the stochastic gradient descent (SGD) optimizer commonly used for DNN training. Subsequent approaches have improved various aspects of the model training process, including noise decay schedule, model architecture, feature engineering, and hyperparameter tuning. However, the core mechanism for enforcing DP in the SGD optimizer remains unchanged ever since the original DP-SGD algorithm, which has increasingly become a fundamental barrier limiting the performance of DP-compliant machine learning solutions. Motivated by this, we propose DPIS, a novel mechanism for differentially private SGD training that can be used as a drop-in replacement of the core optimizer of DP-SGD, with consistent and significant accuracy gains over the latter. The main idea is to employ importance sampling (IS) in each SGD iteration for mini-batch selection, which reduces both sampling variance and the amount of random noise injected to the gradients that is required to satisfy DP. Integrating IS into the complex mathematical machinery of DP-SGD is highly non-trivial. DPIS addresses the challenge through novel mechanism designs, fine-grained privacy analysis, efficiency enhancements, and an adaptive gradient clipping optimization. Extensive experiments on four benchmark datasets, namely MNIST, FMNIST, CIFAR-10 and IMDb, demonstrate the superior effectiveness of DPIS over existing solutions for deep learning with differential privacy.

* A short version of this paper will appear in CCS 2022

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge