Jeremy Wayland

No Metric to Rule Them All: Toward Principled Evaluations of Graph-Learning Datasets

Feb 04, 2025

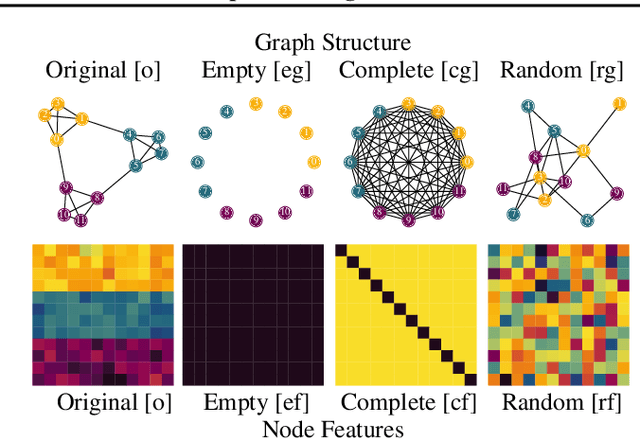

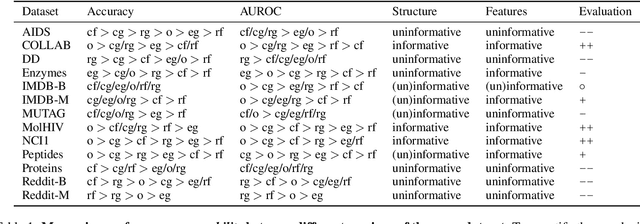

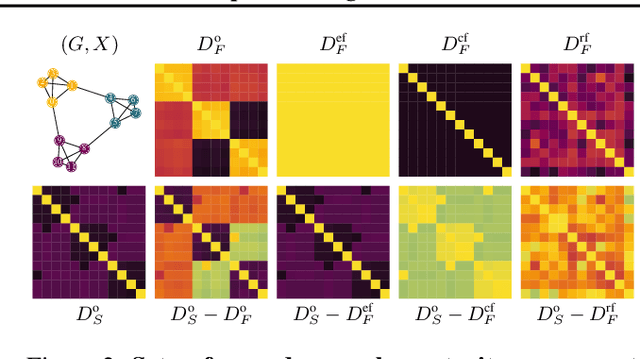

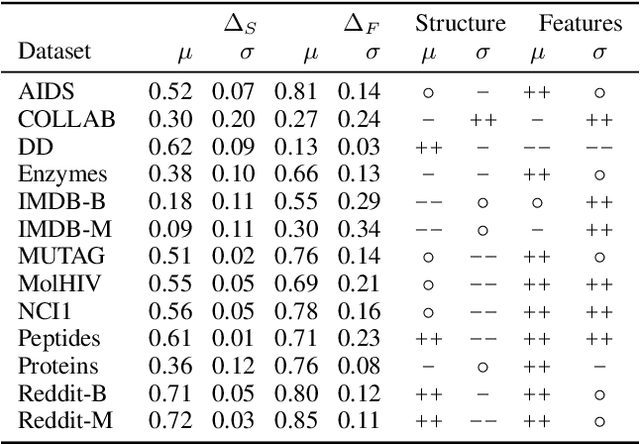

Abstract:Benchmark datasets have proved pivotal to the success of graph learning, and good benchmark datasets are crucial to guide the development of the field. Recent research has highlighted problems with graph-learning datasets and benchmarking practices -- revealing, for example, that methods which ignore the graph structure can outperform graph-based approaches on popular benchmark datasets. Such findings raise two questions: (1) What makes a good graph-learning dataset, and (2) how can we evaluate dataset quality in graph learning? Our work addresses these questions. As the classic evaluation setup uses datasets to evaluate models, it does not apply to dataset evaluation. Hence, we start from first principles. Observing that graph-learning datasets uniquely combine two modes -- the graph structure and the node features -- , we introduce RINGS, a flexible and extensible mode-perturbation framework to assess the quality of graph-learning datasets based on dataset ablations -- i.e., by quantifying differences between the original dataset and its perturbed representations. Within this framework, we propose two measures -- performance separability and mode complementarity -- as evaluation tools, each assessing, from a distinct angle, the capacity of a graph dataset to benchmark the power and efficacy of graph-learning methods. We demonstrate the utility of our framework for graph-learning dataset evaluation in an extensive set of experiments and derive actionable recommendations for improving the evaluation of graph-learning methods. Our work opens new research directions in data-centric graph learning, and it constitutes a first step toward the systematic evaluation of evaluations.

Characterizing Physician Referral Networks with Ricci Curvature

Aug 27, 2024

Abstract:Identifying (a) systemic barriers to quality healthcare access and (b) key indicators of care efficacy in the United States remains a significant challenge. To improve our understanding of regional disparities in care delivery, we introduce a novel application of curvature, a geometrical-topological property of networks, to Physician Referral Networks. Our initial findings reveal that Forman-Ricci and Ollivier-Ricci curvature measures, which are known for their expressive power in characterizing network structure, offer promising indicators for detecting variations in healthcare efficacy while capturing a range of significant regional demographic features. We also present APPARENT, an open-source tool that leverages Ricci curvature and other network features to examine correlations between regional Physician Referral Networks structure, local census data, healthcare effectiveness, and patient outcomes.

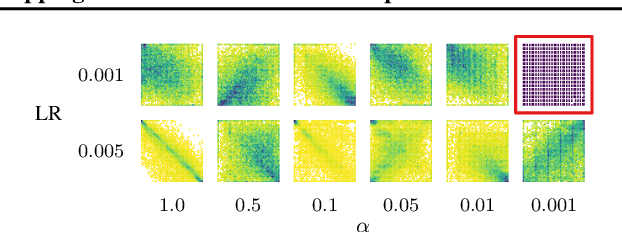

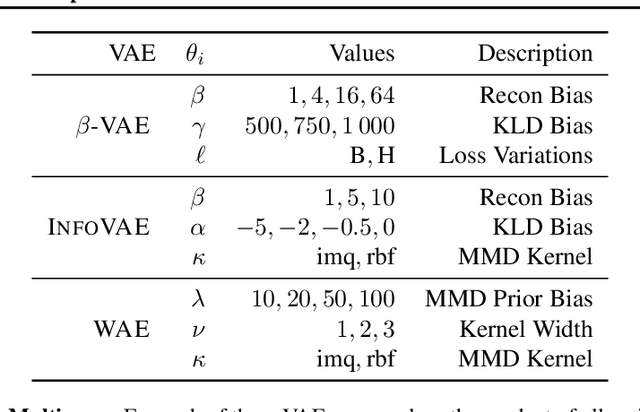

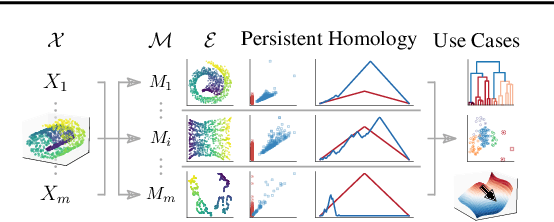

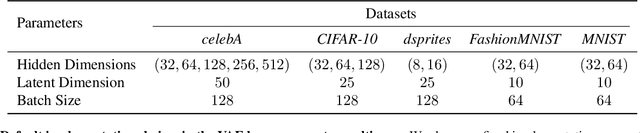

Mapping the Multiverse of Latent Representations

Feb 02, 2024

Abstract:Echoing recent calls to counter reliability and robustness concerns in machine learning via multiverse analysis, we present PRESTO, a principled framework for mapping the multiverse of machine-learning models that rely on latent representations. Although such models enjoy widespread adoption, the variability in their embeddings remains poorly understood, resulting in unnecessary complexity and untrustworthy representations. Our framework uses persistent homology to characterize the latent spaces arising from different combinations of diverse machine-learning methods, (hyper)parameter configurations, and datasets, allowing us to measure their pairwise (dis)similarity and statistically reason about their distributions. As we demonstrate both theoretically and empirically, our pipeline preserves desirable properties of collections of latent representations, and it can be leveraged to perform sensitivity analysis, detect anomalous embeddings, or efficiently and effectively navigate hyperparameter search spaces.

Curvature Filtrations for Graph Generative Model Evaluation

Jan 30, 2023

Abstract:Graph generative model evaluation necessitates understanding differences between graphs on the distributional level. This entails being able to harness salient attributes of graphs in an efficient manner. Curvature constitutes one such property of graphs, and has recently started to prove useful in characterising graphs. Its expressive properties, stability, and practical utility in model evaluation remain largely unexplored, however. We combine graph curvature descriptors with cutting-edge methods from topological data analysis to obtain robust, expressive descriptors for evaluating graph generative models.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge