Jenny Xu

HyperMODEST: Self-Supervised 3D Object Detection with Confidence Score Filtering

Apr 27, 2023

Abstract: Current LiDAR-based 3D object detectors for autonomous driving are almost entirely trained on human-annotated data collected in specific geographical domains with specific sensor setups, making them difficult to adapt to a different domain. MODEST is the first work to train 3D object detectors without any labels. Our work, HyperMODEST, proposes a universal method implemented on top of MODEST that substantially accelerates the self-training process and does not require tuning on a specific dataset. We filter out intermediate pseudo-labels with low confidence scores before they are used for data augmentation. On the nuScenes dataset, we observe a significant improvement of 1.6% in AP BEV in the 0-80m range at IoU=0.25 and an improvement of 1.7% in AP BEV in the 0-80m range at IoU=0.5, while using only one-fifth of the training time of the original MODEST approach. On the Lyft dataset, we also observe an improvement over the baseline during the first round of iterative self-training. We explore the trade-off between high precision and high recall in the early stage of the self-training process by comparing our proposed method with two other score filtering methods: confidence score filtering for pseudo-labels with and without static label retention. The code and models of this work are available at https://github.com/TRAILab/HyperMODEST
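The filtering step described in the abstract can be illustrated with a minimal sketch. This is not the HyperMODEST implementation: the `PseudoLabel` class, the box layout, the `filter_by_confidence` function, and the threshold value of 0.5 are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class PseudoLabel:
    # Hypothetical box layout: (x, y, z, length, width, height, yaw).
    box: Tuple[float, float, float, float, float, float, float]
    # Detector confidence score, assumed to lie in [0, 1].
    score: float


def filter_by_confidence(labels: List[PseudoLabel], threshold: float = 0.5) -> List[PseudoLabel]:
    """Keep only pseudo-labels whose score is at least `threshold`.

    The threshold here is an illustrative assumption, not a value from the paper.
    """
    return [label for label in labels if label.score >= threshold]


# Example: three pseudo-labels, one of which falls below the threshold.
example_labels = [
    PseudoLabel(box=(1.0, 2.0, 0.0, 4.5, 1.8, 1.6, 0.0), score=0.9),
    PseudoLabel(box=(10.0, -3.0, 0.0, 4.2, 1.7, 1.5, 1.2), score=0.2),
    PseudoLabel(box=(25.0, 6.0, 0.0, 4.8, 1.9, 1.7, -0.4), score=0.5),
]
kept_labels = filter_by_confidence(example_labels, threshold=0.5)
```

A higher threshold favors precision (fewer, more reliable pseudo-labels), while a lower one favors recall, which is the trade-off the abstract refers to.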

VoxelCache: Accelerating Online Mapping in Robotics and 3D Reconstruction Tasks

Oct 17, 2022

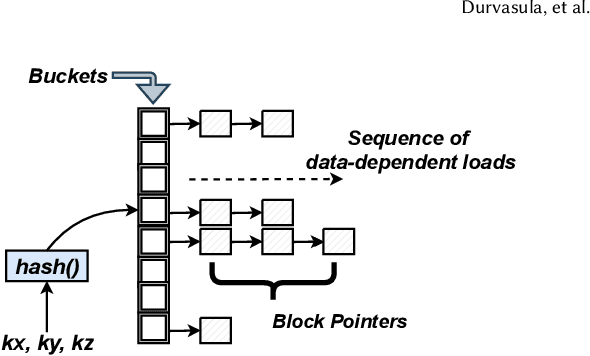

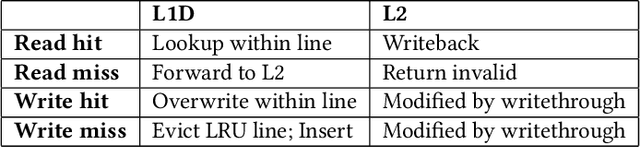

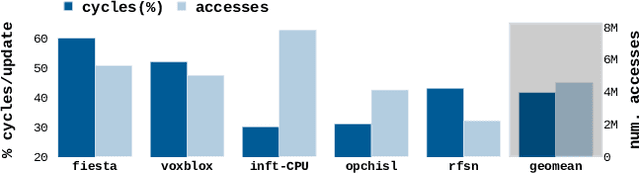

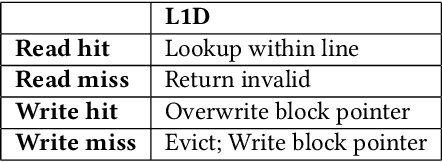

Abstract: Real-time 3D mapping is a critical component in many important applications today, including robotics, AR/VR, and 3D visualization. 3D mapping involves continuously fusing depth maps obtained from depth sensors in phones, robots, and autonomous vehicles into a single representative 3D model of the scene. Many important applications, e.g., global path planning and trajectory generation in micro aerial vehicles, require the construction of large maps at high resolutions. In this work, we identify mapping, i.e., the construction and updating of 3D maps, as a critical bottleneck in these applications. The memory requirements and access times of these maps limit the size of the environment and the resolution at which it can feasibly be mapped, especially in resource-constrained settings such as autonomous robot platforms and portable devices. To address this challenge, we propose VoxelCache: a hardware-software technique to accelerate map data access times in 3D mapping applications. We observe that mapping applications typically access voxels in the map that are spatially co-located with each other. We leverage this temporal locality in voxel accesses to cache indices to blocks of voxels, enabling quick lookup and avoiding expensive access times. We evaluate VoxelCache on widely used mapping and reconstruction applications on both GPUs and CPUs. We demonstrate an average speedup of 1.47X (up to 1.66X) on CPUs and 1.79X (up to 1.91X) on GPUs.
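The idea of caching indices to voxel blocks can be sketched in software as follows. This is only an illustration of the general technique, not the VoxelCache hardware-software design: the `BlockIndexCache` class, the block size of 8, the cache capacity, and the least-recently-used eviction policy are all assumptions, and the plain dictionary stands in for whatever slower map structure a real system would use.

```python
from collections import OrderedDict


class BlockIndexCache:
    """Small LRU cache in front of a (hypothetical) voxel-block table."""

    def __init__(self, block_size=8, capacity=4):
        self.block_size = block_size  # voxels per block edge (assumed)
        self.capacity = capacity      # number of cached block entries (assumed)
        self.table = {}               # stand-in for the full, slower block map
        self.cache = OrderedDict()    # block key -> block, in LRU order
        self.hits = 0
        self.misses = 0

    def block_key(self, x, y, z):
        # Integer voxel coordinates map to the block that contains them.
        b = self.block_size
        return (x // b, y // b, z // b)

    def lookup(self, x, y, z):
        key = self.block_key(x, y, z)
        if key in self.cache:
            # Cache hit: refresh recency and skip the full table lookup.
            self.cache.move_to_end(key)
            self.hits += 1
            return self.cache[key]
        # Cache miss: consult the full table, allocating the block if needed.
        self.misses += 1
        block = self.table.setdefault(key, {})
        self.cache[key] = block
        if len(self.cache) > self.capacity:
            self.cache.popitem(last=False)  # evict the least recently used entry
        return block


# Nearby voxels fall in the same block, so repeated accesses hit the cache.
voxel_cache = BlockIndexCache(block_size=8, capacity=2)
voxel_cache.lookup(0, 0, 0)   # miss: first access to block (0, 0, 0)
voxel_cache.lookup(1, 2, 3)   # hit: same block
voxel_cache.lookup(7, 7, 7)   # hit: same block
voxel_cache.lookup(8, 0, 0)   # miss: neighbouring block (1, 0, 0)
```

Because consecutive accesses tend to land in the same or neighbouring blocks, most lookups in this sketch are served from the small cache rather than the full table.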
