Jeffrey Redondo

Bridge to Real Environment with Hardware-in-the-loop for Wireless Artificial Intelligence Paradigms

Sep 25, 2024

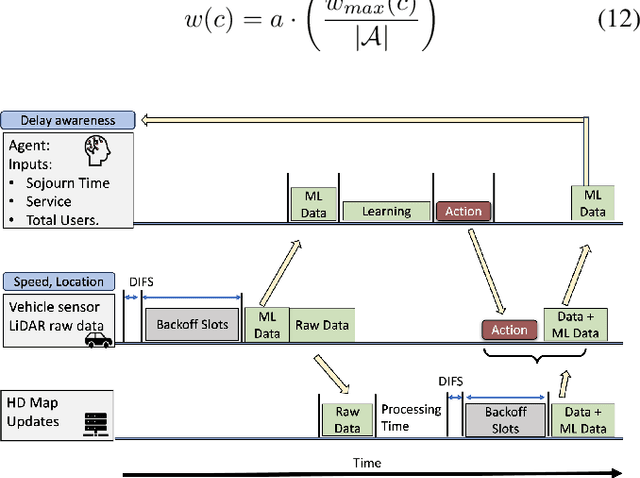

Abstract:Nowadays, many machine learning (ML) solutions to improve the wireless standard IEEE802.11p for Vehicular Adhoc Network (VANET) are commonly evaluated in the simulated world. At the same time, this approach could be cost-effective compared to real-world testing due to the high cost of vehicles. There is a risk of unexpected outcomes when these solutions are implemented in the real world, potentially leading to wasted resources. To mitigate this challenge, the hardware-in-the-loop is the way to move forward as it enables the opportunity to test in the real world and simulated worlds together. Therefore, we have developed what we believe is the pioneering hardware-in-the-loop for testing artificial intelligence, multiple services, and HD map data (LiDAR), in both simulated and real-world settings.

Multi-agent Assessment with QoS Enhancement for HD Map Updates in a Vehicular Network

Jul 31, 2024

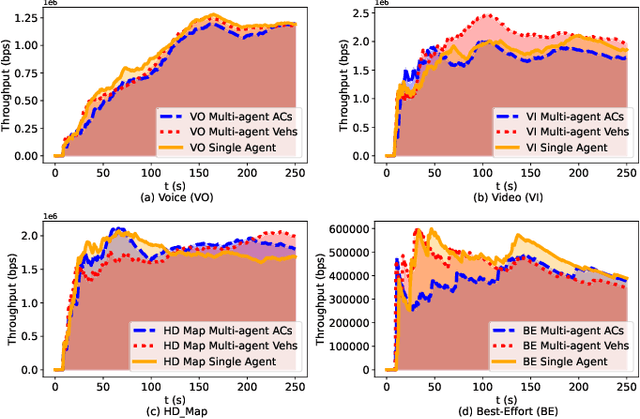

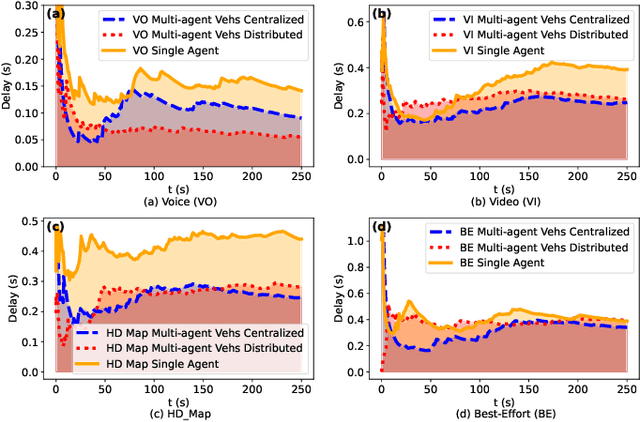

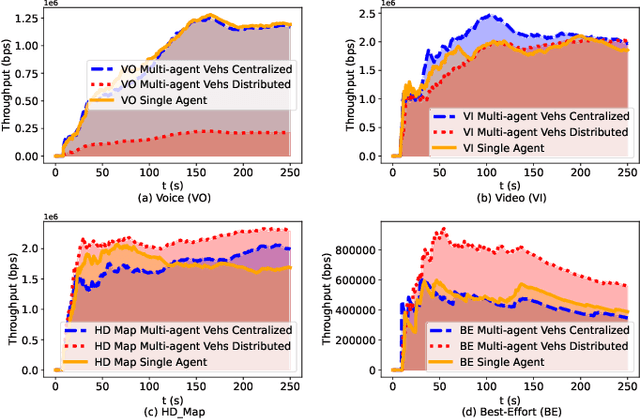

Abstract:Reinforcement Learning (RL) algorithms have been used to address the challenging problems in the offloading process of vehicular ad hoc networks (VANET). More recently, they have been utilized to improve the dissemination of high-definition (HD) Maps. Nevertheless, implementing solutions such as deep Q-learning (DQN) and Actor-critic at the autonomous vehicle (AV) may lead to an increase in the computational load, causing a heavy burden on the computational devices and higher costs. Moreover, their implementation might raise compatibility issues between technologies due to the required modifications to the standards. Therefore, in this paper, we assess the scalability of an application utilizing a Q-learning single-agent solution in a distributed multi-agent environment. This application improves the network performance by taking advantage of a smaller state, and action space whilst using a multi-agent approach. The proposed solution is extensively evaluated with different test cases involving reward function considering individual or overall network performance, number of agents, and centralized and distributed learning comparison. The experimental results demonstrate that the time latencies of our proposed solution conducted in voice, video, HD Map, and best-effort cases have significant improvements, with 40.4%, 36%, 43%, and 12% respectively, compared to the performances with the single-agent approach.

Coverage-aware and Reinforcement Learning Using Multi-agent Approach for HD Map QoS in a Realistic Environment

Jul 19, 2024

Abstract:One effective way to optimize the offloading process is by minimizing the transmission time. This is particularly true in a Vehicular Adhoc Network (VANET) where vehicles frequently download and upload High-definition (HD) map data which requires constant updates. This implies that latency and throughput requirements must be guaranteed by the wireless system. To achieve this, adjustable contention windows (CW) allocation strategies in the standard IEEE802.11p have been explored by numerous researchers. Nevertheless, their implementations demand alterations to the existing standard which is not always desirable. To address this issue, we proposed a Q-Learning algorithm that operates at the application layer. Moreover, it could be deployed in any wireless network thereby mitigating the compatibility issues. The solution has demonstrated a better network performance with relatively fewer optimization requirements as compared to the Deep Q Network (DQN) and Actor-Critic algorithms. The same is observed while evaluating the model in a multi-agent setup showing higher performance compared to the single-agent setup.

Enhancement of High-definition Map Update Service Through Coverage-aware and Reinforcement Learning

Feb 08, 2024

Abstract:High-definition (HD) Map systems will play a pivotal role in advancing autonomous driving to a higher level, thanks to the significant improvement over traditional two-dimensional (2D) maps. Creating an HD Map requires a huge amount of on-road and off-road data. Typically, these raw datasets are collected and uploaded to cloud-based HD map service providers through vehicular networks. Nevertheless, there are challenges in transmitting the raw data over vehicular wireless channels due to the dynamic topology. As the number of vehicles increases, there is a detrimental impact on service quality, which acts as a barrier to a real-time HD Map system for collaborative driving in Autonomous Vehicles (AV). In this paper, to overcome network congestion, a Q-learning coverage-time-awareness algorithm is presented to optimize the quality of service for vehicular networks and HD map updates. The algorithm is evaluated in an environment that imitates a dynamic scenario where vehicles enter and leave. Results showed an improvement in latency for HD map data of $75\%$, $73\%$, and $10\%$ compared with IEEE802.11p without Quality of Service (QoS), IEEE802.11 with QoS, and IEEE802.11p with new access category (AC) for HD map, respectively.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge