Je Yang

LearningGroup: A Real-Time Sparse Training on FPGA via Learnable Weight Grouping for Multi-Agent Reinforcement Learning

Oct 29, 2022

Abstract: Multi-agent reinforcement learning (MARL) is a powerful technology for building interactive artificial intelligence systems in applications such as multi-robot control and self-driving cars. Supervised models and single-agent reinforcement learning actively exploit network pruning. However, it remains unclear how pruning will work in MARL, given its cooperative and interactive characteristics.

In this paper, we present a real-time sparse training acceleration system named LearningGroup, which applies network pruning to MARL training for the first time using an algorithm/architecture co-design approach. We create sparsity with a weight grouping algorithm and propose an on-chip sparse data encoding loop (OSEL) that enables fast encoding with an efficient implementation. Based on OSEL's encoding format, LearningGroup performs efficient weight compression and allocates the computation workload to multiple cores, where each core processes multiple sparse rows of the weight matrix simultaneously with vector processing units. As a result, the LearningGroup system reduces the cycle time and memory footprint for sparse data generation by up to 5.72x and 6.81x, respectively. Its FPGA accelerator achieves 257.40-3629.48 GFLOPS throughput and 7.10-100.12 GFLOPS/W energy efficiency under various MARL conditions, which is 7.13x higher throughput and 12.43x higher energy efficiency than an Nvidia Titan RTX GPU. These gains come from fully on-chip training and the highly optimized dataflow and data format that the FPGA enables. Most importantly, the accelerator achieves up to a 12.52x speedup when processing sparse data compared with the dense case, the highest among state-of-the-art sparse training accelerators.
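The pipeline described above (group-wise sparsity, sparse encoding, row allocation to cores) can be sketched as follows. This is a minimal illustration under stated assumptions, not LearningGroup's actual method. It assumes each output row and input column already carries a group id, and it does not model how those ids are learned. It uses the standard CSR format as a stand-in for the paper's OSEL encoding and a hypothetical greedy rule to balance rows across cores. The function names `group_prune`, `encode_csr`, `assign_rows`, and `spmv` are illustrative.

```python
import heapq
from typing import List, Tuple

Matrix = List[List[float]]


def group_prune(weights: Matrix, row_groups: List[int], col_groups: List[int]) -> Matrix:
    """Keep w[r][c] only if row r and column c share a group id.

    Assumption: the group ids are given; learning them is not modeled here.
    """
    return [
        [w if row_groups[r] == col_groups[c] else 0.0 for c, w in enumerate(row)]
        for r, row in enumerate(weights)
    ]


def encode_csr(matrix: Matrix) -> Tuple[List[float], List[int], List[int]]:
    """Encode a dense matrix in CSR form (a stand-in for the paper's OSEL format)."""
    values: List[float] = []
    col_idx: List[int] = []
    row_ptr = [0]
    for row in matrix:
        for c, w in enumerate(row):
            if w != 0.0:
                values.append(w)
                col_idx.append(c)
        row_ptr.append(len(values))  # row i spans values[row_ptr[i]:row_ptr[i + 1]]
    return values, col_idx, row_ptr


def assign_rows(row_ptr: List[int], num_cores: int) -> List[List[int]]:
    """Hypothetical allocation: give each row, heaviest first, to the least-loaded core."""
    nnz = [row_ptr[i + 1] - row_ptr[i] for i in range(len(row_ptr) - 1)]
    heap = [(0, core) for core in range(num_cores)]  # (nonzeros assigned so far, core id)
    assignment: List[List[int]] = [[] for _ in range(num_cores)]
    for r in sorted(range(len(nnz)), key=lambda i: -nnz[i]):
        load, core = heapq.heappop(heap)
        assignment[core].append(r)
        heapq.heappush(heap, (load + nnz[r], core))
    return assignment


def spmv(values: List[float], col_idx: List[int], row_ptr: List[int], x: List[float]) -> List[float]:
    """Sparse matrix-vector product that only touches the stored nonzeros."""
    return [
        sum(values[k] * x[col_idx[k]] for k in range(row_ptr[r], row_ptr[r + 1]))
        for r in range(len(row_ptr) - 1)
    ]
```

For example, with row groups `[0, 0, 1, 1]` and column groups `[0, 1, 0, 1]`, a 4x4 matrix keeps half its weights, each row stores two nonzeros, and two cores each receive two rows. The greedy heaviest-first rule is only one simple way to even out per-core work when rows have unequal nonzero counts.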

FIXAR: A Fixed-Point Deep Reinforcement Learning Platform with Quantization-Aware Training and Adaptive Parallelism

Feb 24, 2021

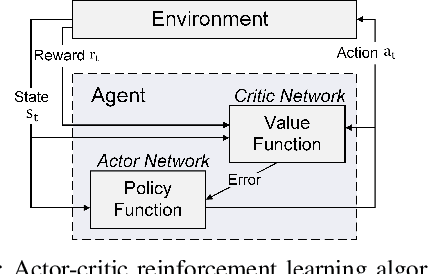

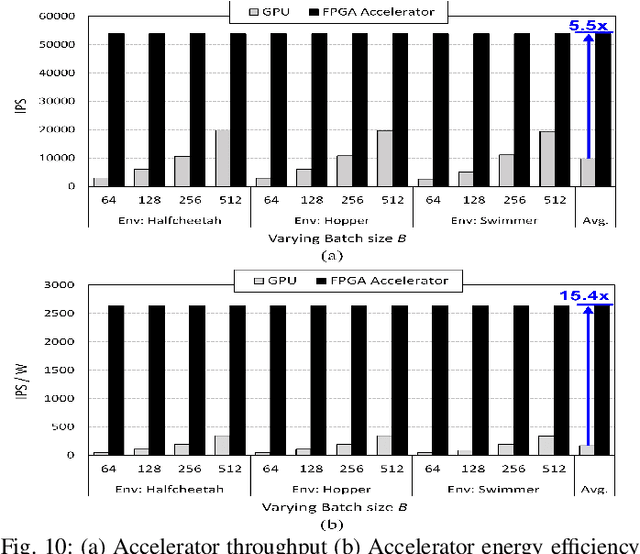

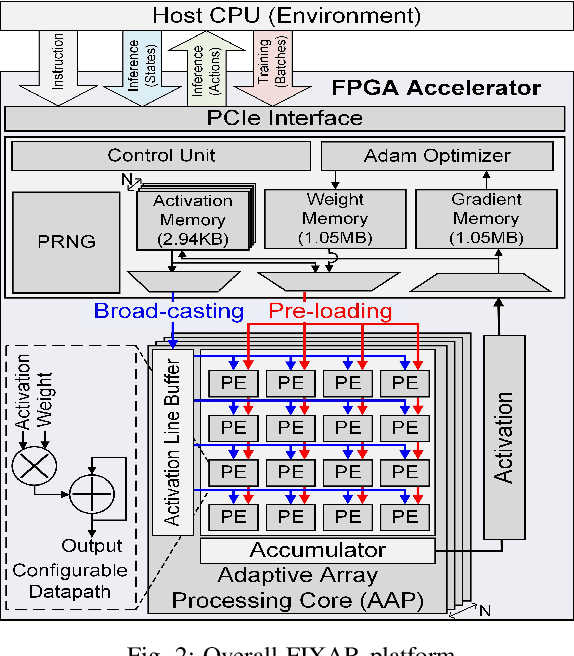

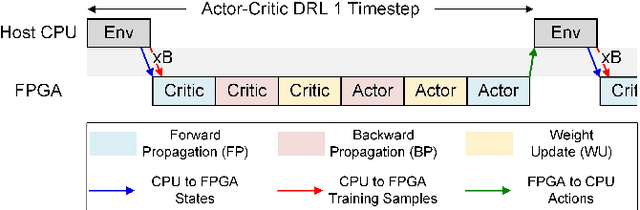

Abstract: In this paper, we present a deep reinforcement learning platform named FIXAR, which is the first to employ fixed-point data types and arithmetic units, using a SW/HW co-design approach. Starting from 32-bit fixed-point data, Quantization-Aware Training (QAT) reduces the data precision based on the range of activations and performs retraining to minimize reward degradation. FIXAR proposes an adaptive array processing core composed of configurable processing elements that supports both intra-layer and intra-batch parallelism for high-throughput inference and training. Finally, FIXAR is implemented on a Xilinx U50 and achieves a training throughput of 25293.3 inferences per second (IPS) and an accelerator efficiency of 2638.0 IPS/W. This is 2.7 times faster and 15.4 times more energy efficient than a CPU-GPU platform, without any accuracy degradation.
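The idea of reducing fixed-point precision based on the activation range can be illustrated with a small quantizer. This is a sketch under assumptions, not FIXAR's QAT procedure. It assumes signed fixed-point with one sign bit, reserves just enough integer bits to cover the largest observed magnitude, and assigns the remaining bits to the fraction. The retraining step is not shown. The names `choose_frac_bits`, `quantize`, and `fake_quantize` are hypothetical.

```python
import math
from typing import List, Tuple


def choose_frac_bits(values: List[float], total_bits: int) -> int:
    """Reserve 1 sign bit plus enough integer bits for max |value|; the rest are fractional."""
    max_abs = max(abs(v) for v in values)
    int_bits = math.floor(math.log2(max_abs)) + 1 if max_abs >= 1.0 else 0
    frac_bits = total_bits - 1 - int_bits
    if frac_bits < 0:
        raise ValueError("total_bits is too small for the observed range")
    return frac_bits


def quantize(values: List[float], total_bits: int, frac_bits: int) -> List[int]:
    """Map each value to a signed integer code in units of 2**-frac_bits, with saturation."""
    scale = 2 ** frac_bits
    q_min, q_max = -(2 ** (total_bits - 1)), 2 ** (total_bits - 1) - 1
    # Python's round() rounds halfway cases to the nearest even integer.
    return [min(q_max, max(q_min, round(v * scale))) for v in values]


def fake_quantize(values: List[float], total_bits: int) -> Tuple[List[float], int]:
    """Quantize then dequantize, the kind of forward-pass rounding that QAT retrains against."""
    frac_bits = choose_frac_bits(values, total_bits)
    codes = quantize(values, total_bits, frac_bits)
    return [c / 2 ** frac_bits for c in codes], frac_bits
```

With `total_bits=8`, the values `[0.75, -1.3, 2.6]` need two integer bits, which leaves five fractional bits, and each value moves by at most half a step (2**-6). With `total_bits=16`, the same range leaves 13 fractional bits. The precision budget therefore follows the activation range rather than being fixed in advance.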
