Javad Salimi Sartakhti

CTRAN: CNN-Transformer-based Network for Natural Language Understanding

Mar 19, 2023

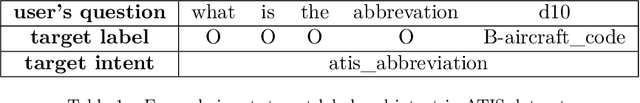

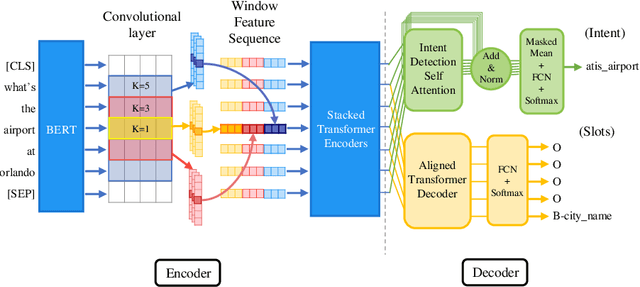

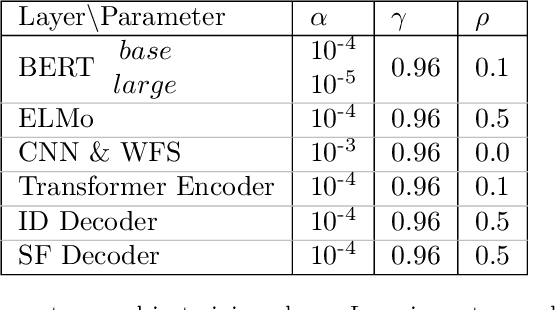

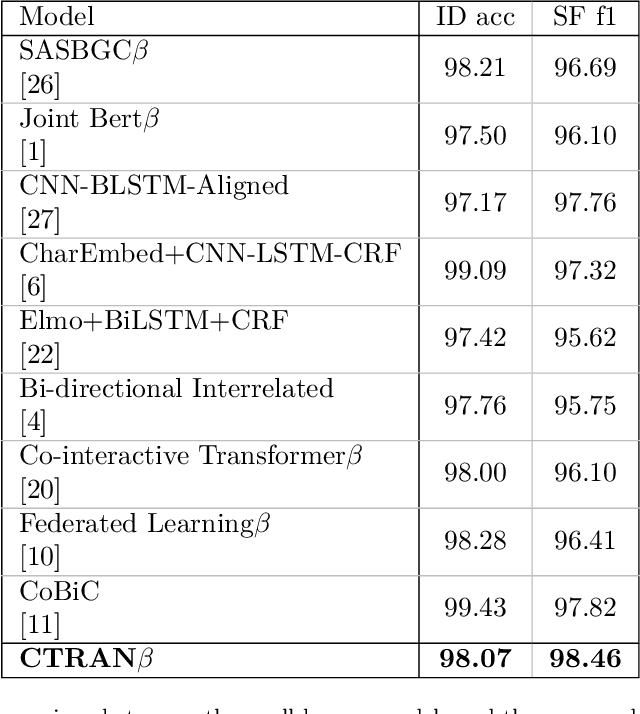

Abstract: Intent detection and slot filling are the two main tasks in natural language understanding. In this study, we propose CTRAN, a novel encoder-decoder CNN-Transformer-based architecture for intent detection and slot filling. In the encoder, we use BERT followed by several convolutional layers, and rearrange the output using a window feature sequence. Stacked Transformer encoders follow the window feature sequence. For the intent-detection decoder, we use self-attention followed by a linear layer. In the slot-filling decoder, we introduce the aligned Transformer decoder, which uses a zero diagonal mask to align output tags with input tokens. We apply our network to ATIS and SNIPS and surpass the current state of the art in slot filling on both datasets. Furthermore, we incorporate the language model as word embeddings and show that this strategy yields better results than using the language model as an encoder.

Fuzzy Least Squares Twin Support Vector Machines

Jul 22, 2016

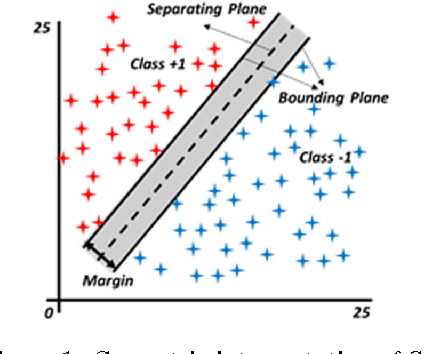

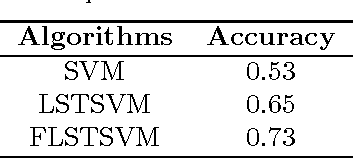

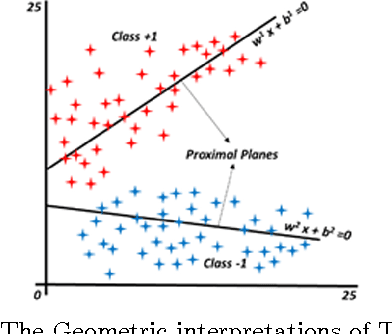

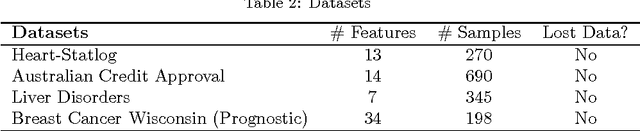

Abstract: Least Squares Twin Support Vector Machine (LSTSVM) is an extremely efficient and fast version of the SVM algorithm for binary classification. LSTSVM combines the ideas of Least Squares SVM and Twin SVM: two non-parallel hyperplanes are found by solving two systems of linear equations. Although the algorithm is very fast and efficient in many classification tasks, it cannot cope with two features of real-world problems. First, in many real-world classification problems, it is almost impossible to assign data points to a single class. Second, data points in real-world problems may differ in importance. In this study, we propose a novel version of LSTSVM based on fuzzy concepts to deal with these two characteristics of real-world data. The algorithm, called Fuzzy LSTSVM (FLSTSVM), provides more flexibility than the binary classification of LSTSVM. Two models are proposed for the algorithm. In the first model, a fuzzy membership value is assigned to each data point and the hyperplanes are optimized based on these fuzzy samples. In the second model, we construct fuzzy hyperplanes to classify data. Finally, we apply the proposed FLSTSVM to one artificial and three real-world datasets. Results demonstrate that FLSTSVM obtains better performance than SVM and LSTSVM.
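To make the "two systems of linear equations" concrete, here is a minimal NumPy sketch of the standard linear LSTSVM that the abstract builds on. It is not the fuzzy variant proposed in the paper. The function names `fit_lstsvm` and `predict_lstsvm`, the penalty parameters `c1` and `c2`, and the small `ridge` term added for numerical stability are illustrative assumptions, as is the choice to classify by normalized distance to each hyperplane.

```python
import numpy as np

def fit_lstsvm(A, B, c1=1.0, c2=1.0, ridge=1e-8):
    """Fit a linear LSTSVM (sketch). A: class +1 points, B: class -1 points.

    Each hyperplane is returned as a vector u = [w; b] and is obtained
    from one linear system rather than a quadratic program.
    """
    # Augment the data with a column of ones so that u = [w; b].
    E = np.hstack([A, np.ones((A.shape[0], 1))])
    F = np.hstack([B, np.ones((B.shape[0], 1))])
    e1 = np.ones(A.shape[0])
    e2 = np.ones(B.shape[0])
    # Assumption: a tiny ridge term keeps the systems well conditioned.
    reg = ridge * np.eye(E.shape[1])
    # Hyperplane 1: close to class +1, about unit distance from class -1.
    u1 = -np.linalg.solve(F.T @ F + (E.T @ E) / c1 + reg, F.T @ e2)
    # Hyperplane 2: close to class -1, about unit distance from class +1.
    u2 = np.linalg.solve(E.T @ E + (F.T @ F) / c2 + reg, E.T @ e1)
    return u1, u2

def predict_lstsvm(X, u1, u2):
    """Assign each row of X to the class whose hyperplane is nearer."""
    d1 = np.abs(X @ u1[:-1] + u1[-1]) / np.linalg.norm(u1[:-1])
    d2 = np.abs(X @ u2[:-1] + u2[-1]) / np.linalg.norm(u2[:-1])
    return np.where(d1 <= d2, 1, -1)
```

Calling `fit_lstsvm(A, B)` on two point sets and then `predict_lstsvm(X, u1, u2)` returns labels in {+1, -1}. The first FLSTSVM model described above would, roughly, weight each sample's error term by its fuzzy membership; that weighting is not implemented here because the abstract does not give its exact form.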
