Jason Wong

Evaluating Fairness in Transaction Fraud Models: Fairness Metrics, Bias Audits, and Challenges

Sep 06, 2024Abstract:Ensuring fairness in transaction fraud detection models is vital due to the potential harms and legal implications of biased decision-making. Despite extensive research on algorithmic fairness, there is a notable gap in the study of bias in fraud detection models, mainly due to the field's unique challenges. These challenges include the need for fairness metrics that account for fraud data's imbalanced nature and the tradeoff between fraud protection and service quality. To address this gap, we present a comprehensive fairness evaluation of transaction fraud models using public synthetic datasets, marking the first algorithmic bias audit in this domain. Our findings reveal three critical insights: (1) Certain fairness metrics expose significant bias only after normalization, highlighting the impact of class imbalance. (2) Bias is significant in both service quality-related parity metrics and fraud protection-related parity metrics. (3) The fairness through unawareness approach, which involved removing sensitive attributes such as gender, does not improve bias mitigation within these datasets, likely due to the presence of correlated proxies. We also discuss socio-technical fairness-related challenges in transaction fraud models. These insights underscore the need for a nuanced approach to fairness in fraud detection, balancing protection and service quality, and moving beyond simple bias mitigation strategies. Future work must focus on refining fairness metrics and developing methods tailored to the unique complexities of the transaction fraud domain.

Towards a Foundation Purchasing Model: Pretrained Generative Autoregression on Transaction Sequences

Jan 04, 2024

Abstract:Machine learning models underpin many modern financial systems for use cases such as fraud detection and churn prediction. Most are based on supervised learning with hand-engineered features, which relies heavily on the availability of labelled data. Large self-supervised generative models have shown tremendous success in natural language processing and computer vision, yet so far they haven't been adapted to multivariate time series of financial transactions. In this paper, we present a generative pretraining method that can be used to obtain contextualised embeddings of financial transactions. Benchmarks on public datasets demonstrate that it outperforms state-of-the-art self-supervised methods on a range of downstream tasks. We additionally perform large-scale pretraining of an embedding model using a corpus of data from 180 issuing banks containing 5.1 billion transactions and apply it to the card fraud detection problem on hold-out datasets. The embedding model significantly improves value detection rate at high precision thresholds and transfers well to out-of-domain distributions.

Locally Differentially Private Embedding Models in Distributed Fraud Prevention Systems

Jan 03, 2024

Abstract:Global financial crime activity is driving demand for machine learning solutions in fraud prevention. However, prevention systems are commonly serviced to financial institutions in isolation, and few provisions exist for data sharing due to fears of unintentional leaks and adversarial attacks. Collaborative learning advances in finance are rare, and it is hard to find real-world insights derived from privacy-preserving data processing systems. In this paper, we present a collaborative deep learning framework for fraud prevention, designed from a privacy standpoint, and awarded at the recent PETs Prize Challenges. We leverage latent embedded representations of varied-length transaction sequences, along with local differential privacy, in order to construct a data release mechanism which can securely inform externally hosted fraud and anomaly detection models. We assess our contribution on two distributed data sets donated by large payment networks, and demonstrate robustness to popular inference-time attacks, along with utility-privacy trade-offs analogous to published work in alternative application domains.

Semi-Equivariant GNN Architectures for Jet Tagging

Feb 14, 2022

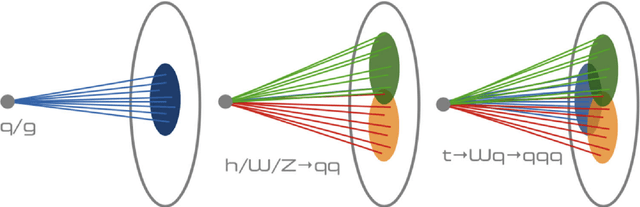

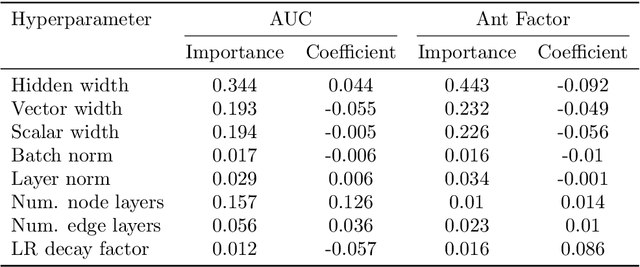

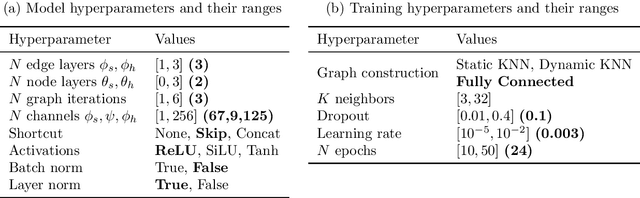

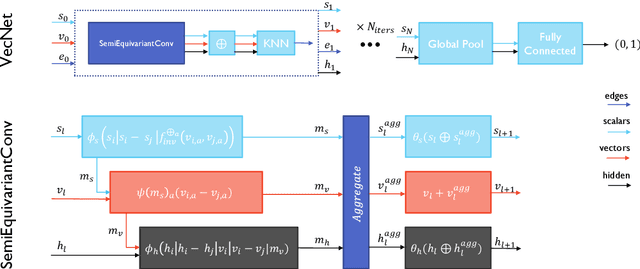

Abstract:Composing Graph Neural Networks (GNNs) of operations that respect physical symmetries has been suggested to give better model performance with a smaller number of learnable parameters. However, real-world applications, such as in high energy physics have not born this out. We present the novel architecture VecNet that combines both symmetry-respecting and unconstrained operations to study and tune the degree of physics-informed GNNs. We introduce a novel metric, the \textit{ant factor}, to quantify the resource-efficiency of each configuration in the search-space. We find that a generalized architecture such as ours can deliver optimal performance in resource-constrained applications.

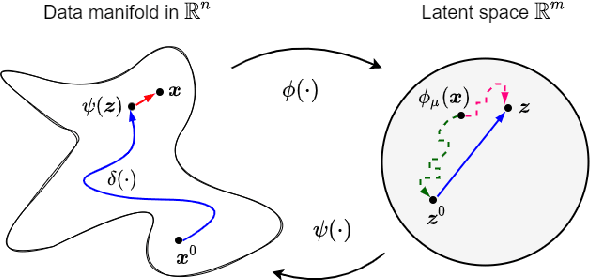

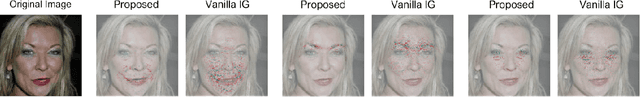

Path Integrals for the Attribution of Model Uncertainties

Jul 20, 2021

Abstract:Enabling interpretations of model uncertainties is of key importance in Bayesian machine learning applications. Often, this requires to meaningfully attribute predictive uncertainties to source features in an image, text or categorical array. However, popular attribution methods are particularly designed for classification and regression scores. In order to explain uncertainties, state of the art alternatives commonly procure counterfactual feature vectors, and proceed by making direct comparisons. In this paper, we leverage path integrals to attribute uncertainties in Bayesian differentiable models. We present a novel algorithm that relies on in-distribution curves connecting a feature vector to some counterfactual counterpart, and we retain desirable properties of interpretability methods. We validate our approach on benchmark image data sets with varying resolution, and show that it significantly simplifies interpretability over the existing alternatives.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge