Jake Daly

Generating GPU Compiler Heuristics using Reinforcement Learning

Nov 23, 2021

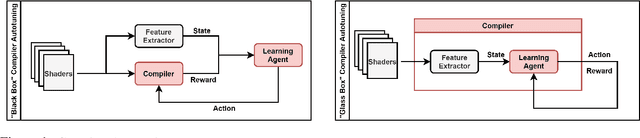

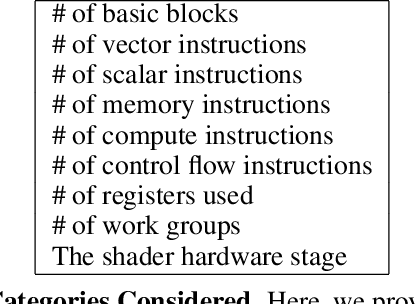

Abstract: GPU compilers are complex software programs with many optimizations specific to target hardware. These optimizations are often controlled by heuristics hand-designed by compiler experts using time- and resource-intensive processes. In this paper, we develop a GPU compiler autotuning framework that uses off-policy deep reinforcement learning to generate heuristics that improve the frame rates of graphics applications. Furthermore, we demonstrate the resilience of these learned heuristics to frequent compiler updates by analyzing their stability across a year of code check-ins without retraining. We show that our machine learning-based compiler autotuning framework matches or surpasses the frame rates for 98% of graphics benchmarks, with an average uplift of 1.6% and a maximum of 15.8%.

A Competitive Edge: Can FPGAs Beat GPUs at DCNN Inference Acceleration in Resource-Limited Edge Computing Applications?

Jan 30, 2021

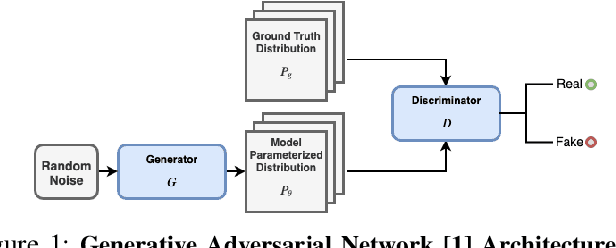

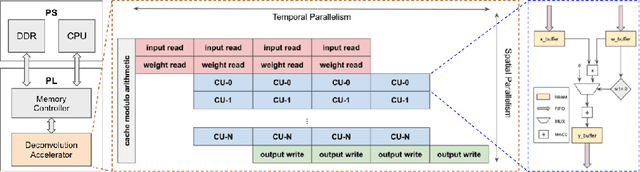

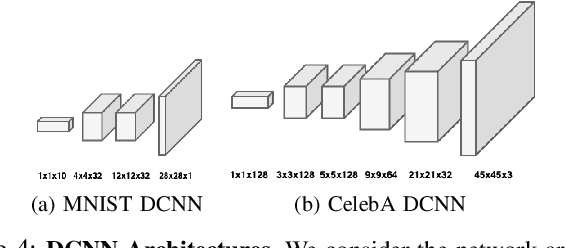

Abstract: When trained as generative models, Deep Learning algorithms have shown exceptional performance on tasks involving high-dimensional data such as image denoising and super-resolution. In an increasingly connected world dominated by mobile and edge devices, there is surging demand for these algorithms to run locally on embedded platforms. FPGAs, by virtue of their reprogrammability and low-power characteristics, are ideal candidates for these edge computing applications. As such, we design a spatio-temporally parallelized hardware architecture capable of accelerating a deconvolution algorithm optimized for power-efficient inference on a resource-limited FPGA. We propose this FPGA-based accelerator for Deconvolutional Neural Network (DCNN) inference in low-power edge computing applications. To this end, we develop methods that systematically exploit micro-architectural innovations, design space exploration, and statistical analysis. Using a Xilinx PYNQ-Z2 FPGA, we leverage our architecture to accelerate inference for two DCNNs trained on the MNIST and CelebA datasets using the Wasserstein GAN framework. On these networks, our FPGA design achieves a higher throughput-to-power ratio with lower run-to-run variation than the NVIDIA Jetson TX1 edge computing GPU.
