Jacqueline Yau

A Study of Face Obfuscation in ImageNet

Mar 14, 2021

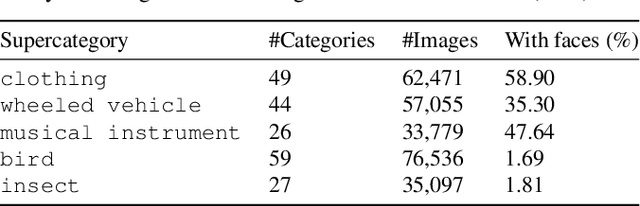

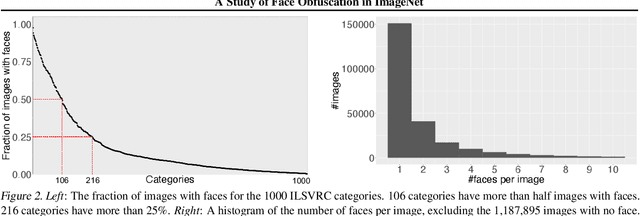

Abstract:Face obfuscation (blurring, mosaicing, etc.) has been shown to be effective for privacy protection; nevertheless, object recognition research typically assumes access to complete, unobfuscated images. In this paper, we explore the effects of face obfuscation on the popular ImageNet challenge visual recognition benchmark. Most categories in the ImageNet challenge are not people categories; however, many incidental people appear in the images, and their privacy is a concern. We first annotate faces in the dataset. Then we demonstrate that face blurring -- a typical obfuscation technique -- has minimal impact on the accuracy of recognition models. Concretely, we benchmark multiple deep neural networks on face-blurred images and observe that the overall recognition accuracy drops only slightly (no more than 0.68%). Further, we experiment with transfer learning to 4 downstream tasks (object recognition, scene recognition, face attribute classification, and object detection) and show that features learned on face-blurred images are equally transferable. Our work demonstrates the feasibility of privacy-aware visual recognition, improves the highly-used ImageNet challenge benchmark, and suggests an important path for future visual datasets. Data and code are available at https://github.com/princetonvisualai/imagenet-face-obfuscation.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge