J. P. Dym

CompareNet: Anatomical Segmentation Network with Deep Non-local Label Fusion

Oct 10, 2019

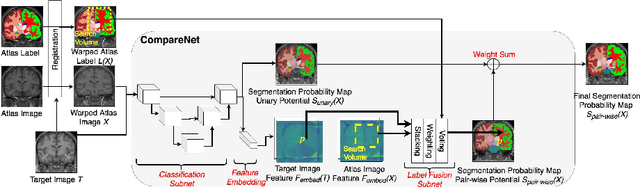

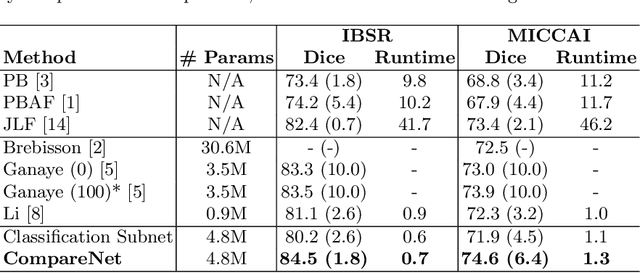

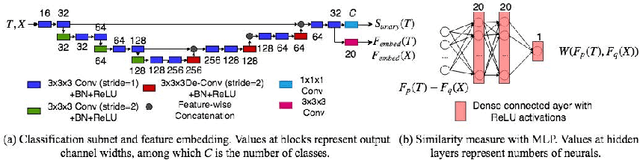

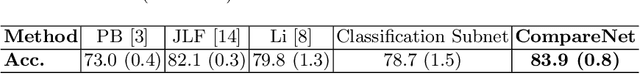

Abstract:Label propagation is a popular technique for anatomical segmentation. In this work, we propose a novel deep framework for label propagation based on non-local label fusion. Our framework, named CompareNet, incorporates subnets for both extracting discriminating features, and learning the similarity measure, which lead to accurate segmentation. We also introduce the voxel-wise classification as an unary potential to the label fusion function, for alleviating the search failure issue of the existing non-local fusion strategies. Moreover, CompareNet is end-to-end trainable, and all the parameters are learnt together for the optimal performance. By evaluating CompareNet on two public datasets IBSRv2 and MICCAI 2012 for brain segmentation, we show it outperforms state-of-the-art methods in accuracy, while being robust to pathologies.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge