Isabelle Tellier

LIFO

Effective Spoken Language Labeling with Deep Recurrent Neural Networks

Jun 20, 2017

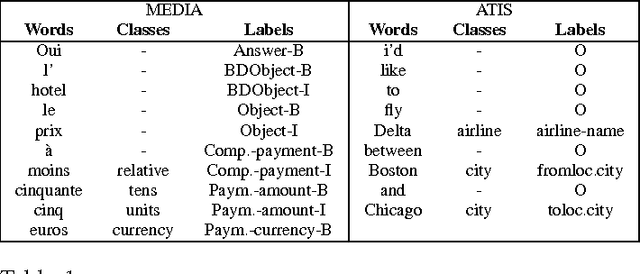

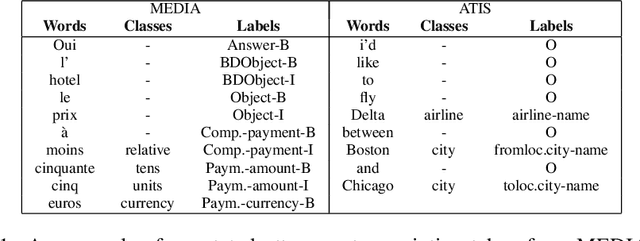

Abstract: Understanding spoken language is a highly complex problem that can be decomposed into several simpler tasks. In this paper, we focus on Spoken Language Understanding (SLU), the module of spoken dialog systems responsible for extracting a semantic interpretation from the user's utterance. The task is treated as a labeling problem. In the past, SLU has been performed with a wide variety of probabilistic models. The rise of neural networks in the last couple of years has opened interesting new research directions in this domain. Recurrent Neural Networks (RNNs) in particular can not only represent several pieces of information as embeddings but also, thanks to their recurrent architecture, encode relatively long contexts as embeddings. Such long contexts are generally out of reach for the models previously used for SLU. In this paper we propose novel RNN architectures for SLU that outperform previous ones. Starting from a published idea as a base block, we design new deep RNNs that achieve state-of-the-art results on two widely used SLU corpora: ATIS (Air Travel Information System), in English, and MEDIA (hotel information and reservation in France), in French.

Label-Dependencies Aware Recurrent Neural Networks

Jun 06, 2017

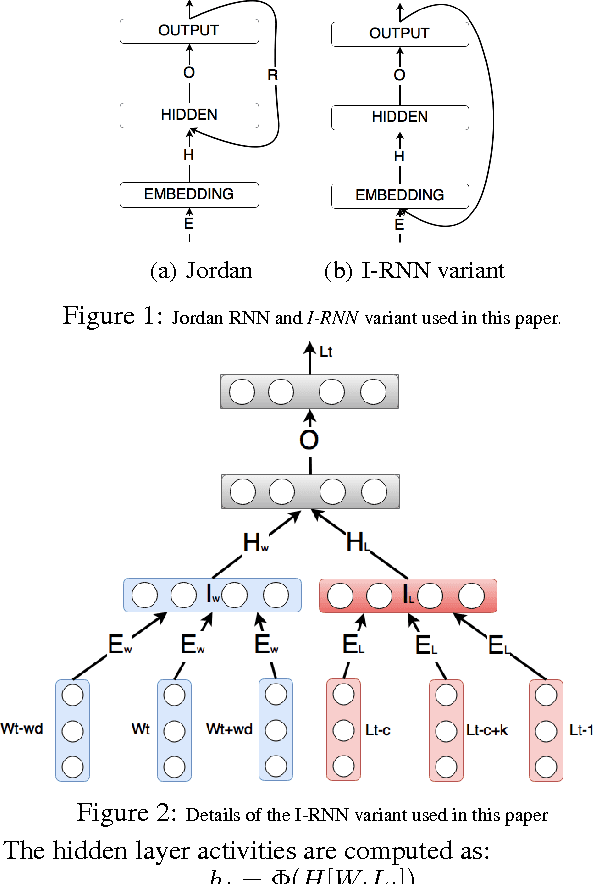

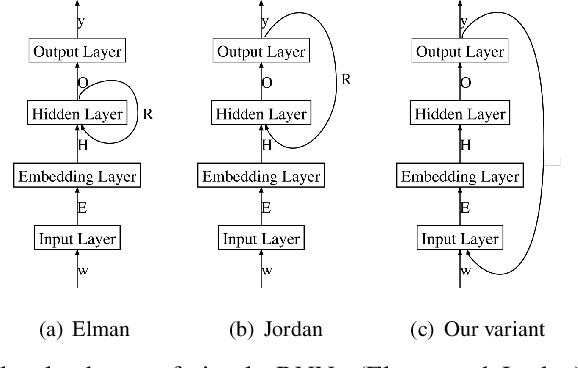

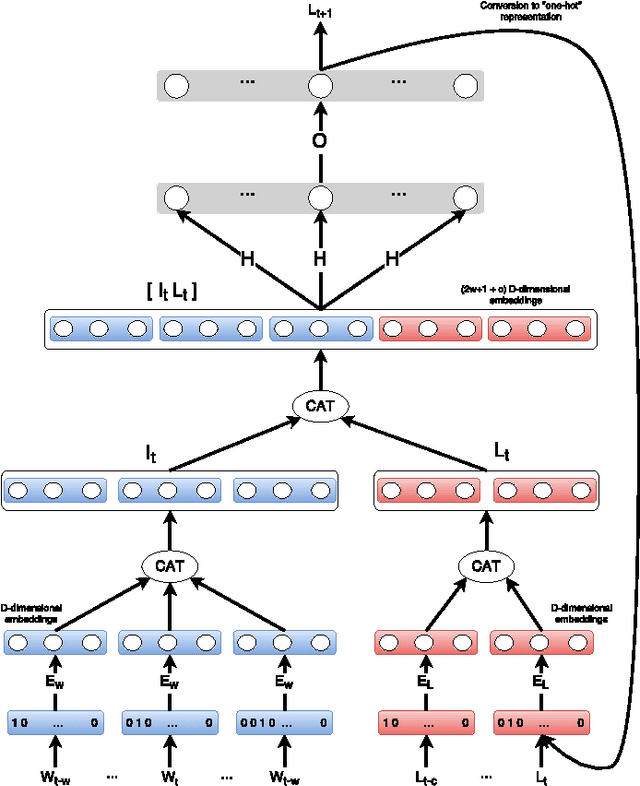

Abstract: In the last few years, Recurrent Neural Networks (RNNs) have proved effective on several NLP tasks. Despite this success, their ability to model sequence labeling is still limited. This has led research toward solutions where RNNs are combined with models that have already proved effective in this domain, such as CRFs. In this work we propose a far simpler yet very effective solution: an evolution of the simple Jordan RNN, in which labels are re-injected as input into the network and converted into embeddings, in the same way as words. We compare this RNN variant to the other RNN models (Elman and Jordan RNNs, LSTM and GRU) on two well-known Spoken Language Understanding (SLU) tasks. Thanks to label embeddings and their combination at the hidden layer, the proposed variant, which uses more parameters than Elman and Jordan RNNs but far fewer than LSTM and GRU, is not only more effective than the other RNNs but also outperforms sophisticated CRF models.
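The core idea above (the previously predicted label is embedded like a word and combined with the word embedding at the hidden layer) can be illustrated with a minimal sketch. This is not the authors' implementation: it assumes a single current word and a single previous label (no context windows), a hypothetical `<START>` label for the first position, a `tanh` hidden layer, greedy left-to-right decoding, and untrained random weights with hypothetical sizes.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes, chosen only for illustration.
VOCAB, N_LABELS, D_WORD, D_LABEL, D_HIDDEN = 10, 4, 8, 5, 16
START = N_LABELS  # index of a hypothetical <START> label embedding

# Untrained, randomly initialised parameters (assumption: no training shown).
E_word = rng.normal(scale=0.1, size=(VOCAB, D_WORD))            # word embeddings
E_label = rng.normal(scale=0.1, size=(N_LABELS + 1, D_LABEL))   # label embeddings (+ <START>)
W_h = rng.normal(scale=0.1, size=(D_WORD + D_LABEL, D_HIDDEN))  # input -> hidden
W_o = rng.normal(scale=0.1, size=(D_HIDDEN, N_LABELS))          # hidden -> labels


def softmax(z):
    z = z - z.max()  # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum()


def label_sequence(word_ids):
    """Greedy labeling: the label predicted at step t-1 is embedded and
    fed back as input at step t, alongside the current word embedding."""
    prev_label = START
    labels, probs = [], []
    for w in word_ids:
        x = np.concatenate([E_word[w], E_label[prev_label]])  # word + label embeddings
        h = np.tanh(x @ W_h)                                   # combined at the hidden layer
        p = softmax(h @ W_o)                                   # distribution over labels
        prev_label = int(np.argmax(p))                         # re-injected at the next step
        labels.append(prev_label)
        probs.append(p)
    return labels, np.array(probs)


labels, probs = label_sequence([1, 4, 2, 7])
print(labels)
```

Because the label fed back is an embedding rather than a one-hot vector, the network can learn similarities between labels, which is the intuition the abstract attributes to the label embeddings.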

Improving Recurrent Neural Networks For Sequence Labelling

Jun 08, 2016

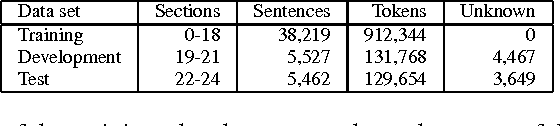

Abstract: In this paper we study different types of Recurrent Neural Networks (RNNs) for sequence labeling tasks. We propose two new RNN variants integrating improvements for sequence labeling, and we compare them to the more traditional Elman and Jordan RNNs. We compare all models, traditional and new, on four distinct sequence labeling tasks: two of Spoken Language Understanding (ATIS and MEDIA) and two of POS tagging, on the French Treebank (FTB) and Penn Treebank (PTB) corpora. The results show that our new RNN variants are always more effective than the others.
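For reference, the textbook difference between the two traditional architectures mentioned above lies in what is fed back: an Elman RNN feeds back its previous hidden state, while a Jordan RNN feeds back its previous output. In the generic formulation below, $x_t$ is the input at step $t$, $h_t$ the hidden state, $y_t$ the output distribution, $\Phi$ a nonlinearity, and $W$, $U$, $V$ weight matrices; the notation is an assumption and not taken from the paper.

```latex
% Elman RNN: the previous hidden state is fed back
h_t = \Phi\left(W x_t + U h_{t-1}\right), \qquad y_t = \mathrm{softmax}\left(V h_t\right)

% Jordan RNN: the previous output is fed back
h_t = \Phi\left(W x_t + U y_{t-1}\right), \qquad y_t = \mathrm{softmax}\left(V h_t\right)
```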

Etiqueter un corpus oral par apprentissage automatique à l'aide de connaissances linguistiques (Labeling an oral corpus by machine learning using linguistic knowledge)

Mar 30, 2010

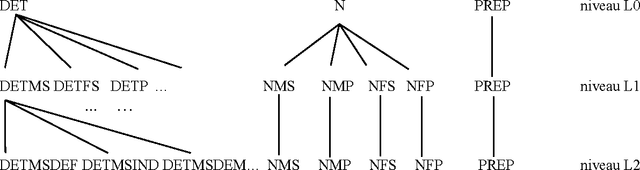

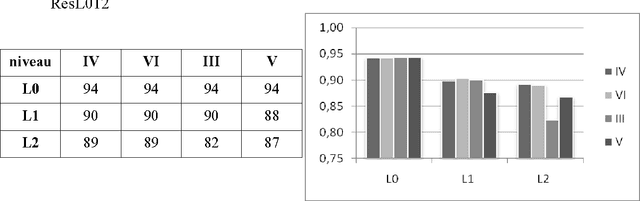

Abstract: Thanks to the Eslo1 ("Enquête sociolinguistique d'Orléans", i.e. "Sociolinguistic Inquiry of Orléans") campaign, a large oral corpus has been gathered and transcribed in textual format. The purpose of the work presented here is to associate a morpho-syntactic label with each unit of this corpus. To this end, we first studied the specificities of the necessary labels and their various possible levels of description. This study led to a new, original hierarchical structure of labels. Then, considering that our new label set differed from the one used in every available tool, and that these tools are usually not suited to oral data, we built a new labeling tool with a Machine Learning approach, from data labeled by Cordial and corrected by hand. We applied linear CRFs (Conditional Random Fields), trying to take the best possible advantage of the linguistic knowledge used to define the label set. We obtain an accuracy between 85% and 90%, depending on the parameters used.
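A linear CRF assigns labels to a whole sequence at once by finding the highest-scoring label sequence. The sketch below shows only that decoding step (standard Viterbi decoding for a linear-chain model), not the authors' tool: it assumes the per-position label scores (`emission`, i.e. summed weighted features) and label-to-label scores (`transition`) are already given, and omits feature extraction and weight training. The toy scores and the label names in the comments are hypothetical.

```python
def viterbi(emission, transition):
    """Return the highest-scoring label sequence under a linear-chain model.

    emission[t][y]: score of label y at position t (e.g. summed weighted features).
    transition[y_prev][y]: score of label y following label y_prev.
    """
    n_steps, n_labels = len(emission), len(emission[0])
    score = list(emission[0])  # best score of any path ending in each label at t=0
    backptr = []               # backptr[t-1][y]: best previous label for label y at t
    for t in range(1, n_steps):
        new_score, ptr = [], []
        for y in range(n_labels):
            best_prev = max(range(n_labels), key=lambda yp: score[yp] + transition[yp][y])
            new_score.append(score[best_prev] + transition[best_prev][y] + emission[t][y])
            ptr.append(best_prev)
        score = new_score
        backptr.append(ptr)
    # Backtrack from the best final label.
    best = max(range(n_labels), key=lambda y: score[y])
    path = [best]
    for ptr in reversed(backptr):
        best = ptr[best]
        path.append(best)
    path.reverse()
    return path


# Hypothetical toy example with labels 0=DET, 1=NOUN, 2=VERB.
emission = [[2, 0, 0], [0, 1, 1], [0, 0, 2]]
transition = [[0, 2, 0], [0, 0, 1], [0, 0, 0]]
print(viterbi(emission, transition))  # [0, 1, 2]
```

At the second position, NOUN and VERB have equal emission scores; the transition score from DET is what makes NOUN win, which is the kind of label dependency a linear CRF captures.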
