Indrajeet Kumar Sinha

Equitable-FL: Federated Learning with Sparsity for Resource-Constrained Environment

Sep 02, 2023

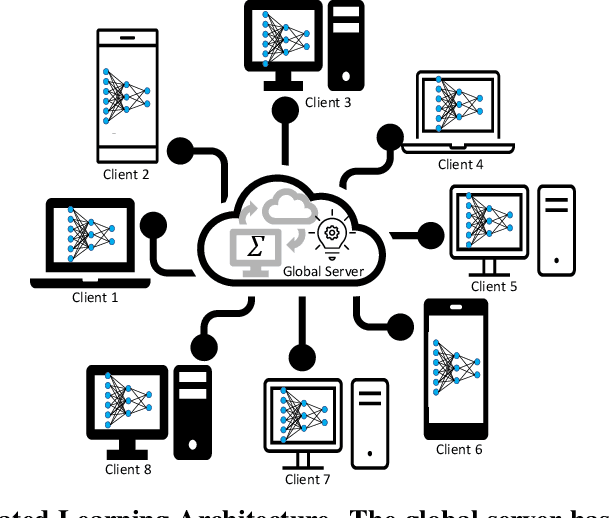

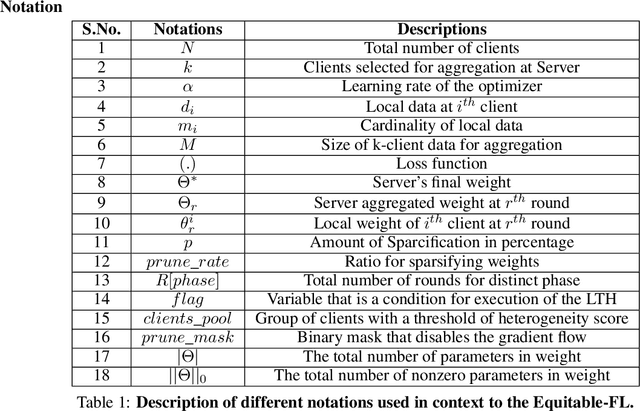

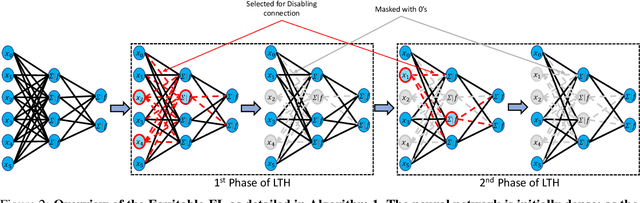

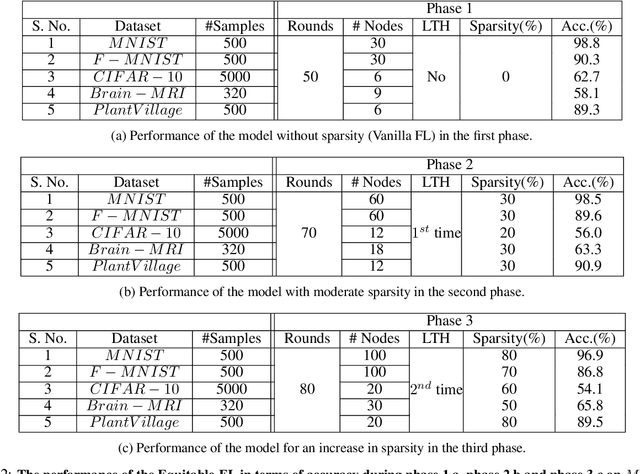

Abstract:In Federated Learning, model training is performed across multiple computing devices, where only parameters are shared with a common central server without exchanging their data instances. This strategy assumes abundance of resources on individual clients and utilizes these resources to build a richer model as user's models. However, when the assumption of the abundance of resources is violated, learning may not be possible as some nodes may not be able to participate in the process. In this paper, we propose a sparse form of federated learning that performs well in a Resource Constrained Environment. Our goal is to make learning possible, regardless of a node's space, computing, or bandwidth scarcity. The method is based on the observation that model size viz a viz available resources defines resource scarcity, which entails that reduction of the number of parameters without affecting accuracy is key to model training in a resource-constrained environment. In this work, the Lottery Ticket Hypothesis approach is utilized to progressively sparsify models to encourage nodes with resource scarcity to participate in collaborative training. We validate Equitable-FL on the $MNIST$, $F-MNIST$, and $CIFAR-10$ benchmark datasets, as well as the $Brain-MRI$ data and the $PlantVillage$ datasets. Further, we examine the effect of sparsity on performance, model size compaction, and speed-up for training. Results obtained from experiments performed for training convolutional neural networks validate the efficacy of Equitable-FL in heterogeneous resource-constrained learning environment.

FAM: fast adaptive federated meta-learning

Sep 01, 2023

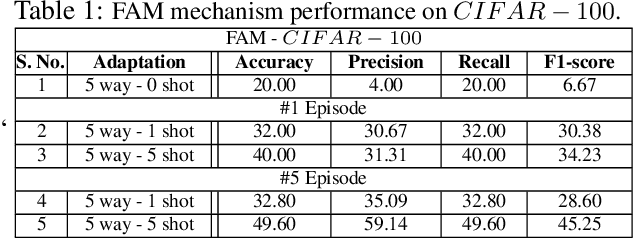

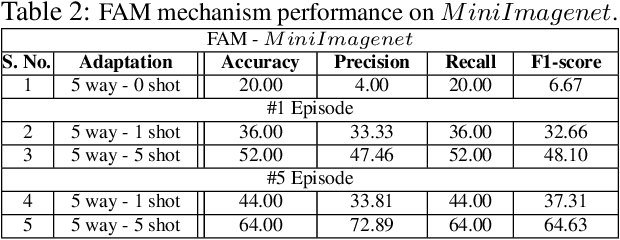

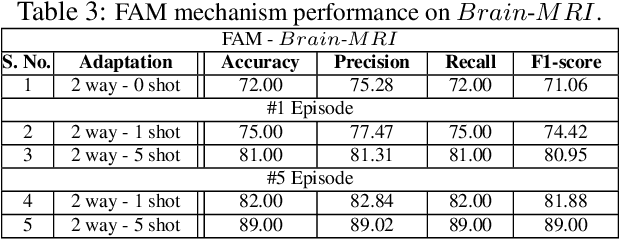

Abstract:In this work, we propose a fast adaptive federated meta-learning (FAM) framework for collaboratively learning a single global model, which can then be personalized locally on individual clients. Federated learning enables multiple clients to collaborate to train a model without sharing data. Clients with insufficient data or data diversity participate in federated learning to learn a model with superior performance. Nonetheless, learning suffers when data distributions diverge. There is a need to learn a global model that can be adapted using client's specific information to create personalized models on clients is required. MRI data suffers from this problem, wherein, one, due to data acquisition challenges, local data at a site is sufficient for training an accurate model and two, there is a restriction of data sharing due to privacy concerns and three, there is a need for personalization of a learnt shared global model on account of domain shift across client sites. The global model is sparse and captures the common features in the MRI. This skeleton network is grown on each client to train a personalized model by learning additional client-specific parameters from local data. Experimental results show that the personalization process at each client quickly converges using a limited number of epochs. The personalized client models outperformed the locally trained models, demonstrating the efficacy of the FAM mechanism. Additionally, the sparse parameter set to be communicated during federated learning drastically reduced communication overhead, which makes the scheme viable for networks with limited resources.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge