Hyunwoo Yu

VIPA: Visual Informative Part Attention for Referring Image Segmentation

Feb 16, 2026

Abstract: Referring Image Segmentation (RIS) aims to segment a target object described by a natural language expression. Existing methods have evolved by incorporating vision information into the language tokens. To more effectively exploit visual contexts for fine-grained segmentation, we propose a novel Visual Informative Part Attention (VIPA) framework for referring image segmentation. VIPA leverages the informative parts of visual contexts, called a visual expression, which can effectively provide the structural and semantic visual target information to the network. This design reduces high-variance cross-modal projection and enhances semantic consistency in the attention mechanism for referring image segmentation. We also design a visual expression generator (VEG) module, which retrieves informative visual tokens via local-global linguistic context cues and refines the retrieved tokens to reduce noise and share informative visual attributes. This module allows the visual expression to consider comprehensive contexts and capture semantic visual contexts of informative regions. In this way, our framework enables the network's attention to robustly align with the fine-grained regions of interest. Extensive experiments and visual analysis demonstrate the effectiveness of our approach. Our VIPA outperforms the existing state-of-the-art methods on four public RIS benchmarks.

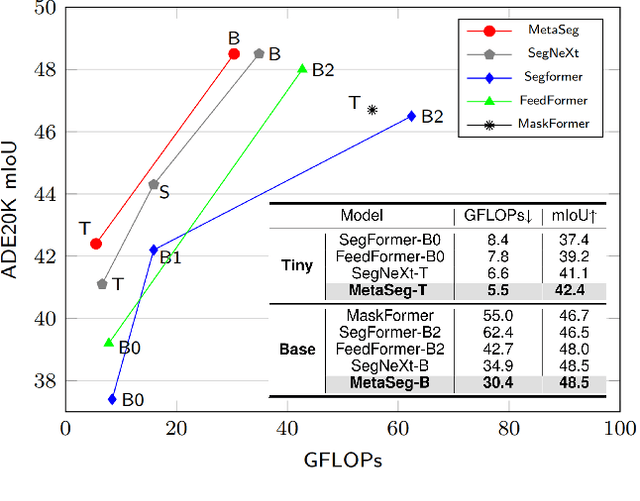

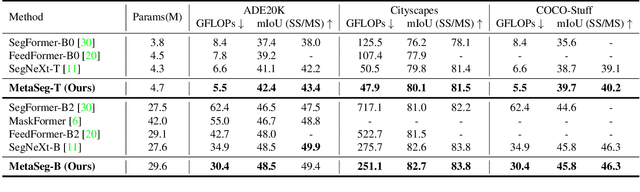

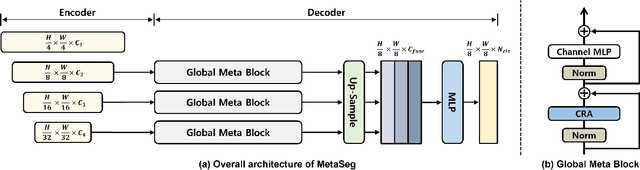

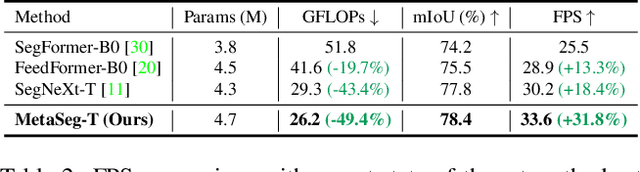

MetaSeg: MetaFormer-based Global Contexts-aware Network for Efficient Semantic Segmentation

Aug 15, 2024

Abstract: Beyond the Transformer, it is important to explore how to exploit the capacity of the MetaFormer, an architecture that is fundamental to the performance improvements of the Transformer. Previous studies have exploited it only for the backbone network. Unlike previous studies, we explore the capacity of the MetaFormer architecture more extensively in the semantic segmentation task. We propose a powerful semantic segmentation network, MetaSeg, which leverages the MetaFormer architecture from the backbone to the decoder. Our MetaSeg shows that the MetaFormer architecture plays a significant role in capturing the useful contexts for the decoder as well as for the backbone. In addition, recent segmentation methods have shown that using a CNN-based backbone for extracting the spatial information and a decoder for extracting the global information is more effective than using a transformer-based backbone with a CNN-based decoder. This motivates us to adopt the CNN-based backbone using the MetaFormer block and design our MetaFormer-based decoder, which consists of a novel self-attention module to capture the global contexts. To consider both global context extraction and the computational efficiency of self-attention for semantic segmentation, we propose a Channel Reduction Attention (CRA) module that reduces the channel dimension of the query and key to one dimension. In this way, our proposed MetaSeg outperforms the previous state-of-the-art methods with more efficient computational costs on popular semantic segmentation benchmarks and a medical image segmentation benchmark, including ADE20K, Cityscapes, COCO-Stuff, and Synapse. The code is available at https://github.com/hyunwoo137/MetaSeg.
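
To make the channel-reduction idea in the abstract concrete, here is a minimal sketch in plain Python. It is not the MetaSeg implementation: the function names, the use of the input tokens directly as values, and the example weights are all assumptions made for illustration. The only point it shows is that when each query and key is reduced to a single scalar, every attention score becomes a scalar product instead of a C-dimensional dot product.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def project_to_scalar(tokens, weight):
    """Reduce each C-dimensional token to one scalar with a dot product."""
    return [sum(t * w for t, w in zip(token, weight)) for token in tokens]


def channel_reduced_attention(tokens, w_q, w_k):
    """Self-attention whose queries and keys have a single channel.

    Assumption: values are the unprojected input tokens; a real module
    would likely use a learned value projection.
    """
    q = project_to_scalar(tokens, w_q)  # N scalars
    k = project_to_scalar(tokens, w_k)  # N scalars
    channels = len(tokens[0])
    out = []
    for qi in q:
        # Each score is one multiplication, not a C-dimensional dot product.
        weights = softmax([qi * kj for kj in k])
        out.append([
            sum(w * tok[c] for w, tok in zip(weights, tokens))
            for c in range(channels)
        ])
    return out


# Hypothetical toy input: three tokens with two channels each.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
result = channel_reduced_attention(tokens, w_q=[0.5, 0.5], w_k=[1.0, -1.0])
```

With N tokens of C channels, the score matrix in this sketch costs on the order of N × N multiplications rather than N × N × C, which is the kind of saving the abstract attributes to reducing the query and key channels.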

Cross-aware Early Fusion with Stage-divided Vision and Language Transformer Encoders for Referring Image Segmentation

Aug 14, 2024

Abstract: Referring segmentation aims to segment a target object related to a natural language expression. Key challenges of this task are understanding the meaning of complex and ambiguous language expressions and determining the relevant regions in the image with multiple objects by referring to the expression. Recent models have focused on the early fusion with the language features at the intermediate stage of the vision encoder, but these approaches have a limitation that the language features cannot refer to the visual information. To address this issue, this paper proposes a novel architecture, Cross-aware early fusion with stage-divided Vision and Language Transformer encoders (CrossVLT), which allows both language and vision encoders to perform the early fusion for improving the ability of the cross-modal context modeling. Unlike previous methods, our method enables the vision and language features to refer to each other's information at each stage to mutually enhance the robustness of both encoders. Furthermore, unlike the conventional scheme that relies solely on the high-level features for the cross-modal alignment, we introduce a feature-based alignment scheme that enables the low-level to high-level features of the vision and language encoders to engage in the cross-modal alignment. By aligning the intermediate cross-modal features in all encoder stages, this scheme leads to effective cross-modal fusion. In this way, the proposed approach is simple but effective for referring image segmentation, and it outperforms the previous state-of-the-art methods on three public benchmarks.

Embedding-Free Transformer with Inference Spatial Reduction for Efficient Semantic Segmentation

Jul 24, 2024

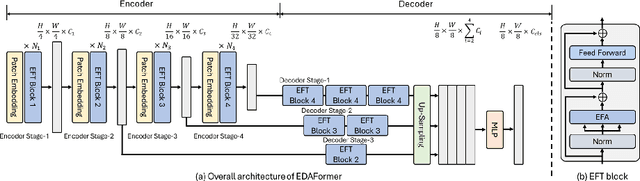

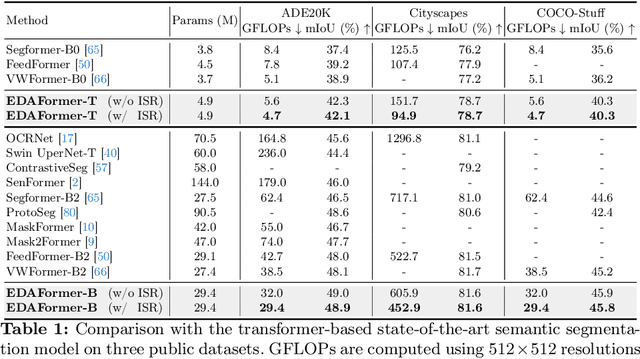

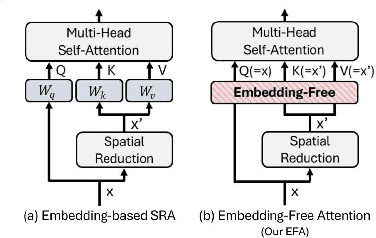

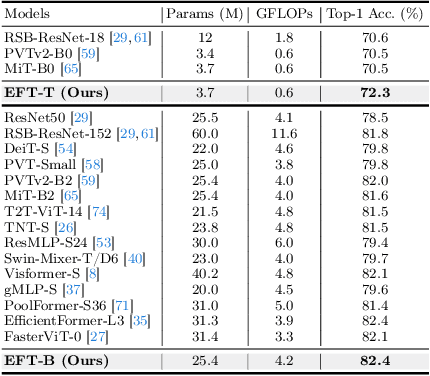

Abstract: We present an Encoder-Decoder Attention Transformer, EDAFormer, which consists of the Embedding-Free Transformer (EFT) encoder and the all-attention decoder leveraging our Embedding-Free Attention (EFA) structure. The proposed EFA is a novel global context modeling mechanism that focuses on functioning the global non-linearity, not the specific roles of the query, key and value. For the decoder, we explore the optimized structure for considering the globality, which can improve the semantic segmentation performance. In addition, we propose a novel Inference Spatial Reduction (ISR) method for computational efficiency. Unlike previous spatial reduction attention methods, our ISR method further reduces the key-value resolution at the inference phase, which can mitigate the computation-performance trade-off gap for efficient semantic segmentation. Our EDAFormer achieves state-of-the-art performance with efficient computation compared to the existing transformer-based semantic segmentation models on three public benchmarks, including ADE20K, Cityscapes and COCO-Stuff. Furthermore, our ISR method reduces the computational cost by up to 61% with minimal mIoU performance degradation on the Cityscapes dataset. The code is available at https://github.com/hyunwoo137/EDAFormer.
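
The inference-time reduction described in the abstract can be illustrated with a small sketch in plain Python. This is not the EDAFormer code: it assumes a 1D token sequence, average pooling as the key-value reduction, and queries, keys and values taken directly from the tokens with no learned projections. All function names and ratios are hypothetical. Under those assumptions the reduction has no parameters, so a larger ratio can be chosen at inference than the one used during training.

```python
import math


def avg_pool_tokens(tokens, ratio):
    """Average consecutive groups of `ratio` tokens (parameter-free)."""
    pooled = []
    for start in range(0, len(tokens), ratio):
        group = tokens[start:start + ratio]
        pooled.append([sum(col) / len(group) for col in zip(*group)])
    return pooled


def attention(queries, keys_values):
    """Scaled dot-product attention; keys and values share one token set."""
    scale = 1.0 / math.sqrt(len(queries[0]))
    out = []
    for q in queries:
        scores = [scale * sum(a * b for a, b in zip(q, k)) for k in keys_values]
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        out.append([
            sum(e / total * kv[c] for e, kv in zip(exps, keys_values))
            for c in range(len(q))
        ])
    return out


def reduced_attention(tokens, ratio):
    """Attend from all tokens to a key-value set pooled by `ratio`."""
    kv = avg_pool_tokens(tokens, ratio)
    return attention(tokens, kv), len(kv)


# Hypothetical toy input: eight tokens with two channels each.
tokens = [[float(i), float(i % 2)] for i in range(8)]
out_train, n_train = reduced_attention(tokens, ratio=2)  # training-time ratio
out_infer, n_infer = reduced_attention(tokens, ratio=4)  # larger ratio at inference
```

In this toy setting the number of key-value tokens drops from 4 to 2 when the ratio is doubled, while the number of output tokens is unchanged. How much accuracy is retained under such a change is an empirical question that the sketch does not address.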
