Heiko Buelow

Improved Fourier Mellin Invariant for Robust Rotation Estimation with Omni-cameras

Feb 12, 2019

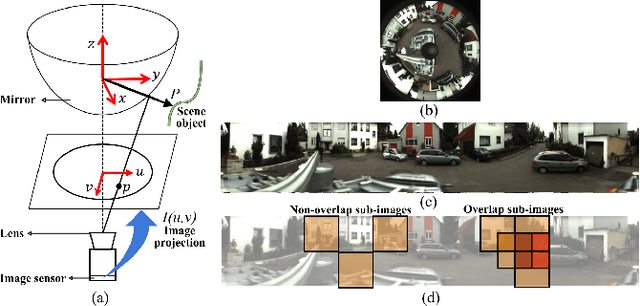

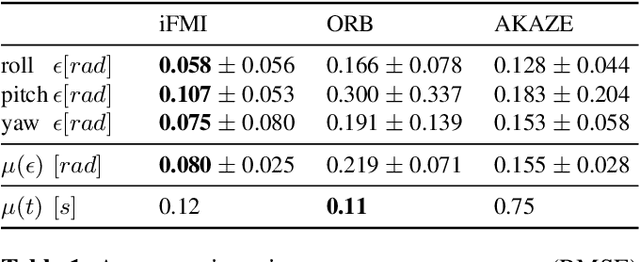

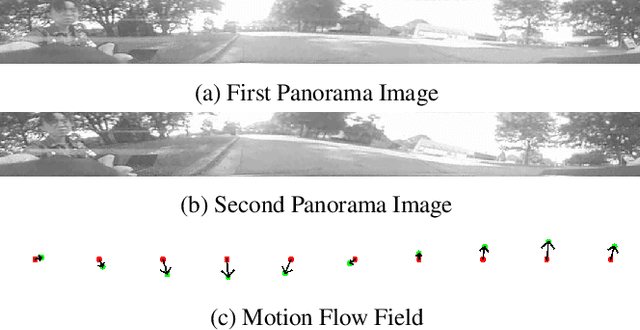

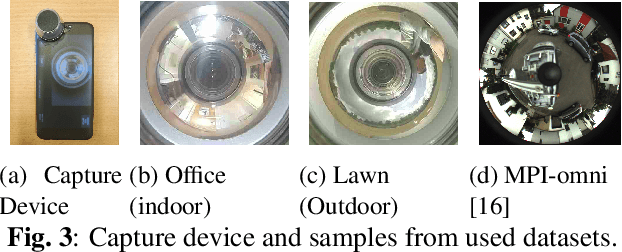

Abstract:Spectral methods such as the improved Fourier Mellin Invariant (iFMI) transform have proved faster, more robust and accurate than feature based methods on image registration. However, iFMI is restricted to work only when the camera moves in 2D space and has not been applied on omni-cameras images so far. In this work, we extend the iFMI method and apply a motion model to estimate an omni-camera's pose when it moves in 3D space. This is particularly useful in field robotics applications to get a rapid and comprehensive view of unstructured environments, and to estimate robustly the robot pose. In the experiment section, we compared the extended iFMI method against ORB and AKAZE feature based approaches on three datasets showing different type of environments: office, lawn and urban scenery (MPI-omni dataset). The results show that our method boosts the accuracy of the robot pose estimation two to four times with respect to the feature registration techniques, while offering lower processing times. Furthermore, the iFMI approach presents the best performance against motion blur typically present in mobile robotics.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge