Hanlin Hu

opXRD: Open Experimental Powder X-ray Diffraction Database

Mar 07, 2025

Abstract: Powder X-ray diffraction (pXRD) experiments are a cornerstone of materials structure characterization. Despite their widespread application, the analysis of pXRD diffractograms remains difficult to automate and is a bottleneck for high-throughput discovery in self-driving labs. Machine learning promises to resolve this bottleneck by enabling automated powder diffraction analysis. A notable difficulty in applying machine learning to this domain is the lack of sufficiently large experimental datasets, which has constrained researchers to train primarily on simulated data. However, models trained on simulated pXRD patterns have shown limited generalization to experimental patterns, particularly to low-quality patterns with high noise levels and elevated backgrounds. With the Open Experimental Powder X-Ray Diffraction Database (opXRD), we provide an openly available and easily accessible dataset of labeled and unlabeled experimental powder diffractograms. Labeled opXRD data can be used to evaluate model performance on experimental data, and unlabeled opXRD data can help improve that performance, e.g., through transfer learning methods. We collected \numpatterns diffractograms, 2179 of them labeled, from a wide spectrum of materials classes. We hope this ongoing effort can guide machine learning research toward fully automated analysis of pXRD data and thus enable future self-driving materials labs.

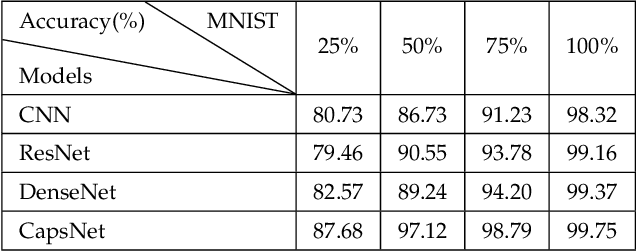

Assessing four Neural Networks on Handwritten Digit Recognition Dataset (MNIST)

Nov 16, 2018

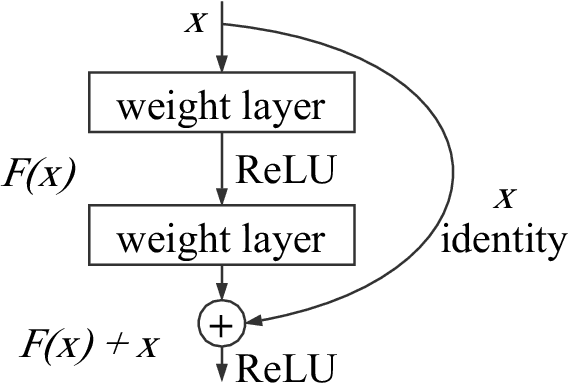

Abstract: Although image recognition has been a research topic for many years, it continues to attract strong interest from researchers. However, some papers tend to compare models on only one or two datasets, either because of time constraints or because the model is tailored to a specific task. As a result, it is hard to understand how well a given model generalizes across the image recognition field. In this paper, we compare four neural networks on the MNIST dataset using different data divisions. Three of them are a Convolutional Neural Network (CNN), a Deep Residual Network (ResNet), and a Dense Convolutional Network (DenseNet); the fourth is our improvement on the CNN baseline, which introduces the Capsule Network (CapsNet) to the image recognition area. We show that although the previous models perform quite well in this area, our retrofitting can be applied to achieve better performance. CapsNet achieves an accuracy of 99.75%, which is the best result published so far. Another encouraging result is that CapsNet needs only a small amount of data to achieve excellent performance. In future work, we will apply CapsNet's generalization ability to other image recognition fields.
