Habiba Farrukh

New Metrics to Evaluate the Performance and Fairness of Personalized Federated Learning

Jul 28, 2021

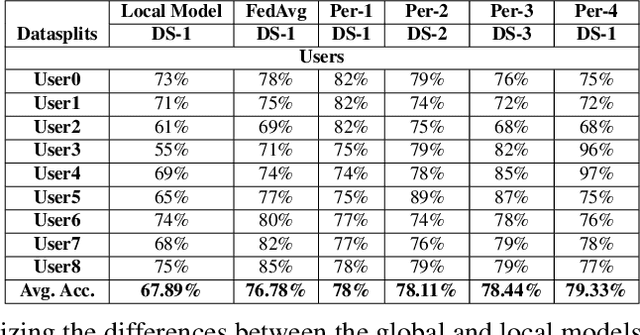

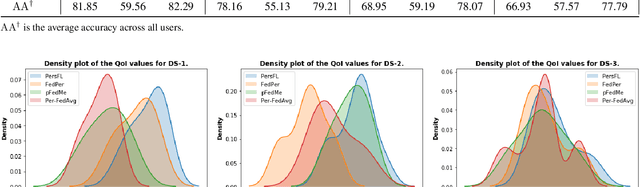

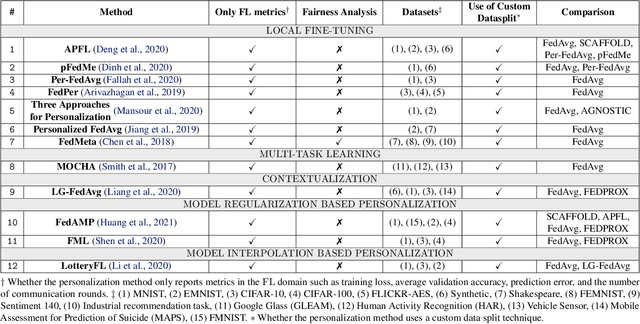

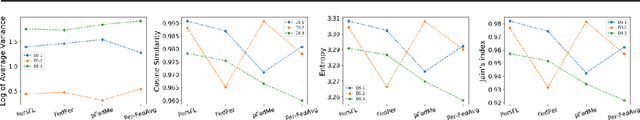

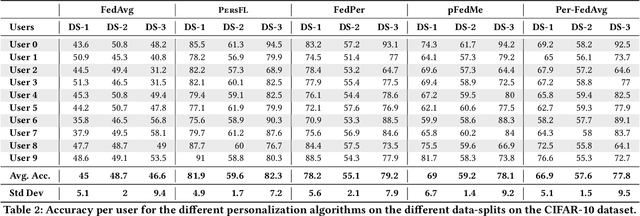

Abstract: In Federated Learning (FL), the clients learn a single global model (FedAvg) through a central aggregator. In this setting, the non-IID distribution of the data across clients prevents the global FL model from delivering good performance on the local data of each client. Personalized FL aims to address this problem by finding a personalized model for each client. Recent works widely report the average personalized model accuracy on a particular data split of a dataset to evaluate the effectiveness of their methods. However, considering the multitude of personalization approaches proposed, it is critical to study the per-user personalized accuracy and the accuracy improvements among users with an equitable notion of fairness. To address these issues, we present a set of performance and fairness metrics intended to assess the quality of personalized FL methods. We apply these metrics to four recently proposed personalized FL methods, PersFL, FedPer, pFedMe, and Per-FedAvg, on three different data splits of the CIFAR-10 dataset. Our evaluations show that the personalized model with the highest average accuracy across users may not necessarily be the fairest. Our code is available at https://tinyurl.com/1hp9ywfa for public use.
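The contrast between average accuracy and per-user fairness can be illustrated with a minimal Python sketch. The abstract does not define the paper's metrics, so the statistics below (mean, standard deviation, worst-user accuracy, fraction of users who improve over the global model) are illustrative assumptions, and the function name `summarize_personalization` and all accuracy values are hypothetical.

```python
from statistics import mean, pstdev


def summarize_personalization(global_acc, personalized_acc):
    """Summarize per-user accuracies of a personalized FL method.

    global_acc[i] and personalized_acc[i] are the accuracies of the global
    and personalized models on user i's local test data.
    These statistics are illustrative, not the paper's definitions.
    """
    if not global_acc or len(global_acc) != len(personalized_acc):
        raise ValueError("need one global and one personalized accuracy per user")
    # Per-user accuracy improvement over the global model.
    gains = [p - g for g, p in zip(global_acc, personalized_acc)]
    return {
        "mean_accuracy": mean(personalized_acc),
        # Spread across users: a lower value means more uniform accuracy.
        "accuracy_std": pstdev(personalized_acc),
        "min_accuracy": min(personalized_acc),
        "fraction_improved": sum(g > 0 for g in gains) / len(gains),
    }


# Hypothetical accuracies for three users under two methods.
global_acc = [0.50, 0.50, 0.50]
method_a = summarize_personalization(global_acc, [0.90, 0.90, 0.30])
method_b = summarize_personalization(global_acc, [0.68, 0.70, 0.69])
```

With these made-up numbers, the first method has the higher mean accuracy but a much larger spread and one user who ends up worse off than with the global model, which is the kind of gap that an average alone hides.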

Unifying Distillation with Personalization in Federated Learning

May 31, 2021

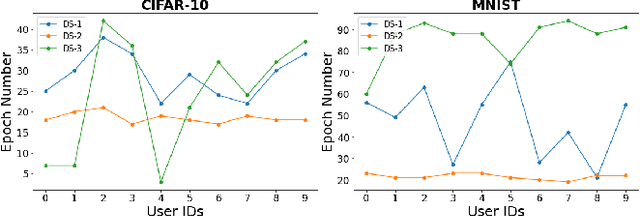

Abstract: Federated learning (FL) is a decentralized privacy-preserving learning technique in which clients learn a joint collaborative model through a central aggregator without sharing their data. In this setting, all clients learn a single common predictor (FedAvg), which does not generalize well on each client's local data due to the statistical data heterogeneity among clients. In this paper, we address this problem with PersFL, a discrete two-stage personalized learning algorithm. In the first stage, PersFL finds the optimal teacher model of each client during the FL training phase. In the second stage, PersFL distills the useful knowledge from optimal teachers into each user's local model. The teacher model provides each client with a rich, high-level representation that the client can easily adapt to its local model, which overcomes the statistical heterogeneity present across clients. We evaluate PersFL on the CIFAR-10 and MNIST datasets using three data-splitting strategies to control the diversity between clients' data distributions. We empirically show that PersFL outperforms FedAvg and three state-of-the-art personalization methods, pFedMe, Per-FedAvg, and FedPer, on the majority of data splits with minimal communication cost. Further, we study the performance of PersFL on different distillation objectives, how this performance is affected by the equitable notion of fairness among clients, and the number of required communication rounds. PersFL code is available at https://tinyurl.com/hdh5zhxs for public use and validation.
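The second stage, distilling a teacher model into a client's local model, can be sketched with a generic knowledge-distillation loss. The abstract does not specify PersFL's objectives, so this is the common temperature-scaled formulation (KL divergence to the teacher plus cross-entropy on the true label), shown only as an assumption. The names `distillation_loss`, `temperature`, and `alpha` are hypothetical.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax, shifted by the max for numerical stability."""
    scaled = [z / temperature for z in logits]
    top = max(scaled)
    exps = [math.exp(z - top) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, label,
                      temperature=2.0, alpha=0.5):
    """Generic distillation loss for one example (not PersFL's exact objective).

    alpha weights the teacher-matching term; (1 - alpha) weights the
    ordinary cross-entropy on the ground-truth label.
    """
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    # KL(teacher || student) on the softened distributions.
    kl = sum(t * math.log(t / s) for t, s in zip(p_teacher, p_student) if t > 0)
    # Cross-entropy of the student's unsoftened prediction against the label.
    ce = -math.log(softmax(student_logits)[label])
    # The temperature**2 factor keeps the soft-target gradients on a comparable scale.
    return alpha * temperature ** 2 * kl + (1 - alpha) * ce


# Hypothetical logits for a three-class example whose true class is 0.
teacher = [4.0, 1.0, 0.5]
loss_far = distillation_loss([0.2, 0.1, 0.3], teacher, label=0)
loss_near = distillation_loss([3.9, 1.1, 0.4], teacher, label=0)
```

In this sketch a student whose logits are close to the teacher's receives a smaller loss than one whose logits are far from them, and a student that matches the teacher exactly has a zero teacher-matching term.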
